For SaaS (software as a service) companies, monitoring and managing product data is crucial. Companies that fail to do so often notice an incident only after the damage is done. For struggling companies, this can be fatal.

To prevent this, I built an n8n workflow connected to the company's database that analyzes the data daily and flags any incident. When one is detected, a logging and notification system kicks in so the investigation can start as soon as possible. I also built a dashboard so the team can see the results in real time.

Context

A B2B SaaS platform specializing in data visualization and automated reporting serves roughly 4,500 customers across three segments:

- Small business

- Mid-market

- Enterprise

Weekly product usage exceeds 30,000 active accounts, with strong dependencies on real-time data (pipelines, APIs, dashboards, background jobs).

The product team works closely with:

- Growth (acquisition, activation, onboarding)

- Revenue (pricing, ARPU, churn)

- SRE/Infrastructure (reliability, availability)

- Data Engineering (pipelines, data freshness)

- Support and customer success

Last year, the company observed a rising number of incidents. Between October and December, the total number of incidents rose from 250 to 450, an 80% increase. Among them were more than 45 high and critical incidents that affected thousands of users. The most affected metrics were:

- api_error_rate

- checkout_success_rate

- net_mrr_delta

- data_freshness_lag_minutes

- churn_rate

When incidents occur, customers judge a company by how it handles them and reacts. The product team, in turn, is judged by how it manages the incident and ensures it won’t happen again.

An incident can happen once, but the same incident happening twice is a fault.

Business impact

- More volatility in net recurring revenue

- A noticeable decline in active accounts over several consecutive weeks

- Multiple enterprise customers reporting and complaining about outdated dashboards (up to 45+ minutes late)

In total, between 30,000 and 60,000 users were impacted. Customer confidence in product reliability also suffered: among non-renewals, 45% cited it as their main reason.

Why is this issue critical?

As a data platform, the company cannot afford to have:

- Slow or stale data

- API errors

- Pipeline failures

- Missed or delayed synchronizations

- Inaccurate dashboards

- Churn (downgrades, cancellations)

Internally, the incidents were spread across several systems:

- Notion for product tracking

- Slack for alerts

- PostgreSQL for storage

- Google Sheets for customer support

There was no single source of truth. The product team had to manually cross-reference and double-check all the data, searching for trends and piecing them together. Each incident became an investigation and a puzzle to solve, costing the team many hours every week.

Solution: automating an incident alert system with n8n and building a data dashboard, so that incidents are detected, tracked, resolved and understood.

Why n8n?

There are currently several automation platforms and solutions, but not all of them match the needs and requirements. Selecting the right one for the need is essential.

The specific requirements were direct access to a database without needing an API (n8n also supports APIs), visual workflows and nodes that a non-technical person can understand, custom-coded nodes, a self-hosted option and cost-effectiveness at scale. So, among existing platforms such as Zapier, Make and n8n, the choice fell on the last.

Designing the Product Health Score

First, the key metrics need to be determined and calculated.

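Impact score: simple function of severity + delta + scale of users

    import numpy as np

    # severity_weights maps each severity label to a numeric weight
    # (its values are not defined in this excerpt)
    impact_score = (
        severity_weights[severity] * 10
        + abs(delta_pct) * 0.8
        + np.log1p(affected_users)
    )
    impact_score = round(float(impact_score), 2)

Priority: derived from severity + impact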

    if severity == "critical" or impact_score > 60:
        priority = "P1"
    elif severity == "high" or impact_score > 40:
        priority = "P2"
    elif severity == "medium":
        priority = "P3"
    else:
        priority = "P4"

Product health score

    from math import log

    def compute_product_health_score(incidents, metrics):
        """
        Score = 100 - sum(penalties)
        Production version handles 15+ factors
        """
        # incident_rate, users and the two calculate_* helpers are not
        # defined in this simplified excerpt.
        # Key insight: penalties have different max weights
        penalties = {
            'volume': min(40, incident_rate * 13),           # 40% max
            'severity': calculate_severity_sum(incidents),   # 25% max
            'users': min(15, log(users) / log(50000) * 15),  # 15% max
            'trends': calculate_business_trends(metrics),    # 20% max
        }
        score = 100 - sum(penalties.values())

        if score >= 80:
            return score, "🟢 Stable"
        elif score >= 60:
            return score, "🟡 Under watch"
        else:
            return score, "🔴 At risk"

Designing the Automated Detection System with n8n

This system is composed of 4 streams:

- Stream 1: retrieves recent revenue metrics, identifies unusual spikes in churn MRR, and creates incidents when needed.

    const rows = items.map(item => item.json);

    // Need 7 baseline points plus the latest value
    if (rows.length < 8) {
      return [];
    }

    rows.sort((a, b) => new Date(a.date) - new Date(b.date));

    const values = rows.map(r => parseFloat(r.churn_mrr || 0));
    const lastIndex = rows.length - 1;
    const lastRow = rows[lastIndex];
    const lastValue = values[lastIndex];

    // Baseline: the 7 values preceding the latest one
    const window = 7;
    const baselineValues = values.slice(lastIndex - window, lastIndex);

    const mean = baselineValues.reduce((s, v) => s + v, 0) / baselineValues.length;
    const variance = baselineValues
      .map(v => Math.pow(v - mean, 2))
      .reduce((s, v) => s + v, 0) / baselineValues.length;
    const std = Math.sqrt(variance);

    // Flat baseline: the z-score is undefined
    if (std === 0) {
      return [];
    }

    const z = (lastValue - mean) / std;
    const deltaPct = mean === 0 ? null : ((lastValue - mean) / mean) * 100;

    if (z > 2) {
      const anomaly = {
        date: lastRow.date,
        metric_name: 'churn_mrr',
        baseline_value: mean,
        actual_value: lastValue,
        z_score: z,
        delta_pct: deltaPct,
        severity:
          deltaPct !== null && deltaPct > 50 ? 'high'
          : deltaPct !== null && deltaPct > 25 ? 'medium'
          : 'low',
      };
      return [{ json: anomaly }];
    }

    return [];

- Stream 2: Monitors feature usage metrics to detect sudden drops in adoption or engagement.

Incidents are logged with severity and context, and alerts are sent to the product team.

- Stream 3: For every open incident, collects additional context from the database (e.g., churn by country or plan), uses AI to generate a clear root cause hypothesis and suggested next steps, sends a summarized report to Slack and email, and updates the incident.

- Stream 4: Every morning, the workflow compiles all incidents from the previous day, creates a Notion page for documentation and sends a report to the leadership team.

We deployed similar detection nodes for 8 different metrics, adjusting the z-score direction based on whether increases or decreases were problematic.
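As a minimal sketch of what "adjusting the z-score direction" can look like, here is the same 7-point baseline logic written in Python with a `direction` switch. The function name, the `direction` parameter and the sample values are assumptions made for illustration; the actual n8n nodes may be organized differently.

```python
from math import sqrt

def detect_anomaly(values, direction="up", window=7, threshold=2.0):
    """Return a dict describing the anomaly, or None if the last value looks normal.

    direction="up" flags unusually high values (e.g. churn, error rates);
    direction="down" flags unusually low values (e.g. checkout success).
    """
    if len(values) < window + 1:
        return None  # not enough history to build a baseline

    baseline = values[-(window + 1):-1]  # the `window` points before the latest one
    latest = values[-1]

    mean = sum(baseline) / len(baseline)
    variance = sum((v - mean) ** 2 for v in baseline) / len(baseline)
    std = sqrt(variance)
    if std == 0:
        return None  # flat baseline: the z-score is undefined

    z = (latest - mean) / std
    # Flip the sign so that a "bad" deviation is always positive.
    signed_z = z if direction == "up" else -z
    if signed_z <= threshold:
        return None

    return {"baseline": mean, "actual": latest, "z_score": round(z, 2)}

# Hypothetical checkout_success_rate history: a drop is the problem here.
history = [0.97, 0.96, 0.97, 0.98, 0.97, 0.96, 0.97, 0.80]
print(detect_anomaly(history, direction="down"))
```

Keeping the direction as a parameter means one function can serve both kinds of metric, instead of maintaining a separate copy of the logic per metric.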

The AI agent receives additional context through SQL queries (churn by country, by plan, by segment) to generate more accurate root cause hypotheses. All of this data is then gathered and sent in a daily email.

The workflow generates daily summary reports aggregating all incidents by metric and severity, distributed via email and Slack to stakeholders.
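The aggregation behind such a daily summary can be sketched in a few lines of Python. The field names `metric_name` and `severity` follow the anomaly object produced by the detection code; the function name, the report layout and the sample incidents are assumptions for illustration only.

```python
from collections import Counter

def summarize_incidents(incidents):
    """Count incidents per (metric, severity) pair and render a plain-text summary."""
    counts = Counter((i["metric_name"], i["severity"]) for i in incidents)
    lines = [f"Daily incident summary: {len(incidents)} incident(s)"]
    # Sort by metric name, then severity, so the report is stable from day to day.
    for (metric, severity), n in sorted(counts.items()):
        lines.append(f"- {metric} [{severity}]: {n}")
    return "\n".join(lines)

# Hypothetical sample data, for illustration only.
sample = [
    {"metric_name": "churn_mrr", "severity": "high"},
    {"metric_name": "churn_mrr", "severity": "high"},
    {"metric_name": "api_error_rate", "severity": "medium"},
]
print(summarize_incidents(sample))
```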

The dashboard

The dashboard consolidates all signals into one place. An automatic product health score on a 0-100 scale is calculated from:

- incident volume

- severity weighting

- open vs resolved status

- number of users impacted

- business trends (MRR)

- usage trends (active accounts)

A segment breakdown to identify which customer groups are the most affected:

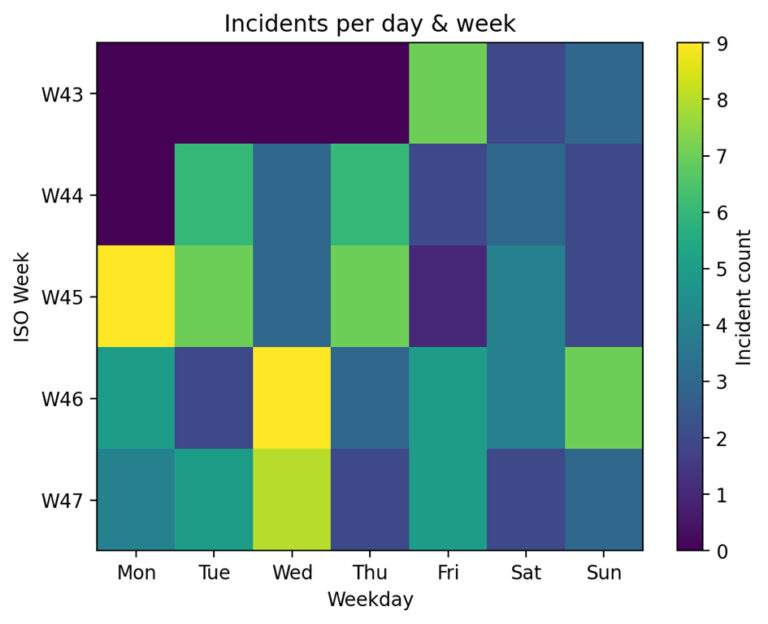

A weekly heatmap and time series trend charts to identify recurring patterns:

And a detailed incident view composed of:

- Business context

- Dimension & segment

- Root cause hypothesis

- Incident type

- An AI summary, coming from the n8n workflow, to accelerate communication and diagnosis

Diagnosis:

The product health score rated the current product at 24/100 (status “At risk”), with:

- 45 High & Critical incidents

- 36 incidents during the last 7 days

- 33,385 estimated affected users

- Negative trend in churn and DAU

- Several spikes in api_error_rate and drops in checkout_success_rate
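To see how a single incident turns into figures like these, the sketch below wraps the impact-score formula and the priority thresholds from the scoring section into one Python function. The severity weights are assumed values chosen only for illustration, since the article does not list the real ones, and `math.log1p` stands in for `np.log1p` (equivalent for a single number).

```python
import math

# Assumed weights, for illustration only; the real values are not given in the article.
SEVERITY_WEIGHTS = {"low": 1, "medium": 2, "high": 3, "critical": 4}

def score_incident(severity, delta_pct, affected_users):
    """Return (impact_score, priority) using the article's formula and thresholds."""
    impact = (
        SEVERITY_WEIGHTS[severity] * 10
        + abs(delta_pct) * 0.8
        + math.log1p(affected_users)
    )
    impact = round(impact, 2)

    if severity == "critical" or impact > 60:
        priority = "P1"
    elif severity == "high" or impact > 40:
        priority = "P2"
    elif severity == "medium":
        priority = "P3"
    else:
        priority = "P4"
    return impact, priority

# Hypothetical incident: high severity, +35% versus baseline, 1,200 users affected.
print(score_incident("high", 35.0, 1200))
```

Note that the logarithm keeps the user count from dominating the score: going from 1,000 to 10,000 affected users adds only a couple of points, while severity and the size of the deviation carry most of the weight.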

Biggest impact per segment:

- Enterprise → critical data freshness issues

- Mid-Market → recurring incidents on feature adoption

- SMB → fluctuations in onboarding & activation

Impact

The goal of this dashboard is not only to analyze incidents and identify patterns, but also to give the organization a detailed overview so it can react faster.

We observed a 35% reduction in critical incidents after 2 months. Thanks to the unified data, the SRE and Data teams identified the recurring root cause of some major issues, fixed it and kept monitoring it afterwards. Incident response time also improved dramatically, because the AI summaries and the metrics showed the teams where to investigate.

An AI-Powered Root Cause Analysis

Using AI can save a lot of time, especially when an investigation spans different databases and you don’t know where to start. Adding an AI agent to the loop saves a considerable amount of time thanks to how quickly it processes data. This requires a detailed prompt, because the agent takes over work a human would otherwise do: to produce accurate results, the AI needs to understand the context and receive some guidance. Otherwise, it could investigate in the wrong direction and draw irrelevant conclusions. Don’t forget to make sure you fully understand the cause of the issue yourself.

You are a Product Data & Revenue Analyst.

We detected an incident:

{{ $json.incident }}

Here is churn MRR by country (top offenders first):

{{ $json.churn_by_country }}

Here is churn MRR by plan:

{{ $json.churn_by_plan }}

1. Summarize what happened in simple business language.

2. Identify the most impacted segments (country, plan).

3. Propose 3-5 plausible hypotheses (product issues, price changes, bugs, market events).

4. Propose 3 concrete next steps for the Product team.

Once the results are obtained, a final check is necessary to ensure the analysis was done correctly. AI is a helpful tool, but it can also go wrong, so do not rely on it alone. For this system, the AI suggests the top 3 likely root causes for each incident.
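The prompt above expects the context tables to arrive sorted with the top offenders first. In n8n the `{{ $json... }}` expressions fill in the values; outside n8n, the same assembly can be sketched in Python as follows. The row fields (`country`, `plan`, `churn_mrr`), the helper names and the sample rows are assumptions for illustration, and the template reproduces only the context part of the prompt.

```python
import json

# Abbreviated version of the prompt: context only, without the numbered tasks.
PROMPT_TEMPLATE = """You are a Product Data & Revenue Analyst.
We detected an incident:
{incident}
Here is churn MRR by country (top offenders first):
{churn_by_country}
Here is churn MRR by plan:
{churn_by_plan}
"""

def top_offenders(rows, key, value="churn_mrr"):
    """Sum `value` per `key` and return (key, total) pairs, largest total first."""
    totals = {}
    for row in rows:
        totals[row[key]] = totals.get(row[key], 0.0) + float(row[value])
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)

def build_prompt(incident, rows):
    """Fill the template with the incident and the ranked context tables."""
    return PROMPT_TEMPLATE.format(
        incident=json.dumps(incident, indent=2),
        churn_by_country=json.dumps(top_offenders(rows, "country")),
        churn_by_plan=json.dumps(top_offenders(rows, "plan")),
    )

# Hypothetical rows, for illustration only.
rows = [
    {"country": "FR", "plan": "pro", "churn_mrr": 100},
    {"country": "US", "plan": "pro", "churn_mrr": 300},
    {"country": "FR", "plan": "basic", "churn_mrr": 50},
]
print(build_prompt({"metric_name": "churn_mrr", "severity": "high"}, rows))
```

Printing the assembled prompt before sending it is also a cheap way to support the final human check: you can see exactly which context the agent was given.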

The system also brought better alignment with the leadership team and reporting based on the data. Decisions became more data-driven, supported by deeper analyses rather than intuition or reports split by segment. This also led to an improved process.

Conclusion & takeaways

In conclusion, building a product health dashboard has several benefits:

- Detect negative trends (MRR, DAU, engagement) earlier

- Reduce critical incidents by identifying root-cause patterns

- Understand real business impact (users affected, churn risk)

- Prioritize the product roadmap based on risk and impact

- Align Product, Data, SRE, and Revenue around a single source of truth

That’s exactly what many companies lack: a unified data approach.

Using the n8n workflow helped in two ways: resolving issues as soon as possible and gathering the data in one place. The automation also reduced the time spent on this task while the business kept running.

Lessons for Product teams

- Start simple: an automation system and a dashboard need a clearly defined scope. You are not building a product for customers, you are building a product for your colleagues. It is essential to understand each team’s needs, since they are your core users. With that in mind, first build an MVP that answers those core needs. Then you can improve it by adding features or metrics.

- Unified metrics matter more than perfect detection: it is the unified metrics that save time and build shared understanding. Good detection is essential, but if the metrics are inaccurate or scattered, the time saved is lost again while teams hunt for them across different environments.

- Automation saves 10 hours per week of manual investigation: by automating manual, recurring tasks, you save hours of investigation. With the incident alert workflow, we know right away where to investigate first, what the likely cause is, and even some actions to take.

- Document everything: proper, detailed documentation is a must and gives all parties involved a clear understanding and view of what is happening. Documentation is also a piece of data.

Who am I?

I’m Yassin, a Project Manager who expanded into Data Science to bridge the gap between business decisions and technical systems. Learning Python, SQL, and analytics has enabled me to design product insights and automation workflows that connect what teams need with how data behaves. Let’s connect on LinkedIn.