Yesterday, we worked with Isolation Forest, which is an Anomaly Detection method.

Today, we look at another algorithm that has the same objective. But unlike Isolation Forest, it does not build trees.

It is called LOF, or Local Outlier Factor.

People often summarize LOF in one sentence: does this point live in a region of lower density than its neighbors?

This sentence is actually tricky to understand. I struggled with it for a long time.

However, there is one part that is immediately easy to understand,

and we will see that it becomes the key point:

there is a notion of neighbors.

And as soon as we talk about neighbors,

we naturally go back to distance-based models.

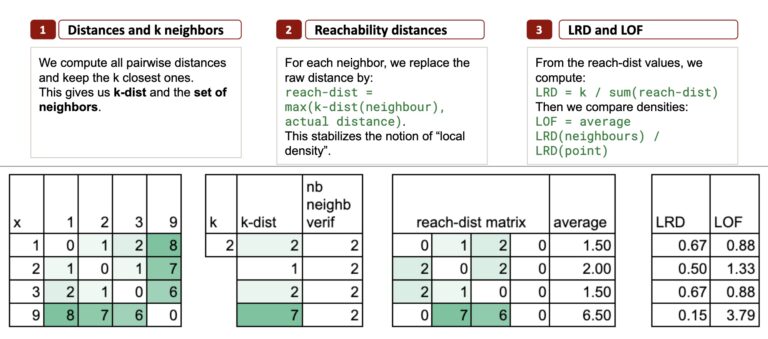

We will explain this algorithm in 3 steps.

To keep things very simple, we will use the same dataset again:

1, 2, 3, 9

Do you remember that I have the copyright on this dataset? We used it for Isolation Forest, and we will use it again for LOF, so we can also compare the two results.

All the Excel files are available through this Ko-fi link. Your support means a lot to me. The price will increase during the month, so early supporters get the best value.

Step 1 – k Neighbors and k-distance

LOF begins with something extremely simple:

Look at the distances between points.

Then find the k nearest neighbors of each point.

Let us take k = 2, just to keep things minimal.

Nearest neighbors for each point

- Point 1 → neighbors: 2 and 3

- Point 2 → neighbors: 1 and 3

- Point 3 → neighbors: 2 and 1

- Point 9 → neighbors: 3 and 2
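If you want to check this list outside Excel, here is a minimal Python sketch. It assumes one-dimensional data with distinct values and uses the absolute difference as the distance. The helper name `k_nearest_neighbors` is hypothetical. One caveat: when several points tie at the k-th distance, the original LOF definition keeps all of them, while this sketch keeps exactly k and breaks ties by dataset order. That makes no difference for 1, 2, 3, 9.

```python
# Toy dataset from the article, and the number of neighbors
data = [1, 2, 3, 9]
k = 2

def k_nearest_neighbors(points, k):
    """Return {point: its k nearest neighbors}, using 1-D absolute distance.

    Assumption: values are distinct, so `o != p` only excludes p itself.
    """
    neighbors = {}
    for p in points:
        others = [o for o in points if o != p]
        # sort() is stable, so tied points keep their original dataset order
        others.sort(key=lambda o: abs(p - o))
        neighbors[p] = others[:k]
    return neighbors

for p, nbrs in k_nearest_neighbors(data, k).items():
    print(p, "->", nbrs)
```

For point 2, both 1 and 3 sit at distance 1, so they are both kept as its two neighbors.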

Already, we see a clear structure emerging:

- 1, 2, and 3 form a tight cluster

- 9 lives alone, far from the others

The k-distance: a local radius

The k-distance is simply the largest distance among the k nearest neighbors.

And this is actually the key point.

Because this single number tells you something very concrete:

the local radius around the point.

If k-distance is small, the point is in a dense area.

If k-distance is large, the point is in a sparse area.

With just this one measure, you already have a first signal of “isolation”.

Here, we use the idea of “k nearest neighbors”, which of course reminds us of k-NN (the classifier or regressor).

The context here is different, but the calculation is exactly the same.

And if you think of k-means, do not confuse the two:

the “k” in k-means has nothing to do with the “k” here.
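To see that the neighbor search really is the one k-NN uses, here is a small sketch with scikit-learn's `NearestNeighbors`. It assumes scikit-learn and NumPy are installed. When you call `kneighbors()` without a query, scikit-learn returns the neighbors of each training point and does not count a point as its own neighbor.

```python
import numpy as np
from sklearn.neighbors import NearestNeighbors

# scikit-learn expects a 2-D array: one row per point, one column per feature
X = np.array([1, 2, 3, 9], dtype=float).reshape(-1, 1)

# The same neighbor search a k-NN classifier or regressor would run, with k = 2
nn = NearestNeighbors(n_neighbors=2).fit(X)

# No query passed: neighbors of each training point, excluding the point itself
distances, indices = nn.kneighbors()

for i in range(len(X)):
    print(X[i, 0], "->", X[indices[i], 0], "distances:", distances[i])
```

The distances come back sorted, so the last column of `distances` is already the distance to the k-th neighbor.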

The k-distance calculation

For point 1, the two nearest neighbors are 2 and 3 (distances 1 and 2), so k-distance(1) = 2.

For point 2, neighbors are 1 and 3 (both at distance 1), so k-distance(2) = 1.

For point 3, the two nearest neighbors are 2 and 1 (distances 1 and 2), so k-distance(3) = 2.

For point 9, the neighbors are 3 and 2 (distances 6 and 7), so k-distance(9) = 7. This is huge compared to all the others.
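The same arithmetic fits in a few lines of Python. This is a minimal sketch, assuming 1-D data with distinct values. The helper name `k_distance` is hypothetical.

```python
data = [1, 2, 3, 9]
k = 2

def k_distance(p, points, k):
    """Distance from p to its k-th nearest neighbor: the 'local radius'."""
    # Assumption: values are distinct, so `o != p` only skips p itself
    dists = sorted(abs(p - o) for o in points if o != p)
    return dists[k - 1]

k_dist = {p: k_distance(p, data, k) for p in data}
print(k_dist)  # {1: 2, 2: 1, 3: 2, 9: 7}
```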

In Excel, we can build a pairwise distance matrix and read the k-distance of each point from it.
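The equivalent of that Excel grid in NumPy is a single broadcasting step. Here is a sketch, assuming 1-D data with distinct values.

```python
import numpy as np

x = np.array([1, 2, 3, 9], dtype=float)
k = 2

# Pairwise distance matrix: entry [i, j] is |x_i - x_j|, like the Excel grid
D = np.abs(x[:, None] - x[None, :])

# Sort each row: column 0 is the point itself (distance 0),
# so the k-th nearest neighbor sits in column k
k_dist = np.sort(D, axis=1)[:, k]

print(D)
print(k_dist)  # [2. 1. 2. 7.]
```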

Step 2 – Reachability Distances

For this step, I will simply define the calculations and apply the formulas in Excel, because, to be honest, I have never found a truly intuitive way to explain the results.

So, what is “reachability distance”?

For a point p and a neighbor o, we define this reachability distance as:

reach-dist(p, o) = max(k-dist(o), distance(p, o))

Why take the maximum?

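The purpose of the reachability distance is to stabilize the density comparison.

If p lies very close to its neighbor o, closer than o's own k-distance, then the actual distance is replaced by k-dist(o). In other words, we do not allow an unrealistically small distance: any point inside o's local radius is treated as if it were exactly that radius away.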

In particular, for point 2:

- Distance to 1 = 1, but k-distance(1) = 2 → reach-dist(2, 1) = 2

- Distance to 3 = 1, but k-distance(3) = 2 → reach-dist(2, 3) = 2

Both neighbors force the reachability distance upward.
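Here is the same rule as a small Python sketch, checked on the point 2 example. It assumes 1-D data with distinct values. The helper names `k_distance` and `reach_dist` are hypothetical.

```python
data = [1, 2, 3, 9]
k = 2

def k_distance(p, points, k):
    # Distance from p to its k-th nearest neighbor
    return sorted(abs(p - o) for o in points if o != p)[k - 1]

def reach_dist(p, o, points, k):
    # reach-dist(p, o) = max(k-dist(o), distance(p, o))
    return max(k_distance(o, points, k), abs(p - o))

print(reach_dist(2, 1, data, k))  # 2: distance is 1, but k-dist(1) = 2
print(reach_dist(2, 3, data, k))  # 2: distance is 1, but k-dist(3) = 2
print(reach_dist(9, 3, data, k))  # 6: distance 6 already exceeds k-dist(3) = 2
```

For a far-away point like 9, the maximum is just the real distance. The floor only matters when p sits inside o's local radius.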

In Excel, we will keep a matrix format to display the reachability distances: one point compared to all the others.
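The same matrix layout can be sketched in NumPy, assuming 1-D data with distinct values. Row i holds reach-dist(x_i, o) for every other point o. The matrix is not symmetric, because each column uses the k-distance of the column point o.

```python
import numpy as np

x = np.array([1, 2, 3, 9], dtype=float)
k = 2

D = np.abs(x[:, None] - x[None, :])   # pairwise distances
k_dist = np.sort(D, axis=1)[:, k]     # k-distance of each point (column 0 is self)

# R[i, j] = reach-dist(x_i, x_j) = max(k-dist(x_j), d(x_i, x_j));
# k_dist[None, :] repeats the column point's k-distance down every row
R = np.maximum(k_dist[None, :], D)
np.fill_diagonal(R, np.nan)           # a point is not compared with itself

print(R)
```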

Average reachability distance

For each point, we can now compute the average value, which tells us: on average, how far do I need to travel to reach my local neighborhood?

Now, do you notice something? Point 2 has a larger average reachability distance (2) than points 1 and 3 (1.5 each).
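You can confirm these averages with a short Python sketch. It assumes 1-D data with distinct values and averages only over each point's k nearest neighbors. The helper names are hypothetical.

```python
data = [1, 2, 3, 9]
k = 2

def neighbors(p, points, k):
    # k nearest neighbors of p (stable sort keeps dataset order for ties)
    return sorted((o for o in points if o != p), key=lambda o: abs(p - o))[:k]

def k_distance(p, points, k):
    # Distance to the k-th (last) neighbor in the sorted list
    return abs(p - neighbors(p, points, k)[-1])

def avg_reach_dist(p, points, k):
    # Mean of reach-dist(p, o) = max(k-dist(o), d(p, o)) over p's k neighbors
    nbrs = neighbors(p, points, k)
    return sum(max(k_distance(o, points, k), abs(p - o)) for o in nbrs) / len(nbrs)

for p in data:
    print(p, avg_reach_dist(p, data, k))
# prints 1 1.5, then 2 2.0, 3 1.5, 9 6.5
```

Point 2 gets 2.0 because both of its neighbors (1 and 3) have a k-distance of 2. Its real distance of 1 to each of them is floored to 2.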

This is not that intuitive to me!

Step 3 – LRD and the LOF Score

The final step is kind of a “normalization” to find an anomaly score.

First, we define the LRD, Local Reachability Density, which is simply the inverse of the average reachability distance.

And the final LOF score is calculated as:

LOF(p) = [average of LRD(o) over the k nearest neighbors o of p] / LRD(p)

So, LOF compares the density of a point to the density of its neighbors.

Interpretation:

- If LRD(p) ≈ LRD of its neighbors, then LOF ≈ 1.

- If LRD(p) is much smaller, then LOF >> 1, so p is in a sparse region.

- If LRD(p) is much larger, then LOF < 1, so p is in a denser pocket than its neighbors.
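Putting the three steps together, here is a minimal pure-Python sketch of LRD and LOF for 1, 2, 3, 9 with k = 2. It assumes 1-D data with distinct values. With duplicate points, an average reachability distance could be 0, and the inverse would divide by zero. The helper names `neighbors`, `k_distance`, `lrd` and `lof` are hypothetical.

```python
data = [1, 2, 3, 9]
k = 2

def neighbors(p, points, k):
    # k nearest neighbors of p (stable sort keeps dataset order for ties)
    return sorted((o for o in points if o != p), key=lambda o: abs(p - o))[:k]

def k_distance(p, points, k):
    return abs(p - neighbors(p, points, k)[-1])

def lrd(p, points, k):
    # Local Reachability Density: inverse of the average reachability distance
    nbrs = neighbors(p, points, k)
    avg = sum(max(k_distance(o, points, k), abs(p - o)) for o in nbrs) / len(nbrs)
    return 1.0 / avg

def lof(p, points, k):
    # Average density of p's neighbors divided by p's own density
    nbrs = neighbors(p, points, k)
    return sum(lrd(o, points, k) for o in nbrs) / len(nbrs) / lrd(p, points, k)

for p in data:
    print(p, round(lof(p, data, k), 3))
# roughly: 1 → 0.875, 2 → 1.333, 3 → 0.875, 9 → 3.792
```

These values follow the interpretation rules: 9 is far above 1, 1 and 3 are slightly below 1, and 2 is slightly above 1.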

I also made a version with more intermediate steps and shorter formulas.

Understanding What “Anomaly” Means in Unsupervised Models

In unsupervised learning, there is no ground truth. And this is exactly where things can become tricky.

We do not have labels.

We do not have the “correct answer”.

We only have the structure of the data.

Take this tiny sample:

1, 2, 3, 7, 8, 12

(I also have the copyright on it.)

If you look at it intuitively, which one feels like an anomaly?

Personally, I would say 12.

Now let us look at the results. LOF says the outlier is 7.

(And you can notice that with k-distance, we would say that it is 12.)
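You can reproduce this disagreement with the same pure-Python sketch on the second dataset, still with k = 2 (an assumption that matches the first example). The helper names are hypothetical, as before.

```python
data = [1, 2, 3, 7, 8, 12]
k = 2

def neighbors(p, points, k):
    # k nearest neighbors of p (stable sort keeps dataset order for ties)
    return sorted((o for o in points if o != p), key=lambda o: abs(p - o))[:k]

def k_distance(p, points, k):
    return abs(p - neighbors(p, points, k)[-1])

def lrd(p, points, k):
    # Inverse of the average reachability distance over p's k neighbors
    nbrs = neighbors(p, points, k)
    return len(nbrs) / sum(max(k_distance(o, points, k), abs(p - o)) for o in nbrs)

def lof(p, points, k):
    # Average neighbor density divided by p's own density
    nbrs = neighbors(p, points, k)
    return sum(lrd(o, points, k) for o in nbrs) / len(nbrs) / lrd(p, points, k)

k_dists = {p: k_distance(p, data, k) for p in data}
lofs = {p: lof(p, data, k) for p in data}

print("largest k-distance:", max(k_dists, key=k_dists.get))  # 12
print("largest LOF:", max(lofs, key=lofs.get))               # 7
```

12 has the largest local radius (5). However, its neighbors 7 and 8 are almost as sparse as it is, so its LOF stays close to 1. Point 7, on the other hand, has point 3 as a neighbor, and 3 sits in the much denser group 1, 2, 3, which pushes LOF(7) up to about 1.78.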

Now, we can compare Isolation Forest and LOF side by side.

On the left, with the dataset 1, 2, 3, 9, both methods agree:

9 is the clear outlier.

Isolation Forest gives it the lowest score,

and LOF gives it the highest LOF value.

Looking closer, Isolation Forest gives 1, 2 and 3 the same score, while LOF gives 2 a higher score (about 1.33, versus 0.875 for 1 and 3, with k = 2). This is what we already noticed.
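To cross-check the hand calculation against a library, here is a sketch using scikit-learn's `LocalOutlierFactor`. It assumes scikit-learn and NumPy are installed. scikit-learn stores the negated score in `negative_outlier_factor_`, so we flip the sign. It also adds a tiny constant (1e-10) when inverting the average reachability distance, which does not change the values at this precision.

```python
import numpy as np
from sklearn.neighbors import LocalOutlierFactor

# One row per point, one feature column
X = np.array([1, 2, 3, 9], dtype=float).reshape(-1, 1)

model = LocalOutlierFactor(n_neighbors=2)
labels = model.fit_predict(X)              # -1 = outlier, 1 = inlier
scores = -model.negative_outlier_factor_   # flip the sign to get the LOF values

print(np.round(scores, 3))
print(labels)
```

With the default `contamination="auto"`, scikit-learn flags points whose LOF is above 1.5, so here only 9 is labeled -1. Point 2 (about 1.33) stays an inlier.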

With the dataset 1, 2, 3, 7, 8, 12, the story changes.

- Isolation Forest points to 12 as the most isolated point. This matches the intuition: 12 is far from everyone.

- LOF, however, highlights 7 instead.

So who is right?

It is difficult to say.

In practice, we first need to agree with business teams on what “anomaly” actually means in the context of our data.

Because in unsupervised learning, there is no single truth.

There is only the definition of “anomaly” that each algorithm uses.

This is why it is extremely important to understand

how the algorithm works, and what kind of anomalies it is designed to detect.

Only then can you decide whether LOF, k-distance, or Isolation Forest is the right choice for your specific situation.

And this is the whole message of unsupervised learning:

Different algorithms look at the data differently.

There is no “true” outlier.

Only the definition of what an outlier means for each model.

This is why understanding how the algorithm works

is more important than the final score it produces.

Conclusion

LOF and Isolation Forest both detect anomalies, but they look at the data through completely different lenses.

- k-distance captures how far a point must travel to find its neighbors.

- LOF compares local densities.

- Isolation Forest isolates points using random splits.

And even on very simple datasets, these methods can disagree.

One algorithm may flag a point as an outlier, while another highlights a completely different one.

And this is the key message:

In unsupervised learning, there is no “true” outlier.

Each algorithm defines anomalies according to its own logic.

This is why understanding how a method works is more important than the number it produces.

Only then can you choose the right algorithm for the right situation, and interpret the results with confidence.