In the previous article, we explored distance-based clustering with K-Means.

We can go further: to improve how the distance is measured, we add the variance, which gives the Mahalanobis distance.

So, if k-Means is the unsupervised version of the Nearest Centroid classifier, then the natural question is:

What is the unsupervised version of QDA?

This means that, as in QDA, each cluster is now described not only by its mean but also by its variance (and by its covariances as soon as there are two or more features). But here everything is learned without labels.

So you see the idea, right?

And well, the name of this model is the Gaussian Mixture Model (GMM)…

GMM and the names of these models…

As is often the case, the names of models exist for historical reasons. They were not designed to highlight the connections between models, especially when the models were not developed together.

Different researchers, different periods, different use cases… and we end up with names that sometimes hide the true structure behind the ideas.

Here, the name “Gaussian Mixture Model” simply means that the data is represented as a mixture of several Gaussian distributions.

If we follow the same naming logic as k-Means, it would have been clearer to call it something like k-Gaussian Mixture.

Because, in practice, instead of using only the means, we also use the variances. We could just use the Mahalanobis distance, or another weighted distance based on both the means and the variances. But the Gaussian distribution gives us probabilities, which are easier to interpret.

So we choose a number k of Gaussian components.
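For reference, here are the standard textbook formulas behind this choice. The symbols $\pi_j$, $\mu_j$ and $\sigma_j^2$ are notation introduced here, not names taken from the spreadsheet. A single Gaussian in one dimension has the density

$$
\mathcal{N}(x \mid \mu, \sigma^2) = \frac{1}{\sqrt{2\pi\sigma^2}} \exp\left(-\frac{1}{2}\,\frac{(x-\mu)^2}{\sigma^2}\right)
$$

and a mixture of $k$ components is a weighted sum of such densities, with weights that sum to 1:

$$
p(x) = \sum_{j=1}^{k} \pi_j \, \mathcal{N}(x \mid \mu_j, \sigma_j^2), \qquad \sum_{j=1}^{k} \pi_j = 1
$$

The ratio $(x-\mu)^2/\sigma^2$ inside the exponential is the squared Mahalanobis distance in one dimension, so the density is a rescaled version of that distance, turned into something we can read as a probability.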

And by the way, GMM is not the only one.

In fact, the entire machine learning framework is actually much more recent than many of the models it contains. Most of these techniques were originally developed in statistics, signal processing, econometrics, or pattern recognition.

Then, much later, the field we now call “machine learning” emerged and regrouped all these models under one umbrella. But the names did not change.

So today we use a mixture of vocabularies coming from different eras, different communities, and different intentions.

This is why the relationships between models are not always obvious when you look only at the names.

If we had to rename everything with a modern, unified machine-learning style, the landscape would actually be much clearer:

- GMM would become k-Gaussian Clustering

- QDA would become Nearest Gaussian Classifier

- LDA would become, well, Nearest Gaussian Classifier with the same covariance across classes.

And suddenly, all the links appear:

- k-Means ↔ Nearest Centroid

- GMM ↔ Nearest Gaussian (QDA)

This is why GMM is so natural after K-Means. If K-Means groups points by their closest centroid, then GMM groups them by their closest Gaussian shape.
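To see how "closest centroid" and "closest Gaussian" can disagree, here is a minimal Python sketch. The two clusters, their parameters, and the test point are made-up values chosen for illustration, and `gaussian_pdf` is a helper defined here, not something from the spreadsheet.

```python
import math

def gaussian_pdf(x, mean, var):
    """Density of a 1D Gaussian with the given mean and variance."""
    return math.exp(-((x - mean) ** 2) / (2 * var)) / math.sqrt(2 * math.pi * var)

# Hypothetical clusters: A is narrow, B is wide.
means = [0.0, 10.0]
variances = [1.0, 25.0]
x = 4.0

# k-Means view: pick the cluster whose mean is closest.
nearest_centroid = min(range(2), key=lambda j: abs(x - means[j]))

# GMM view (equal weights assumed): pick the Gaussian with the highest density.
densities = [gaussian_pdf(x, means[j], variances[j]) for j in range(2)]
most_likely_gaussian = max(range(2), key=lambda j: densities[j])

print(nearest_centroid, most_likely_gaussian)
```

The point is 4 units from the mean of A and 6 units from the mean of B, so the nearest centroid is A (index 0). But 4 units is four standard deviations for A and only 1.2 standard deviations for B, so the closest Gaussian is B (index 1).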

Why this entire section to discuss the names?

Well, the truth is that, since we already covered the k-means algorithm and already made the transition from the Nearest Centroid classifier to QDA, we already know all about this model, and the training algorithm will not change…

And what is the NAME of this training algorithm?

Oh, Lloyd’s algorithm.

Actually, before it was called k-means, it was simply known as Lloyd’s algorithm, proposed by Stuart Lloyd in 1957 (and only published in a journal in 1982). The name “k-means” came later: it was introduced by James MacQueen in 1967.

And this algorithm manipulated only the means, so we need another name, right?

You see where this is going: the Expectation-Maximization (EM) algorithm!

EM is simply the general form of Lloyd’s idea. Lloyd updates the means, EM updates everything: means, variances, weights, and probabilities.

So, you already know everything about GMM!

But since my article is called “GMM in Excel”, I cannot end my article here…

GMM in 1 Dimension

Let us start with this simple dataset, the same we used for k-means: 1, 2, 3, 11, 12, 13

Hmm, with this symmetric dataset, the two Gaussians will end up with the same variance. So do try other numbers in Excel!

And we naturally want 2 clusters.

Here are the different steps.

Initialization

We start with guesses for means, variances, and weights.

Expectation step (E-step)

For each point, we compute how likely it is to belong to each Gaussian.
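The E-step can be sketched in a few lines of Python. This is a minimal illustration, not a transcription of the spreadsheet: the function names are introduced here, and the initial means, variances, and weights are assumed values.

```python
import math

def gaussian_pdf(x, mean, var):
    """Density of a 1D Gaussian with the given mean and variance."""
    return math.exp(-((x - mean) ** 2) / (2 * var)) / math.sqrt(2 * math.pi * var)

def e_step(data, means, variances, weights):
    """Return one row per point: its probability of belonging to each Gaussian."""
    responsibilities = []
    for x in data:
        # Weighted density of the point under each Gaussian
        weighted = [w * gaussian_pdf(x, m, v)
                    for m, v, w in zip(means, variances, weights)]
        total = sum(weighted)
        # Normalize so that the probabilities of one point sum to 1
        responsibilities.append([p / total for p in weighted])
    return responsibilities

data = [1, 2, 3, 11, 12, 13]
# Initial guesses (assumed values, not the ones used in the spreadsheet)
resp = e_step(data, means=[3.0, 9.0], variances=[9.0, 9.0], weights=[0.5, 0.5])
for x, row in zip(data, resp):
    print(x, [round(r, 3) for r in row])
```

Each row sums to 1. Even with rough initial guesses, the points 1, 2, 3 already lean toward the first Gaussian and 11, 12, 13 toward the second.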

Maximization step (M-step)

Using these probabilities, we update the means, variances, and weights.
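Here is a minimal Python sketch of the M-step. The function name `m_step` is introduced here, and the probabilities fed into it are an assumption: they are fully certain (0 or 1) so that the result is easy to check by hand.

```python
def m_step(data, responsibilities, k):
    """Update means, variances and weights from the membership probabilities."""
    means, variances, weights = [], [], []
    n = len(data)
    for j in range(k):
        r_j = [row[j] for row in responsibilities]
        total = sum(r_j)  # "effective" number of points in Gaussian j
        mean = sum(r * x for r, x in zip(r_j, data)) / total
        var = sum(r * (x - mean) ** 2 for r, x in zip(r_j, data)) / total
        means.append(mean)
        variances.append(var)
        weights.append(total / n)
    return means, variances, weights

data = [1, 2, 3, 11, 12, 13]
# Illustrative probabilities: every point is fully assigned to one Gaussian
resp = [[1, 0], [1, 0], [1, 0], [0, 1], [0, 1], [0, 1]]
means, variances, weights = m_step(data, resp, k=2)
print(means, variances, weights)
```

With 0/1 probabilities, the mean update is exactly the centroid update of k-means (means 2 and 12 here). The M-step simply adds a variance (2/3 for each group) and a weight (0.5 each) on top. With soft probabilities, the same formulas become weighted averages.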

Iteration

We repeat E-step and M-step until the parameters stabilise.
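Putting the two steps in a loop gives the whole algorithm. The sketch below is one possible way to write it, under assumptions made here: the function name `fit_gmm_1d`, the initial guesses, and the stopping rule (stop when no mean moves by more than `tol`) are illustrative choices.

```python
import math

def fit_gmm_1d(data, means, variances, weights, max_iter=200, tol=1e-9):
    """EM for a 1D Gaussian mixture; stops when the means stop moving."""
    k, n = len(means), len(data)
    n_done = 0
    for iteration in range(max_iter):
        n_done = iteration + 1
        # E-step: membership probabilities of each point
        resp = []
        for x in data:
            p = [w * math.exp(-((x - m) ** 2) / (2 * v)) / math.sqrt(2 * math.pi * v)
                 for m, v, w in zip(means, variances, weights)]
            s = sum(p)
            resp.append([pj / s for pj in p])
        # M-step: weighted means, variances and weights
        totals = [sum(row[j] for row in resp) for j in range(k)]
        new_means = [sum(row[j] * x for row, x in zip(resp, data)) / totals[j]
                     for j in range(k)]
        variances = [sum(row[j] * (x - new_means[j]) ** 2
                         for row, x in zip(resp, data)) / totals[j]
                     for j in range(k)]
        weights = [t / n for t in totals]
        # Convergence check on the largest change in the means
        shift = max(abs(a - b) for a, b in zip(new_means, means))
        means = new_means
        if shift < tol:
            break
    return means, variances, weights, n_done

data = [1, 2, 3, 11, 12, 13]
# Initial guesses (assumed values, not the ones used in the spreadsheet)
means, variances, weights, n_done = fit_gmm_1d(
    data, means=[3.0, 9.0], variances=[9.0, 9.0], weights=[0.5, 0.5])
print(means, variances, weights, n_done)
```

On this dataset the means settle at about 2 and 12, both variances at about 2/3, and both weights at 0.5.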

Each step is extremely simple once the formulas are visible.

You will see that EM is nothing more than updating averages, variances, and probabilities.

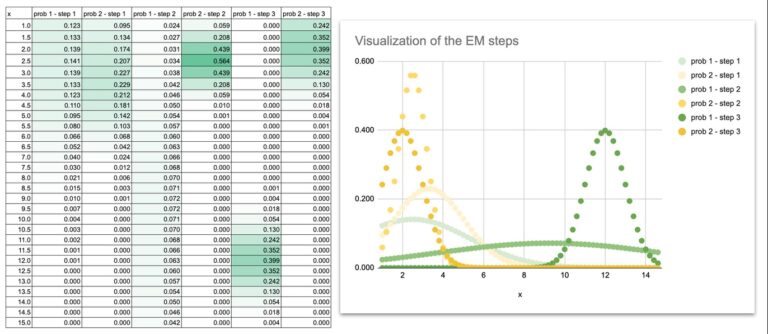

We can also do some visualization to see how the Gaussian curves move during the iterations.

At the beginning, the two Gaussian curves overlap heavily because the initial means and variances are just guesses.

The curves slowly separate, adjust their widths, and finally settle exactly on the two groups of points.

By plotting the Gaussian curves at each iteration, you can literally watch the model learn:

- the means slide toward the centers of the data

- the variances shrink to match the spread of each group

- the overlap disappears

- the final shapes match the structure of the dataset

This visual evolution is extremely helpful for intuition. Once you see the curves move, EM is no longer an abstract algorithm. It becomes a dynamic process you can follow step by step.

GMM in 2 Dimensions

The logic is exactly the same as in 1D. Nothing new conceptually. We simply extend the formulas…

Instead of having one feature per point, we now have two.

Each Gaussian must now learn:

- a mean for x1

- a mean for x2

- a variance for x1

- a variance for x2

- AND a covariance term between the two features.
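The five quantities in this list can be computed with the same weighted averages as in 1D. Here is a minimal Python sketch for a single Gaussian. The function name, the three points, and the membership probabilities are hypothetical values chosen for illustration.

```python
def weighted_gaussian_2d(points, resp):
    """Weighted means, variances and covariance of 2D points for one Gaussian."""
    total = sum(resp)
    m1 = sum(r * x1 for r, (x1, _) in zip(resp, points)) / total
    m2 = sum(r * x2 for r, (_, x2) in zip(resp, points)) / total
    v1 = sum(r * (x1 - m1) ** 2 for r, (x1, _) in zip(resp, points)) / total
    v2 = sum(r * (x2 - m2) ** 2 for r, (_, x2) in zip(resp, points)) / total
    # The only new ingredient compared with 1D: the cross term
    c12 = sum(r * (x1 - m1) * (x2 - m2) for r, (x1, x2) in zip(resp, points)) / total
    return m1, m2, v1, v2, c12

points = [(1, 1), (2, 3), (3, 2)]  # hypothetical data
resp = [1.0, 1.0, 1.0]             # every point fully belongs to this Gaussian
m1, m2, v1, v2, c12 = weighted_gaussian_2d(points, resp)
print(m1, m2, v1, v2, c12)
```

For these three points, both means are 2, both variances are 2/3, and the covariance is 1/3.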

Once you write the formulas in Excel, you will see that the process stays exactly the same:

Well, the truth is that if you look at the screenshot, you might think: “Wow, the formula is so long!” And this is not all of it.

But do not be fooled. The formula is long only because we write out the 2-dimensional Gaussian density explicitly:

- one part for the distance in x1

- one part for the distance in x2

- the covariance term

- the normalization constant

Nothing more.

It is simply the density formula expanded cell by cell.

Long to type, but perfectly understandable once you see the structure: a weighted distance, inside an exponential, divided by a normalization constant that involves the square root of the determinant of the covariance matrix.
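Written in Python instead of Excel cells, the expanded 2-dimensional density looks like this. It is a sketch of the standard bivariate Gaussian formula. The function and argument names are chosen here, with `v1`, `v2` for the two variances and `c12` for the covariance.

```python
import math

def gaussian_pdf_2d(x1, x2, m1, m2, v1, v2, c12):
    """Bivariate Gaussian density, written out term by term."""
    d1, d2 = x1 - m1, x2 - m2
    det = v1 * v2 - c12 ** 2  # determinant of the covariance matrix
    # Weighted (Mahalanobis) squared distance: x1 part, covariance part, x2 part
    q = (v2 * d1 ** 2 - 2 * c12 * d1 * d2 + v1 * d2 ** 2) / det
    # Exponential of the distance, divided by the normalization constant
    return math.exp(-0.5 * q) / (2 * math.pi * math.sqrt(det))

# At the mean of a standard Gaussian, the density is 1 / (2 * pi)
peak = gaussian_pdf_2d(0, 0, 0, 0, 1, 1, 0)
print(peak)
```

A quick sanity check is to set the covariance to 0: the result must then equal the product of two 1D Gaussian densities, one for each feature.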

So yes, the formula looks big… but the idea behind it is extremely simple.

Conclusion

K-Means gives hard boundaries.

GMM gives probabilities.

Once the EM formulas are written in Excel, the model becomes simple to follow: the means move, the variances adjust, and the Gaussians naturally settle around the data.

GMM is just the next logical step after k-Means, offering a more flexible way to represent clusters and their shapes.