of my Machine Learning Advent Calendar.

Before closing this series, I would like to sincerely thank everyone who followed it, shared feedback, and supported it, in particular the Towards Data Science team.

Ending this calendar with Transformers is not a coincidence. The Transformer is not just a fancy name. It is the backbone of modern Large Language Models.

There is a lot to say about RNNs, LSTMs, and GRUs. They played a key historical role in sequence modeling. But today, modern LLMs are overwhelmingly based on Transformers.

The name Transformer itself marks a rupture. From a naming perspective, the authors could have chosen something like Attention Neural Networks, in line with Recurrent Neural Networks or Convolutional Neural Networks. As a Cartesian mind, I would have appreciated a more consistent naming structure. But naming aside, the conceptual shift introduced by Transformers fully justifies the distinction.

Transformers can be used in different ways. Encoder architectures are commonly used for classification. Decoder architectures are used for next-token prediction, and therefore for text generation.

In this article, we will focus on one core idea only: how the attention matrix transforms input embeddings into something more meaningful.

In the previous article, we introduced 1D Convolutional Neural Networks for text. We saw that a CNN scans a sentence using small windows and reacts when it recognizes local patterns. This approach is already very powerful, but it has a clear limitation: a CNN only looks locally.

Today, we move one step further.

The Transformer answers a fundamentally different question.

What if every word could look at all the other words at once?

1. The same word in two different contexts

To understand why attention is needed, we will start with a simple idea.

We will use two different input sentences, both containing the word mouse, but used in different contexts.

In the first input, mouse appears in a sentence with cat. In the second input, mouse appears in a sentence with keyboard.

At the input level, we deliberately use the same embedding for the word “mouse” in both cases. This is important. At this stage, the model does not know which meaning is intended.

The embedding for mouse contains both:

- a strong animal component

- a strong tech component

This ambiguity is intentional. Without context, mouse could refer to an animal or to a computer device.

All other words provide clearer signals. Cat is strongly animal. Keyboard is strongly tech. Words like “and” or “are” mainly carry grammatical information. Words like friends and useful are weakly informative on their own.

At this point, nothing in the input embeddings allows the model to decide which meaning of mouse is correct.
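To make this setup concrete, here is a minimal sketch of what such input embeddings could look like. The three dimensions (animal, tech, grammar), the numeric values, and the two sentences "cat and mouse are friends" and "keyboard and mouse are useful" are assumptions chosen for illustration; they are not the values used in the figures.

```python
import numpy as np

# Hypothetical 3-dimensional embeddings: [animal, tech, grammar].
# The numbers are made up for illustration only.
EMBEDDINGS = {
    "cat":      [1.0, 0.0, 0.0],
    "keyboard": [0.0, 1.0, 0.0],
    "mouse":    [1.0, 1.0, 0.0],  # ambiguous: strong animal AND strong tech
    "and":      [0.0, 0.0, 1.0],
    "are":      [0.0, 0.0, 1.0],
    "friends":  [0.2, 0.0, 0.1],
    "useful":   [0.0, 0.2, 0.1],
}

def embed(sentence):
    """Stack the embedding of each word into a (words x dimensions) matrix."""
    return np.array([EMBEDDINGS[word] for word in sentence.split()])

animal_input = embed("cat and mouse are friends")
tech_input = embed("keyboard and mouse are useful")

# "mouse" is the third word (index 2) in both sentences,
# and its input vector is the same in both.
print(animal_input[2], tech_input[2])
```

Because the embedding is a simple table lookup, the row for mouse cannot depend on its neighbors at this stage.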

In the next chapter, we will see how the attention matrix resolves this ambiguity, step by step.

2. Self-attention: how context is injected into embeddings

2.1 Self-attention, not just attention

We first clarify what kind of attention we are using here. This chapter focuses on self-attention.

Self-attention means that each word looks at the other words of the same input sequence.

In this simplified example, we make an additional pedagogical choice. We assume that Queries and Keys are directly equal to the input embeddings. In other words, there are no learned weight matrices for Q and K in this chapter.

This is a deliberate simplification. It allows us to focus entirely on the attention mechanism, without introducing extra parameters. Similarity between words is computed directly from their embeddings.

Conceptually, this means:

Q = Input

K = Input

Only the Value vectors are used later to propagate information to the output.

In real Transformer models, Q, K, and V are all obtained through learned linear projections. Those projections add flexibility, but they do not change the logic of attention itself. The simplified version shown here captures the core idea.

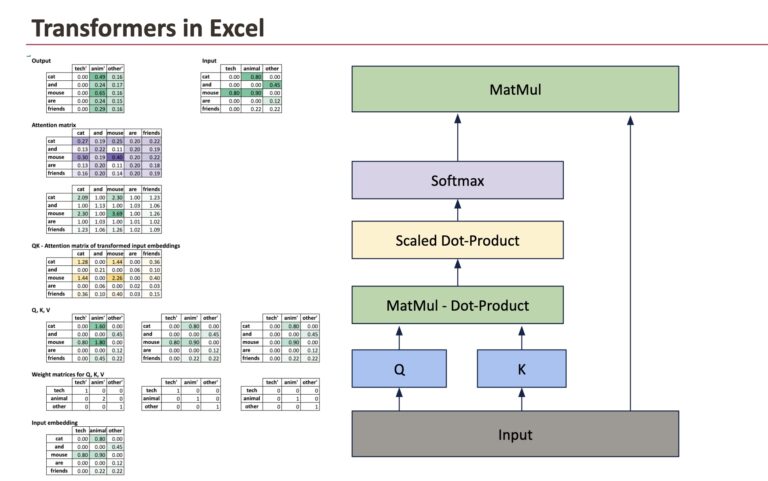

Here is the whole picture that we will decompose.

2.2 From input embeddings to raw attention scores

We start from the input embedding matrix, where each row corresponds to a word and each column corresponds to a semantic dimension.

The first operation is to compare every word with every other word. This is done by computing dot products between Queries and Keys.

Because Queries and Keys are equal to the input embeddings in this example, this step reduces to computing dot products between input vectors.

All dot products are computed at once using a matrix multiplication:

Scores = Input × Inputᵀ

Each cell of this matrix answers a simple question: how similar are these two words, given their embeddings?

At this stage, the values are raw scores. They are not probabilities, and they do not yet have a direct interpretation as weights.
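The score computation can be sketched in a few lines of NumPy. The embedding values below are hypothetical (dimensions: animal, tech, grammar), and the sentence "cat and mouse are friends" is assumed for the example.

```python
import numpy as np

# Hypothetical embeddings [animal, tech, grammar] for "cat and mouse are friends".
words = ["cat", "and", "mouse", "are", "friends"]
X = np.array([
    [1.0, 0.0, 0.0],  # cat
    [0.0, 0.0, 1.0],  # and
    [1.0, 1.0, 0.0],  # mouse
    [0.0, 0.0, 1.0],  # are
    [0.2, 0.0, 0.1],  # friends
])

# With Q = K = X, all pairwise dot products come from one matrix product.
scores = X @ X.T

# Row 2 holds the raw similarity of "mouse" with every word, itself included.
for word, score in zip(words, scores[2]):
    print(f"mouse . {word:<8} = {score:.2f}")
```

Since Queries and Keys are the same matrix here, the score matrix is symmetric: the score of mouse against cat equals the score of cat against mouse.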

2.3 Scaling and normalization

Raw dot products can grow large as the embedding dimension increases. To keep values in a stable range, the scores are divided by the square root of the embedding dimension d.

ScaledScores = Scores / √d

This scaling step is not conceptually deep, but it is practically important. It prevents the next step, the softmax, from becoming too sharp.
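A small experiment can illustrate why the square root appears. The sketch below assumes random vectors whose components are independent with zero mean and unit variance, which is an idealization and not a property of real embeddings. Under that assumption, the spread of the dot products grows roughly like √d, and dividing by √d brings it back to about one.

```python
import numpy as np

rng = np.random.default_rng(0)

def dot_product_std(d, n_pairs=10_000):
    """Return the spread of raw and scaled dot products for dimension d."""
    q = rng.standard_normal((n_pairs, d))
    k = rng.standard_normal((n_pairs, d))
    dots = np.sum(q * k, axis=1)  # one dot product per (q, k) pair
    return dots.std(), (dots / np.sqrt(d)).std()

for d in (4, 64, 256):
    raw, scaled = dot_product_std(d)
    print(f"d={d:>3}  raw std={raw:6.2f}  scaled std={scaled:4.2f}")
```

Larger raw scores push the softmax toward putting almost all of its weight on a single word, which is the sharpness the scaling is meant to avoid.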

Once scaled, a softmax is applied row by row. This converts the scaled scores into positive values that sum to one within each row.

The result is the attention matrix.

Each row of this matrix describes how much attention a given word pays to every word in the sentence, itself included.
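Scaling and softmax together can be sketched as follows. The embeddings are hypothetical values (dimensions: animal, tech, grammar) for the assumed sentence "cat and mouse are friends", and the `softmax` helper is written here for the example.

```python
import numpy as np

def softmax(scores):
    """Row-wise softmax; subtracting the row max avoids overflow in exp."""
    shifted = scores - scores.max(axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)

# Hypothetical embeddings [animal, tech, grammar].
X = np.array([
    [1.0, 0.0, 0.0],  # cat
    [0.0, 0.0, 1.0],  # and
    [1.0, 1.0, 0.0],  # mouse
    [0.0, 0.0, 1.0],  # are
    [0.2, 0.0, 0.1],  # friends
])

d = X.shape[1]                          # embedding dimension
scaled_scores = (X @ X.T) / np.sqrt(d)  # Scores / sqrt(d)
attention = softmax(scaled_scores)      # each row becomes a set of weights

print(attention.round(2))
print(attention.sum(axis=1))  # every row sums to one
```

Unlike the raw score matrix, the attention matrix is generally not symmetric, because each row is normalized separately.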

2.4 Interpreting the attention matrix

The attention matrix is the central object of self-attention.

For a given word, its row in the attention matrix answers the question: when updating this word, which other words matter, and how much?

For example, the row corresponding to mouse assigns higher weights to words that are semantically related in the current context. In the sentence with cat and friends, mouse attends more to animal-related words. In the sentence with keyboard and useful, it attends more to technical words.

The mechanism is identical in both cases. Only the surrounding words change the outcome.
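To see this on numbers, we can print the attention row of mouse in both sentences. This is a sketch with hypothetical embeddings (dimensions: animal, tech, grammar) and assumed sentences; the `attention_matrix` helper is defined only for this example.

```python
import numpy as np

# Hypothetical embeddings [animal, tech, grammar].
EMBEDDINGS = {
    "cat":      [1.0, 0.0, 0.0],
    "keyboard": [0.0, 1.0, 0.0],
    "mouse":    [1.0, 1.0, 0.0],
    "and":      [0.0, 0.0, 1.0],
    "are":      [0.0, 0.0, 1.0],
    "friends":  [0.2, 0.0, 0.1],
    "useful":   [0.0, 0.2, 0.1],
}

def attention_matrix(words):
    """Simplified self-attention weights with Q = K = input embeddings."""
    X = np.array([EMBEDDINGS[word] for word in words])
    scaled = (X @ X.T) / np.sqrt(X.shape[1])
    exp = np.exp(scaled - scaled.max(axis=1, keepdims=True))
    return exp / exp.sum(axis=1, keepdims=True)

for sentence in ("cat and mouse are friends", "keyboard and mouse are useful"):
    words = sentence.split()
    mouse_row = attention_matrix(words)[words.index("mouse")]
    print(sentence)
    for word, weight in zip(words, mouse_row):
        print(f"  mouse -> {word:<8} {weight:.2f}")
```

With these made-up values, mouse gives more weight to cat than to the grammatical words in the first sentence, and more weight to keyboard in the second.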

2.5 From attention weights to output embeddings

The attention matrix itself is not the final result. It is a set of weights.

To produce the output embeddings, we combine these weights with the Value vectors.

Output = Attention × V

In this simplified example, the Value vectors are taken directly from the input embeddings. Each output word vector is therefore a weighted average of the input vectors, with weights given by the corresponding row of the attention matrix.
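The weighted-average reading can be checked directly. In this sketch the embeddings are hypothetical (dimensions: animal, tech, grammar), the sentence "cat and mouse are friends" is assumed, and V is simply set equal to the input as in the simplified setup.

```python
import numpy as np

# Hypothetical embeddings [animal, tech, grammar].
X = np.array([
    [1.0, 0.0, 0.0],  # cat
    [0.0, 0.0, 1.0],  # and
    [1.0, 1.0, 0.0],  # mouse
    [0.0, 0.0, 1.0],  # are
    [0.2, 0.0, 0.1],  # friends
])
V = X  # simplified setup: Values are the input embeddings

scaled = (X @ X.T) / np.sqrt(X.shape[1])
exp = np.exp(scaled - scaled.max(axis=1, keepdims=True))
attention = exp / exp.sum(axis=1, keepdims=True)

# Output = Attention x V, for all words at once.
output = attention @ V

# The same result for "mouse" (row 2), written as an explicit weighted average.
mouse = 2
manual = sum(weight * value for weight, value in zip(attention[mouse], V))

print(output[mouse].round(3))
print(np.allclose(manual, output[mouse]))
```

The matrix product is only a compact way of computing one weighted average per word.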

For a word like mouse, this means that its final representation becomes a mixture of:

- its own embedding

- the embeddings of the words it attends to most

This is the precise moment where context is injected into the representation.

At the end of self-attention, the embeddings are no longer ambiguous.

The word mouse no longer has the same representation in both sentences. Its output vector reflects its context. In one case, it behaves like an animal. In the other, it behaves like a technical object.

Nothing in the embedding table changed. What changed is how information was combined across words.

This is the core idea of self-attention, and the foundation on which Transformer models are built.

If we now compare the two examples, cat and mouse on the left and keyboard and mouse on the right, the effect of self-attention becomes explicit.

In both cases, the input embedding of mouse is identical. Yet the final representation differs. In the sentence with cat, the output embedding of mouse is dominated by the animal dimension. In the sentence with keyboard, the technical dimension becomes more prominent. The difference comes entirely from how attention redistributed weights across words before mixing the values.

This comparison highlights the role of self-attention: it does not change words in isolation, but reshapes their representations by taking the full context into account.
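The comparison can be reproduced with a short sketch. The embeddings, the two sentences, and the `self_attention` helper are assumptions made for this example, with Q, K, and V all equal to the input as in the simplified setup.

```python
import numpy as np

# Hypothetical embeddings [animal, tech, grammar].
EMBEDDINGS = {
    "cat":      [1.0, 0.0, 0.0],
    "keyboard": [0.0, 1.0, 0.0],
    "mouse":    [1.0, 1.0, 0.0],
    "and":      [0.0, 0.0, 1.0],
    "are":      [0.0, 0.0, 1.0],
    "friends":  [0.2, 0.0, 0.1],
    "useful":   [0.0, 0.2, 0.1],
}

def self_attention(sentence):
    """Simplified self-attention: Q = K = V = input embeddings."""
    X = np.array([EMBEDDINGS[word] for word in sentence.split()])
    scaled = (X @ X.T) / np.sqrt(X.shape[1])
    exp = np.exp(scaled - scaled.max(axis=1, keepdims=True))
    attention = exp / exp.sum(axis=1, keepdims=True)
    return attention @ X

# "mouse" is at index 2 in both sentences.
mouse_with_cat = self_attention("cat and mouse are friends")[2]
mouse_with_keyboard = self_attention("keyboard and mouse are useful")[2]

print("input mouse        :", EMBEDDINGS["mouse"])
print("mouse near cat     :", mouse_with_cat.round(3))
print("mouse near keyboard:", mouse_with_keyboard.round(3))
```

With these made-up values, the animal component is the largest one in the first output and the tech component is the largest one in the second, although the input vector for mouse is the same.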

3. Learning how to mix information

3.1 Introducing learned weights for Q, K, and V

Until now, we have focused on the mechanics of self-attention itself. We now introduce an important element: learned weights.

In a real Transformer, Queries, Keys, and Values are not taken directly from the input embeddings. Instead, they are produced by learned linear transformations.

For each word embedding, the model computes:

Q = Input × W_Q

K = Input × W_K

V = Input × W_V

These weight matrices are learned during training.

At this stage, we usually keep the same dimensionality. The input embeddings, Q, K, V, and the output embeddings all have the same number of dimensions. This makes the role of attention easier to understand: it modifies representations without changing the space they live in.

Conceptually, these weights allow the model to decide:

- which aspects of a word matter for comparison (Q and K)

- which aspects of a word should be transmitted to others (V)
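The projections can be sketched as follows. The weight matrices here are drawn at random as stand-ins for trained weights, so the resulting attention pattern carries no meaning; the embeddings are hypothetical as well. The sketch only shows where W_Q, W_K, and W_V enter the computation.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical embeddings [animal, tech, grammar] for "cat and mouse are friends".
X = np.array([
    [1.0, 0.0, 0.0],  # cat
    [0.0, 0.0, 1.0],  # and
    [1.0, 1.0, 0.0],  # mouse
    [0.0, 0.0, 1.0],  # are
    [0.2, 0.0, 0.1],  # friends
])
d = X.shape[1]

# Random stand-ins for weights that would normally be learned during training.
W_Q = rng.standard_normal((d, d))
W_K = rng.standard_normal((d, d))
W_V = rng.standard_normal((d, d))

Q, K, V = X @ W_Q, X @ W_K, X @ W_V

# Same mechanism as before, applied to the projected vectors.
scaled = (Q @ K.T) / np.sqrt(d)
exp = np.exp(scaled - scaled.max(axis=1, keepdims=True))
attention = exp / exp.sum(axis=1, keepdims=True)
output = attention @ V

print(Q.shape, K.shape, V.shape, output.shape)
```

One visible consequence of separate Q and K projections is that the score matrix is no longer symmetric in general: how much mouse looks at cat can differ from how much cat looks at mouse.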

3.2 What the model actually learns

The attention mechanism itself is fixed. Dot products, scaling, softmax, and matrix multiplications always work the same way. What the model actually learns are the projections.

By adjusting the Q and K weights, the model learns how to measure relationships between words for a given task. By adjusting the V weights, it learns what information should be propagated when attention is high. The structure defines how information flows, while the weights define what information flows.

Because the attention matrix depends on Q and K, it is partially interpretable. We can inspect which words attend to which others and observe patterns that often align with syntax or semantics.

This becomes clear when comparing the same word in two different contexts. In both examples, the word mouse starts with exactly the same input embedding, containing both an animal and a tech component. On its own, it is ambiguous.

What changes is not the word, but how it distributes its attention. In the sentence with cat and friends, attention emphasizes animal-related words. In the sentence with keyboard and useful, attention shifts toward technical words. The mechanism and the weights are identical in both cases, yet the output embeddings differ. The difference comes entirely from how the learned projections interact with the surrounding context.

This is why the attention matrix is worth inspecting: it reveals which relationships the model has learned to consider meaningful for the task.

3.3 Changing the dimensionality on purpose

Nothing, however, forces Q, K, and V to have the same dimensionality as the input.

The Value projection, in particular, can map embeddings into a space of a different size. When this happens, the output embeddings inherit the dimensionality of the Value vectors.

This is not a theoretical curiosity. It is exactly what happens in real models, especially in multi-head attention. Each head operates in its own subspace, often with a smaller dimension, and the results are later concatenated into a larger representation.
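Here is a minimal sketch of that idea, with two heads that each project into a smaller space before their outputs are concatenated. All sizes, the random input, and the random weights are assumptions for illustration, and the final output projection that real models typically apply after concatenation is left out.

```python
import numpy as np

rng = np.random.default_rng(0)

def softmax(scores):
    """Row-wise softmax; subtracting the row max avoids overflow in exp."""
    shifted = scores - scores.max(axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)

def attention_head(X, W_Q, W_K, W_V):
    """One attention head; the output has as many columns as W_V."""
    Q, K, V = X @ W_Q, X @ W_K, X @ W_V
    weights = softmax(Q @ K.T / np.sqrt(K.shape[-1]))
    return weights @ V

# Hypothetical sizes: 5 words, 8 input dimensions, 2 heads of 4 dimensions.
n_words, d_model, n_heads, d_head = 5, 8, 2, 4
X = rng.standard_normal((n_words, d_model))

heads = []
for _ in range(n_heads):
    # Random stand-ins for learned weights, one set per head.
    W_Q, W_K, W_V = (rng.standard_normal((d_model, d_head)) for _ in range(3))
    heads.append(attention_head(X, W_Q, W_K, W_V))

# Each head outputs (5, 4); concatenation gives (5, 8).
multi_head_output = np.concatenate(heads, axis=-1)
print(heads[0].shape, multi_head_output.shape)
```

Each head has its own attention matrix, so different heads can route information between words in different ways.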

So attention can do two things:

- mix information across words

- reshape the space in which this information lives

This flexibility is part of what makes Transformers scale so well.

They do not rely on fixed features. They learn:

- how to compare words

- how to route information

- how to project meaning into different spaces

The attention matrix controls where information flows.

The learned projections control what information flows and how it is represented.

Together, they form the core mechanism behind modern language models.

Conclusion

This Advent Calendar was built around a simple idea: understanding machine learning models by looking at how they actually transform data.

Transformers are a fitting way to close this journey. They do not rely on fixed rules or local patterns, but on learned relationships between all elements of a sequence. Through attention, they turn static embeddings into contextual representations, which is the foundation of modern language models.

Thank you again to everyone who followed this series, shared feedback, and supported it, especially the Towards Data Science team.

Merry Christmas 🎄