use gradient descent to find the optimal values of their weights. Linear regression, logistic regression, neural networks, and large language models all rely on this principle. In the previous articles, we used simple gradient descent because it is easier to show and easier to understand.

The same principle also appears at scale in modern large language models, where training requires adjusting millions or billions of parameters.

However, real training rarely uses the basic version. It is often too slow or too unstable. Modern systems use variants of gradient descent that improve speed, stability, or convergence.

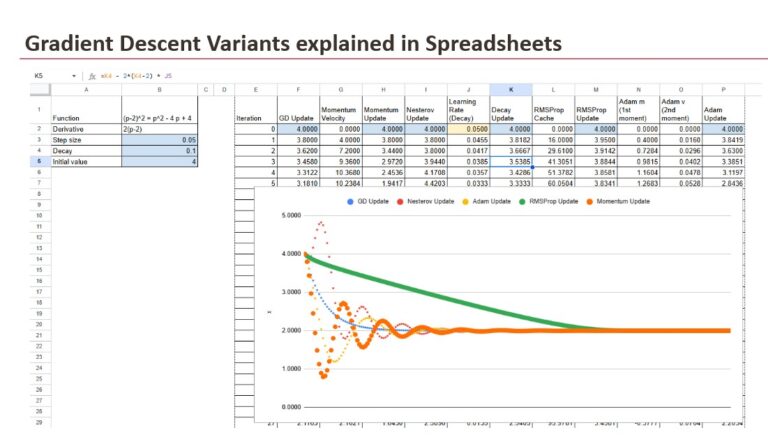

In this bonus article, we focus on these variants. We look at why they exist, what problem they solve, and how they change the update rule. We do not use a dataset here. We use one variable and one function, only to make the behavior visible. The goal is to show the movement, not to train a model.

1. Gradient Descent and the Update Mechanism

1.1 Problem setup

As noted above, we skip the dataset: real data introduces noise that makes the behavior harder to observe directly. Instead, we use a single function:

f(x) = (x – 2)²

We start at x = 4, and the gradient is:

gradient = 2*(x – 2)

This simple setup removes distractions. The objective is not to train a model, but to understand how the different optimisation rules change the movement toward the minimum.
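As a minimal sketch, the setup can be written in a few lines of Python. The names `f`, `gradient`, and `x0` are illustrative choices, not taken from the spreadsheet.

```python
def f(x: float) -> float:
    """Objective from the article: a parabola with its minimum at x = 2."""
    return (x - 2) ** 2


def gradient(x: float) -> float:
    """Derivative of f with respect to x."""
    return 2 * (x - 2)


x0 = 4.0  # starting point used throughout the article
print(f(x0), gradient(x0))  # 4.0 4.0
```

The gradient is positive at the starting point, so every method below has to move x to the left to reach the minimum.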

1.2 The structure behind every update

Every optimisation method that follows in this article is built on the same loop, even when the internal logic becomes more sophisticated.

- First, we read the current value of x.

- Then, we compute the gradient with the expression 2*(x – 2).

- Finally, we update x according to the specific rule defined by the chosen variant.

The destination remains the same, and the negative gradient always points toward the minimum, but the way we move along this direction changes from one method to another. This change in movement is the essence of each variant.
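The three steps above can be sketched as a small generic loop. This is only an illustration: the `run` helper, its parameters, and the learning rate of 0.1 in the example are assumptions made here, not part of the original spreadsheet.

```python
from typing import Callable, List


def gradient(x: float) -> float:
    """Gradient of f(x) = (x - 2)^2."""
    return 2 * (x - 2)


def run(update: Callable[[float, float], float],
        x0: float = 4.0, steps: int = 20) -> List[float]:
    """Apply an update rule repeatedly and record every value of x."""
    x = x0
    xs = [x]
    for _ in range(steps):
        g = gradient(x)    # read the current x and compute the gradient
        x = update(x, g)   # apply the rule of the chosen variant
        xs.append(x)
    return xs


# Example rule: plain gradient descent with a hypothetical learning rate of 0.1
path = run(lambda x, g: x - 0.1 * g)
```

Variants that keep extra state, such as a velocity or a cache, would carry that state alongside x, but the loop itself stays the same.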

1.3 Basic gradient descent as the baseline

Basic gradient descent applies a direct update based on the current gradient and a fixed learning rate:

x = x – lr * 2*(x – 2)

This is the most intuitive form of learning because the update rule is easy to understand and easy to implement. The method moves steadily toward the minimum, but it often does so slowly, and it is sensitive to the learning rate: too small a value makes progress very slow, while too large a value makes x overshoot the minimum or even diverge. It is the foundation on which all other variants are built.
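Here is a minimal sketch of the baseline in Python. The default learning rate of 0.1 and the number of steps are assumed values chosen for illustration.

```python
def gradient_descent(x0=4.0, lr=0.1, steps=10):
    """Basic gradient descent on f(x) = (x - 2)^2 with a fixed learning rate."""
    x = x0
    history = [x]
    for _ in range(steps):
        x = x - lr * 2 * (x - 2)   # update rule from the article
        history.append(x)
    return history


history = gradient_descent()            # steady approach toward x = 2
diverging = gradient_descent(lr=1.1)    # step too large: distance to 2 grows
```

On this function each update multiplies the distance to the minimum by (1 - 2*lr), so with lr = 0.1 the distance shrinks by a factor of 0.8 per step, while any lr above 1 makes it grow.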

2. Learning Rate Decay

Learning Rate Decay does not change the update rule itself. It changes the size of the learning rate across iterations so that the optimisation becomes more stable near the minimum. Large steps help when x is far from the target, but smaller steps are safer when x gets close to the minimum. Decay reduces the risk of overshooting and produces a smoother landing.

There is not a single decay formula. Several schedules exist in practice:

- exponential decay

- inverse decay (the one shown in the spreadsheet)

- step-based decay

- linear decay

- cosine or cyclical schedules

All of these follow the same idea: the learning rate becomes smaller over time, but the pattern depends on the chosen schedule.
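The schedules listed above can be sketched as small functions of the iteration number t. The exact formulas vary between libraries, so the forms and parameter names below (`k`, `drop`, `every`, `total`) are common textbook versions chosen for illustration, not the definitions used by any specific framework.

```python
import math


def exponential_decay(lr0, k, t):
    """lr0 * exp(-k * t): shrinks by a constant factor at every step."""
    return lr0 * math.exp(-k * t)


def inverse_decay(lr0, decay, t):
    """lr0 / (1 + decay * t): the inverse form used in the spreadsheet."""
    return lr0 / (1 + decay * t)


def step_decay(lr0, drop, every, t):
    """Multiply the rate by `drop` once every `every` iterations."""
    return lr0 * drop ** (t // every)


def linear_decay(lr0, total, t):
    """Decrease linearly from lr0 to 0 over `total` iterations."""
    return lr0 * max(0.0, 1 - t / total)


def cosine_decay(lr0, total, t):
    """Follow half a cosine wave from lr0 down to 0 over `total` iterations."""
    return 0.5 * lr0 * (1 + math.cos(math.pi * min(t, total) / total))
```

All five return the full rate lr0 at t = 0; they differ only in how quickly and how smoothly the rate falls afterwards.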

In the spreadsheet example, the decay formula is the inverse form:

lr_t = lr / (1 + decay * iteration)

With the update rule:

x = x – lr_t * 2*(x – 2)

This schedule starts with the full learning rate at the first iteration, then gradually reduces it. At the beginning of the optimisation, the step size is large enough to move quickly. As x approaches the minimum, the learning rate shrinks, stabilising the update and avoiding oscillation.

On the chart, both curves start at x = 4. The fixed learning rate version moves faster at first but approaches the minimum with less stability. The decay version moves more slowly but remains controlled. This confirms that decay does not change the direction of the update. It only changes the step size, and that change affects the behavior.
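The comparison can be reproduced with a short sketch. The values lr = 0.1 and decay = 0.1 are assumptions, and the iteration counter starts at 0 so that the first step uses the full learning rate, as described above.

```python
def decayed_descent(x0=4.0, lr=0.1, decay=0.1, steps=20):
    """Gradient descent on f(x) = (x - 2)^2 with inverse learning rate decay."""
    x = x0
    history = [x]
    for iteration in range(steps):
        lr_t = lr / (1 + decay * iteration)   # full lr when iteration == 0
        x = x - lr_t * 2 * (x - 2)
        history.append(x)
    return history


fixed = decayed_descent(decay=0.0)    # decay = 0 gives the fixed-rate baseline
decayed = decayed_descent(decay=0.1)
```

With these values both paths move toward 2 without crossing it, and the decayed path is always at least as far from the minimum as the fixed one, since its steps are never larger.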

3. Momentum Methods

Gradient Descent moves in the correct direction but can be slow on flat regions. Momentum methods address this by adding inertia to the update.

They accumulate direction over time, which creates faster progress when the gradient remains consistent. This family includes standard Momentum, which builds speed, and Nesterov Momentum, which anticipates the next position to reduce overshooting.

3.1 Standard momentum

Standard momentum introduces the idea of inertia into the learning process. Instead of reacting only to the current gradient, the update keeps a memory of previous gradients in the form of a velocity variable:

velocity = 0.9*velocity + 2*(x – 2)

x = x – lr * velocity

This approach accelerates learning when the gradient remains consistent for multiple iterations, which is especially useful in flat or shallow regions.

However, the same inertia that generates speed can also lead to overshooting the minimum, which creates oscillations around the target.
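A minimal sketch of standard momentum on the same function shows both effects. The learning rate of 0.1 and the initial velocity of 0 are assumed values; the coefficient 0.9 comes from the formula above.

```python
def momentum_descent(x0=4.0, lr=0.1, beta=0.9, steps=50):
    """Gradient descent with standard momentum on f(x) = (x - 2)^2."""
    x, velocity = x0, 0.0
    history = [x]
    for _ in range(steps):
        velocity = beta * velocity + 2 * (x - 2)   # accumulate past gradients
        x = x - lr * velocity
        history.append(x)
    return history


history = momentum_descent()
```

With these values the first steps get larger as the velocity builds up, and x then passes below 2 before swinging back, which is the oscillation described above.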

3.2 Nesterov Momentum

Nesterov Momentum is a refinement of the previous method. Instead of updating the velocity at the current position alone, the method first estimates where the next position will be, and then evaluates the gradient at that anticipated location:

velocity = 0.9*velocity + 2*((x – lr*0.9*velocity) – 2)

x = x – lr * velocity

This look-ahead behaviour reduces the overshooting effect that can appear in regular Momentum, which leads to a smoother approach to the minimum and fewer oscillations. It keeps the benefit of speed while introducing a more careful sense of direction.
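The difference with standard momentum can be sketched by switching the look-ahead on and off. One assumption to flag: the look-ahead point is taken here as x – lr * 0.9 * velocity, that is, the position the momentum term alone would reach under the update x = x – lr * velocity. The learning rate of 0.1 and the `nesterov` flag are also choices made for this illustration.

```python
def descent_with_momentum(nesterov, x0=4.0, lr=0.1, beta=0.9, steps=50):
    """Momentum on f(x) = (x - 2)^2, with an optional Nesterov look-ahead."""
    x, velocity = x0, 0.0
    history = [x]
    for _ in range(steps):
        # Assumed look-ahead: where the momentum term alone would move x
        point = x - lr * beta * velocity if nesterov else x
        velocity = beta * velocity + 2 * (point - 2)
        x = x - lr * velocity
        history.append(x)
    return history


standard = descent_with_momentum(nesterov=False)
lookahead = descent_with_momentum(nesterov=True)
```

Under these assumptions both paths cross the minimum, but the lowest value reached by `lookahead` stays closer to 2 than the lowest value reached by `standard`.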

4. Adaptive Gradient Methods

Adaptive Gradient Methods adjust the update based on information gathered during training. Instead of using a fixed learning rate or relying only on the current gradient, these methods adapt to the scale and behavior of recent gradients.

The goal is to reduce the step size when gradients become unstable and to allow normal progress when the surface is more predictable. This approach is useful in deep networks or irregular loss surfaces, where the gradient can change in magnitude from one step to the next.

4.1 RMSProp (Root Mean Square Propagation)

RMSProp keeps a running average of squared gradients in a cache, and this value influences how aggressively the update is applied:

cache = 0.9*cache + (2*(x – 2))²

x = x – lr / sqrt(cache) * 2*(x – 2)

The cache becomes larger when gradients are unstable, which reduces the update size. When gradients are small, the cache grows more slowly, and the update remains close to the normal step. This makes RMSProp effective in situations where the gradient scale is not consistent, which is common in deep learning models.
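The sketch below follows the two formulas above as written. The learning rate of 0.1, the initial cache of 0, and the small constant `eps` (added to avoid dividing by zero) are assumptions. Many library implementations also weight the new squared gradient by 0.1 so that the cache is a true average; that variant is not shown here.

```python
import math


def rmsprop_descent(x0=4.0, lr=0.1, decay=0.9, eps=1e-8, steps=200):
    """RMSProp-style update on f(x) = (x - 2)^2, following the article's formula."""
    x, cache = x0, 0.0
    history = [x]
    for _ in range(steps):
        g = 2 * (x - 2)
        cache = decay * cache + g ** 2           # decaying memory of squared gradients
        x = x - lr / (math.sqrt(cache) + eps) * g  # eps is an added safeguard
        history.append(x)
    return history


history = rmsprop_descent()
```

Because the cache always contains the current squared gradient, no single step in this sketch can be larger than lr, whatever the size of the gradient.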

4.2 Adam (Adaptive Moment Estimation)

Adam combines the idea of Momentum with the adaptive behaviour of RMSProp. It keeps a moving average of gradients to capture direction, and a moving average of squared gradients to capture scale:

m = 0.9*m + 0.1*(2*(x – 2))

v = 0.999*v + 0.001*(2*(x – 2))²

x = x – lr * m / sqrt(v)

The variable m behaves like the velocity in momentum, and the variable v behaves like the cache in RMSProp. Adam updates both values at every iteration, which allows it to accelerate when progress is clear and shrink the step when the gradient becomes unstable. This balance between speed and control is what makes Adam a standard choice in neural network training.
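A minimal sketch of these updates is shown below. By default it follows the simplified formulas above. The optional `bias_correction` flag adds the correction step used in the standard formulation of Adam, which rescales m and v during the first iterations. The flag, the learning rate of 0.1, and `eps` are assumptions of this sketch.

```python
import math


def adam_descent(x0=4.0, lr=0.1, beta1=0.9, beta2=0.999,
                 eps=1e-8, steps=200, bias_correction=False):
    """Adam-style update on f(x) = (x - 2)^2."""
    x, m, v = x0, 0.0, 0.0
    history = [x]
    for t in range(1, steps + 1):
        g = 2 * (x - 2)
        m = beta1 * m + (1 - beta1) * g        # moving average of gradients
        v = beta2 * v + (1 - beta2) * g ** 2   # moving average of squared gradients
        if bias_correction:
            # Standard Adam divides by (1 - beta^t) to undo the zero initialisation
            m_hat = m / (1 - beta1 ** t)
            v_hat = v / (1 - beta2 ** t)
        else:
            m_hat, v_hat = m, v                # simplified form from the article
        x = x - lr * m_hat / (math.sqrt(v_hat) + eps)
        history.append(x)
    return history


simplified = adam_descent()
corrected = adam_descent(bias_correction=True)
```

With the correction, the very first step has size lr. Without it, the first step is about three times larger, because v starts much closer to zero than m.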

4.3 Other Adaptive Methods

Adam and RMSProp are the most common adaptive methods, but they are not the only ones. Several related methods exist, each with a specific objective:

- AdaGrad adjusts the learning rate based on the full history of squared gradients, but the rate can shrink too quickly.

- AdaDelta modifies AdaGrad by replacing the full history with a decaying window of past squared gradients, so the rate does not keep shrinking.

- Adamax uses the infinity norm and can be more stable for very large gradients.

- Nadam adds Nesterov-style look-ahead behaviour to Adam.

- RAdam attempts to stabilise Adam in the early phase of training.

- AdamW separates weight decay from the gradient update and is recommended in many modern frameworks.

These methods follow the same idea as RMSProp and Adam: adapting the update to the behavior of the gradients. They represent refinements or extensions of the concepts introduced above, and they are part of the same broader family of adaptive optimisation algorithms.
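To illustrate the first item in the list, here is a minimal AdaGrad sketch on the same function. It records the effective learning rate at each step to show how the full history of squared gradients makes it shrink. The learning rate of 0.5, `eps`, and the returned `rates` list are assumptions made for illustration.

```python
import math


def adagrad_descent(x0=4.0, lr=0.5, eps=1e-8, steps=30):
    """AdaGrad on f(x) = (x - 2)^2, also returning the effective learning rates."""
    x, total = x0, 0.0
    history, rates = [x], []
    for _ in range(steps):
        g = 2 * (x - 2)
        total += g ** 2                          # the sum never decays
        rate = lr / (math.sqrt(total) + eps)     # effective learning rate
        x = x - rate * g
        history.append(x)
        rates.append(rate)
    return history, rates


history, rates = adagrad_descent()
```

Since `total` can only grow, the effective rate can only go down, which is the behaviour that AdaDelta, RMSProp, and Adam soften by letting old gradients fade.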

Conclusion

All methods in this article aim for the same goal: moving x toward the minimum. The difference is the path. Gradient Descent provides the basic rule. Momentum adds speed, and Nesterov improves control. RMSProp adapts the step to gradient scale. Adam combines these ideas, and Learning Rate Decay adjusts the step size over time.

Each method addresses a specific limitation of basic gradient descent. None of them replaces the baseline; they extend it. In practice, optimisation is not one rule, but a set of mechanisms that work together.

The goal stays the same. The movement becomes more effective.