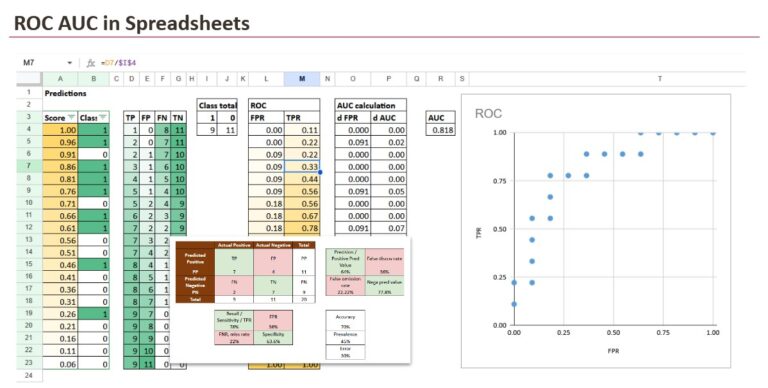

, we will implement AUC in Excel.

AUC is usually used for classification tasks as a performance metric.

But we start with a confusion matrix, because that is where everyone begins in practice. Then we will see why a single confusion matrix is not enough.

And we will also answer these questions:

- AUC means Area Under the Curve, but under which curve?

- Where does that curve come from?

- Why is the area meaningful?

- Is AUC a probability? (Yes, it has a probabilistic interpretation)

1. Why a confusion matrix is not enough

1.1 Scores from models

A classifier will usually give us scores, not final decisions. The decision comes later, when we choose a threshold.

If you read the previous “Advent Calendar” articles, you have already seen that “score” can mean different things depending on the model family:

- Distance-based models (such as k-NN) often compute the proportion of neighbors for a given class (or a distance-based confidence), which becomes a score.

- Density-based models compute a likelihood under each class, then normalize to get a final (posterior) probability.

- Classification Tree-based models often output the proportion of a given class among the training samples inside the leaf (that is why many points share the same score).

- Weight-based models (linear models, kernels, neural networks) compute a weighted sum or a non-linear score, and sometimes apply a calibration step (sigmoid, softmax, Platt scaling, etc.) to map it to a probability.

So no matter the approach, we end up with the same situation: a score per observation.

Then, in practice, we pick a threshold, often 0.5, and we convert scores into predicted classes.

And this is exactly where the confusion matrix enters the story.

1.2 The confusion matrix at one threshold

Once a threshold is chosen, every observation becomes a binary decision:

- predicted positive (1) or predicted negative (0)

From that, we can count four numbers:

- TP (True Positives): predicted 1 and actually 1

- TN (True Negatives): predicted 0 and actually 0

- FP (False Positives): predicted 1 but actually 0

- FN (False Negatives): predicted 0 but actually 1

This 2×2 counting table is the confusion matrix.

Then we typically compute ratios such as:

- Precision = TP / (TP + FP)

- Recall (TPR) = TP / (TP + FN)

- Specificity = TN / (TN + FP)

- FPR = FP / (FP + TN)

- Accuracy = (TP + TN) / Total

So far, everything is clean and intuitive.

But there is a hidden limitation: all these values depend on the threshold. So the confusion matrix evaluates the model at one operating point, not the model itself.

1.3 When one threshold breaks everything

This is a strange example, but it still makes the point very clearly.

Imagine that your threshold is set to 0.50, and all scores are below 0.50.

Then the classifier predicts:

- Predicted Positive: none

- Predicted Negative: everyone

So you get:

- TP = 0, FP = 0

- FN = 10, TN = 10

This is a perfectly valid confusion matrix. It also creates a very strange feeling:

- Precision becomes

#DIV/0!because there are no predicted positives. - Recall is 0% because you did not capture any positive.

- Accuracy is 50%, which sounds “not too bad”, even though the model found nothing.

Nothing is wrong with the confusion matrix. The issue is the question we asked it to answer.

A confusion matrix answers: “How good is the model at this specific threshold?”

If the threshold is poorly chosen, the confusion matrix can make a model look useless, even when the scores contain real separation.

And in your table, the separation is visible: positives often have scores around 0.49, negatives are more around 0.20 or 0.10. The model is not random. Your threshold is simply too strict.

That is why a single threshold is not enough.

What we need instead is a way to evaluate the model across thresholds, not at a single one.

2. ROC

First we have to build the curve, since AUC stands for Area Under a Curve, so we have to understand this curve.

2.1 What ROC means (and what it is)

Because the first question everyone should ask is: AUC under which curve?

The answer is:

AUC is the area under the ROC curve.

But this raises another question.

What is the ROC curve, and where does it come from?

ROC stands for Receiver Operating Characteristic. The name is historical (early signal detection), but the idea is modern and simple: it describes what happens when you change the decision threshold.

The ROC curve is a plot with:

- x-axis: FPR (False Positive Rate)

FPR = FP / (FP + TN) - y-axis: TPR (True Positive Rate), also called Recall or Sensitivity

TPR = TP / (TP + FN)

Each threshold gives one point (FPR, TPR). When you connect all points, you get the ROC curve.

At this stage, one detail matters: the ROC curve is not directly observed; it is constructed by sweeping the threshold over the score ordering.

2.2 Building the ROC curve from scores

For each score, we can use it as a threshold (and of course, we could also define customized thresholds).

For each threshold:

- we compute TP, FP, FN, TN from the confusion matrix

- then we calculate FPR and TPR

So the ROC curve is simply the collection of all these (FPR, TPR) pairs, ordered from strict thresholds to permissive thresholds.

This is exactly what we will implement in Excel.

At this point, it is important to notice something that feels almost too simple. When we build the ROC curve, the actual numeric values of the scores do not matter. What matters is the order.

If one model outputs scores between 0 and 1, another outputs scores between -12 and +5, and a third outputs only two distinct values, ROC works the same way. As long as higher scores tend to correspond to the positive class, the threshold sweep will create the same sequence of decisions.

That is why the first step in Excel is always the same: sort by score from highest to lowest. Once the rows are in the right order, the rest is just counting.

2.3 Reading the ROC curve

In the Excel sheet, the construction becomes very concrete.

You sort observations by Score, from highest to lowest. Then you walk down the list. At each row, you act as if the threshold is set to that score, meaning: everything above is predicted positive.

That lets Excel compute cumulative counts:

- how many positives you have accepted so far

- how many negatives you have accepted so far

From these cumulative counts and the dataset totals, we compute TPR and FPR.

Now every row is one ROC point.

Why the ROC curve looks like a staircase

- When the next accepted row is a positive, TP increases, so TPR increases while FPR stays flat.

- When the next accepted row is a negative, FP increases, so FPR increases while TPR stays flat.

That is why, with real finite data, the ROC curve is a staircase. Excel makes this visible.

2.4 Reference cases you should recognize

A few reference cases help you read the curve immediately:

- Perfect classification: the curve goes straight up (TPR reaches 1 while FPR stays 0), then goes right at the top.

- Random classifier: the curve stays close to the diagonal line from (0,0) to (1,1).

- Inverted ranking: the curve falls “below” the diagonal, and the AUC becomes smaller than 0.5. But in this case we have to change the scores with 1-score. In theory, we can consider this fictive case. In practice, this usually happens when scores are interpreted in the wrong direction or class labels are swapped.

These are not just theory. They are visual anchors. Once you have them, you can interpret any real ROC curve quickly.

3. ROC AUC

Now, with the curve, what can we do?

3.1 Computing the area

Once the ROC curve exists as a list of points (FPR, TPR), the AUC is pure geometry.

Between two consecutive points, the area added is the area of a trapezoid:

- width = change in FPR

- height = average TPR of the two points

In Excel, this becomes a “delta column” approach:

- compute dFPR between consecutive rows

- multiply by the average TPR

- sum everything

Different cases:

- perfect classification: AUC = 1

- random ranking: AUC ≈ 0.5

- inverted ranking: AUC < 0.5

So the AUC is literally the summary of the whole ROC staircase.

3.2. AUC as a probability

AUC is not about choosing a threshold.

It answers a much simpler question:

If I randomly pick one positive example and one negative example, what is the probability that the model assigns a higher score to the positive one?

That is all.

- AUC = 1.0 means perfect ranking (the positive always gets a higher score)

- AUC = 0.5 means random ranking (it is basically a coin flip)

- AUC < 0.5 means the ranking is inverted (negatives tend to get higher scores)

This interpretation is extremely useful, because it explains again this important point:

AUC only depends on score ordering, not on the absolute values.

This is why ROC AUC works even when the “scores” are not perfectly calibrated probabilities. They can be raw scores, margins, leaf proportions, or any monotonic confidence value. As long as higher means “more likely positive”, AUC can evaluate the ranking quality.

Conclusion

A confusion matrix evaluates a model at one threshold, but classifiers produce scores, not decisions.

ROC and AUC evaluate the model across all thresholds by focusing on ranking, not calibration.

In the end, AUC answers a simple question: how often does a positive example receive a higher score than a negative one?

Seen this way, ROC AUC is an intuitive metric, and a spreadsheet is enough to make every step explicit.