on LinkedIn a few days ago saying that a lot of the top engineers are now just using AI to code.

It reached thousands of people and drew quite a few heated opinions. The space is clearly split on this, and the people against it mostly think of it as outsourcing an entire project to a system that can’t build reliable software.

I didn’t have time to respond to every comment, but I think there’s a fundamental misunderstanding about how you can use AI to build today. It may surprise you that a lot of it is still engineering, just on a different level than before.

So let’s walk through how this space has evolved, how to plan before using AI, why judgement and taste still matter, which AI coding tools are winning, and where the bottlenecks still are.

Because software engineering might be changing, but it doesn’t seem to be disappearing.

The space is moving fast

Before we get into how to actually build with these tools, it’s worth understanding how fast things have changed.

Cursor became the first real AI-assisted IDE breakout in 2024, even though it launched in 2023, but getting it to produce something good without leaving behind a trail of errors was not easy.

I struggled a lot even last summer using it.

Many of us also remember the Devin fiasco, the so-called “junior AI engineer” that couldn’t really finish anything on its own (though this was some time ago).

The last few months have been different, and we’ve seen this on social media too.

Spotify publicly claimed its top developers haven’t written a single line of code manually since December. Anthropic’s own internal team reportedly has 80%+ of all deployed code written with AI assistance.

And Andrej Karpathy said that programming changed more in the last two months than it had in years.

Anthropic also found that Claude Opus 4.6 discovered 22 novel vulnerabilities in Firefox in two weeks, 14 of them high-severity, roughly a fifth of Mozilla’s entire 2025 high-severity fix count.

The people who use these tools daily already know they’re getting better. But “getting better” doesn’t mean the engineering work is gone.

You plan, AI codes

So if the tools are this capable, why can’t you just say what you want and have it built? Because the planning, the architecture, and the system thinking are still the hard part.

Think of AI as an assistant, not the architect. You are still the one directing the project, and you need to think it through before you start delegating how it should be built.

The better your overview of the different layers (e.g. frontend, backend, security, infrastructure), the easier it is to instruct it correctly.

If you don’t mention what you want, you usually don’t get it.

This could mean using one agent to research different approaches first: tech stack options, cost and performance tradeoffs, or why you’d pick one language or framework over another.

If you’re building authentication, go do research. Get a brief review of whichever tool you’re considering, whether that’s Cognito, Auth0, or something else, and check whether it actually supports what you need.

This does mean you have to learn some of it on your own.

If you’re storing user data, you might need a CRUD API for it. One agent can build it, document it properly, and then another agent can use that documentation inside another application.

This works much better if you already know how APIs should be structured, how cloud CDKs work, or how deployment pipelines fit together.
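To make the CRUD example concrete, here is a minimal Python sketch of what "structured and documented" could look like. It is an in-memory stand-in, not a real service: the `UserStore` name, its methods, and the record shape are all hypothetical. The docstrings play the role of the documentation a second agent would read.

```python
from dataclasses import dataclass, field
from itertools import count
from typing import Dict, Optional


@dataclass
class UserStore:
    """In-memory stand-in for a user-data CRUD API (hypothetical example)."""

    _rows: Dict[int, dict] = field(default_factory=dict)
    _ids: count = field(default_factory=lambda: count(1))

    def create(self, data: dict) -> int:
        """Store a new record and return its generated id."""
        new_id = next(self._ids)
        self._rows[new_id] = dict(data)
        return new_id

    def read(self, user_id: int) -> Optional[dict]:
        """Return a copy of the record, or None if the id is unknown."""
        row = self._rows.get(user_id)
        return dict(row) if row is not None else None

    def update(self, user_id: int, changes: dict) -> bool:
        """Merge changes into an existing record; False if the id is unknown."""
        if user_id not in self._rows:
            return False
        self._rows[user_id].update(changes)
        return True

    def delete(self, user_id: int) -> bool:
        """Remove a record; False if the id is unknown."""
        return self._rows.pop(user_id, None) is not None
```

Knowing that each operation needs a defined input, a defined return value, and a defined behaviour for a missing id is the kind of structure that makes the hand-off between agents less error-prone.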

The less you specify upfront, the more painful it gets later, when you’re steering the agent with things like “not like that” and “this doesn’t work like I thought it would” (I’m guilty of being this lazy).

Now, you might look at this and think that still sounds like a lot of work.

And honestly, yes, it is still work. A lot of these parts can be outsourced, and that makes things significantly faster, but it is still engineering of some kind.

Boris Cherny, who works on Claude Code, talked about his approach: plan mode first, iterate until the plan is right, then auto-accept execution.

His insight that keeps getting quoted in the tech community is, “Once the plan is good, the code is good.”

So, you think. The AI agent builds.

Then maybe you evaluate it, redirect it, and test it too.

Perhaps we’ll eventually see better orchestrator agents that can help with system design, evaluation, and wireframing, and I’m sure people are already working on this.

But for now, this part still needs a human.

On judgement and taste

People talk about judgement a lot, and taste too, and how this just can’t be delegated to an AI agent. This is essentially about knowing what to ask, when to push back, what looks risky, and having the ability to tell if the outcome is actually any good.

Judgement is basically recognition you build from having been close to the work, and it usually comes with some kind of experience.

People who’ve worked close to software tend to know where things break. They know what to test, what assumptions to question, and can often tell when something is being built badly.

This is also why people say it’s ironic that a lot of the people against AI are software engineers. They have the most to gain from these tools precisely because they already have that judgement.

But I also think people from other spaces, whether that’s product development, technical design, or UX, have developed their own judgement that can transfer over into building with AI.

I do think people who have an affinity for system-level thinking and who can think in failure modes have some kind of upper hand too.

So, you don’t need to have been a developer, but you do need to know what good looks like for the thing you’re trying to build.

But if everything is new, learn to ask a lot of questions.

If you’re building an application, ask an agent to do a preliminary audit of the security of the application, grade each area, give you a short explanation of what each does, and explain what kind of security breach could happen.

If I work in a new space, I make sure to play several agents against each other so I’m not completely blind.

So, the point is to work with the agents rather than blindly outsourcing the entire thinking process to them.

If judgement is knowing what to question, what to prioritize, what is risky, and what is good enough, taste is more your quality bar. It’s sensing when the UX, architecture, or output quality feels off, even if the thing technically works.

But none of this is fixed. Judgement is something you build, not something you’re born with. Taste might be a bit more innate, but should get better with time too.

As I’m self-taught, I’m pretty optimistic that people can jump into this space from other areas and learn fast if they have the affinity for it.

They might also be motivated by other things that may come in handy.

Which AI-assisted tools are winning

I’ve now overloaded you with everything else before getting to the actual AI tools themselves, so let’s run through them and see which one seems to be winning.

Cursor was released in 2023 and held the stage for a long time. Then OpenAI, Anthropic, and Google started pushing their own tools.

Look at the number of mentions of Claude Code, Cursor, and Codex across tech communities over the past year below. It pretty much sums up how the narrative has shifted.

If you do some research on Google Trends, it will show similar trends, though it doesn’t show Cursor’s dip in the middle of last summer.

The standout is obviously Claude Code. It went from a side project inside Anthropic to the single most discussed developer tool in under a year.

The volume of conversation around it dwarfs Cursor, Copilot, and Codex combined in the communities this chart tracks.

It’s fascinating how these platforms that own the LLMs can just grab a space they want to succeed in, and pretty much crush their competitors (of course still subsidizing their own tool at a rate no third-party IDE can match).

But beyond the subsidized token economics of these tools, people shifted from generating code blocks and parts of their codebase to just saying “I stopped opening my IDE.”

So these tools are now allowing us to go from assisted coding to delegated coding.

The fundamental difference people keep pointing to compared with the other tools (like Cursor) is that Claude Code works on your codebase like a colleague you hand work to, rather than sitting inside your editor suggesting code.

People also keep discovering that Claude Code is useful for things that aren’t programming.

I have a friend who organizes his entire 15-person company inside VS Code with Claude Code. None of it is actually code; he just uses the IDE for organisation.

Rate limits are a constant issue, and Claude Code is the tool you’ll run out of fastest each week. I usually run out by Thursday and have to wait until Monday.

This is why we keep several subscriptions, Codex included.

Now maybe it’s a taste thing, but most people I talk to go to Claude Code for most of their work, with Codex being the sidekick.

Claude Code Skills

Let’s briefly mention Skills here too, since they go along with Claude Code.

I think they were made for people to write project-based internal instructions, where you encode the lessons into a skill file and hand it to Claude before it starts working.

These are markdown files (along with scripts, assets, data) that live in your project and should cover anything from how to structure APIs to what your deployment pipeline expects to how to handle edge cases in a particular framework.

But I have found them to be a neat way to transfer knowledge. Say you’re a developer who needs to build a mobile application and you’ve never touched React Native.

If you can find a Skill with best practices built by someone who actually knows what they are doing, you’ll have an easier time building that project. It’s like you’re borrowing someone else’s experience and injecting it into your workflow.

Same thing with frontend design, accessibility standards, system architecture, SEO, UX wireframing, and so on.

Now I have tried to build some of these with AI (without being an expert in the domain) with more or less success.

Maybe this pattern will grow, though, to the point where we can instruct the agents better beforehand, maybe even selling skills to each other, so we don’t have to learn so much. Who knows.

Let’s cover bottlenecks too

I should cover the issues as well. This is not all rainbows and sunshine.

LLMs can be unreliable and cause real damage, we’re not in control of model drift, and then there’s the question of how judgement is built if we’re no longer coding.

The other day I was pulling my hair out because an integration wasn’t working. I’d asked Codex to document how to use an API from another application, then sent that documentation to Claude Code.

It took a few minutes to build the integration and then an hour for me to debug it, thinking it was something else entirely. But essentially Claude Code had made up the base URL for the endpoint, which should have been the one thing I checked but didn’t.

I kept asking it where it got this one from, and it said, “I can’t really say.”

You know the deal.
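The check I skipped can be partly automated. Here is a minimal Python sketch, assuming you have the documentation and the generated code as plain strings. The function names are hypothetical, and the URL matching is deliberately naive (it ignores IPv6 literals and URLs assembled at runtime). It lists every base URL the code uses that never appears in the documentation.

```python
import re
from urllib.parse import urlsplit

# Naive URL matcher: stops at whitespace, quotes, and brackets.
URL_PATTERN = re.compile(r"https?://[^\s\"'<>()\[\]]+")


def base_urls(text: str) -> set:
    """Collect the scheme://host[:port] part of every URL found in text."""
    found = set()
    for match in URL_PATTERN.findall(text):
        # Drop sentence punctuation that the regex may have swallowed.
        parts = urlsplit(match.rstrip(".,;:"))
        if parts.netloc:
            found.add(f"{parts.scheme}://{parts.netloc}".lower())
    return found


def unverified_base_urls(documentation: str, generated_code: str) -> set:
    """Return base URLs used in the code that never appear in the docs."""
    return base_urls(generated_code) - base_urls(documentation)
```

An empty result does not prove the integration is right, but a non-empty one is a cheap signal that the agent may have invented an endpoint.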

So it makes sense that it can get pretty bad when you give these agents real power. We’ve heard the stories by now.

In December, Amazon’s AI coding agent Kiro inherited an engineer’s elevated permissions, bypassed two-person approval, and deleted a live AWS production environment. This caused a 13-hour outage.

I know they have now made it mandatory to approve AI-generated code.

But I doubt manual review can be the main control layer if AI is writing this much code. So I wonder if the answer is better constraints, narrower blast radius, stronger testing, and better system level checks in some way.
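One way to picture a "narrower blast radius" is a gate that sits between the agent and the shell. The Python sketch below is only an illustration under my own assumptions: the deny-list patterns and the function names are hypothetical, it decides but never executes anything, and a real setup would rely on scoped permissions rather than string matching alone.

```python
import re

# Hypothetical deny-list; a real setup would tailor this to its own tooling.
DESTRUCTIVE_PATTERNS = [
    re.compile(r"\bterraform\s+destroy\b"),
    # rm with both the recursive and force flags, in any order (-rf, -fr, -Rf).
    re.compile(r"\brm\s+-(?=\w*r)(?=\w*f)\w+", re.IGNORECASE),
    re.compile(r"\bdrop\s+(table|database)\b", re.IGNORECASE),
]


def requires_human_approval(command: str) -> bool:
    """True if the command matches any pattern on the deny-list."""
    return any(p.search(command) for p in DESTRUCTIVE_PATTERNS)


def gate(command: str, approved: bool = False) -> str:
    """Decide whether an agent-issued command may run; never executes it."""
    if requires_human_approval(command) and not approved:
        return "blocked"
    return "allowed"
```

The point is that only the risky commands wait for a human, which scales better than reviewing every line the agent writes.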

It will be interesting to see what the future holds here.

There are more stories like this of course.

For example, Claude Code wiped a developer’s production database via a Terraform command, nuking 2.5 years of records (though Claude did warn him beforehand). OpenAI’s Codex wiped a user’s entire F: drive because of a character-escaping bug.

There is also model drift that we just don’t have control of as users. This means that the tools can degrade, maybe because of new releases, cost cutting fixes, etc.

Having the model suddenly stop working like it used to is more than a bit of a nuisance.

This isn’t new, and people have built their own monitoring tools for it.

Marginlab.ai runs daily SWE-bench benchmarks against Claude Code specifically to track degradation. Chip Huyen open-sourced Sniffly for tracking usage patterns and error rates.

The fact that the community felt the need to build all of this tells you something. We’re relying on these tools for serious work, but we’re not in charge of how they perform.
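I don’t know how those tools work internally, but the basic idea of tracking degradation can be sketched in a few lines of Python. Everything here is an assumption for illustration: the function name, the window sizes, and the 5-point tolerance. It compares the average of the most recent daily pass rates against the average of the days just before them.

```python
from statistics import mean
from typing import List


def has_degraded(pass_rates: List[float], baseline_days: int = 7,
                 recent_days: int = 3, tolerance: float = 0.05) -> bool:
    """Flag a drop when the recent average trails the baseline by more than tolerance.

    pass_rates holds one benchmark pass rate per day (0.0 to 1.0), oldest first.
    """
    if len(pass_rates) < baseline_days + recent_days:
        return False  # not enough history to compare
    baseline = mean(pass_rates[-(baseline_days + recent_days):-recent_days])
    recent = mean(pass_rates[-recent_days:])
    return baseline - recent > tolerance
```

For example, seven days at a 0.70 pass rate followed by three days at 0.60 would be flagged, while ten steady days at 0.70 would not.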

Then there is the whole judgement thing.

Anthropic ran a controlled trial with 52 mostly junior software engineers and found that the group using AI scored 17% lower on comprehension tests, roughly two letter grades worse than the group that coded by hand.

When you outsource the code writing part, you start losing the intuition that comes from working close to the code. The question is how much of a problem this will be.

This list is not exhaustive. There is also the question of what these tools actually cost once the subsidies disappear.

Rounding Up

This conversation is not about software engineering experience becoming unnecessary, nor about AI being useless.

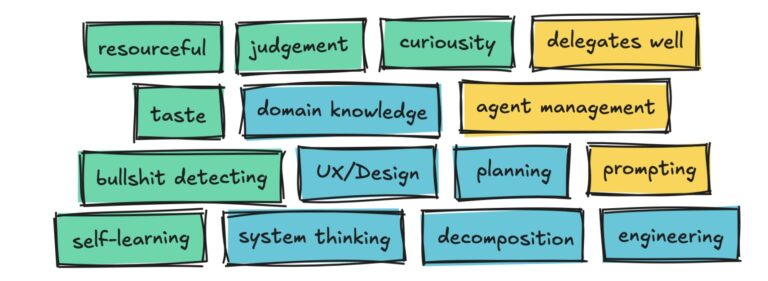

What I think is actually happening is that engineering in this space is shifting. System thinking, engineering experience, curiosity, breadth across domains, and analytical thinking will matter more than the ability to write the code by hand.

Maybe this means engineering is moving up a layer of abstraction, with AI shifting value away from hand coding and toward system judgment.

But I don’t think AI removes the need for engineering itself. Right now this is a new way to engineer software, one that is obviously much faster, but not without a lot of risks.

We’ve seen the progress exceed anything we expected, so it’s hard to say how far this goes.

But for now, a human still has to drive the project, take responsibility, and decide what is good and what is not.

This is my first opinion piece, as I usually write about building in the AI engineering space.

But since we’ve recently been building software using just AI with Claude Code, it seemed fitting to write a bit on this subject.

This is still the basics of vibe engineering. I know people have gone further than me, so there will probably be another one in the future talking about how naive I was here and how things have changed since then.

Alas, that’s just the way it is, and if you write, you need to swallow your pride and just be okay with feeling stupid.

Connect with me on LinkedIn to share your thoughts, or check out my other articles here, on Medium, or on my website.

❤