— that he saw further only by standing on the shoulders of giants — captures a timeless truth about science. Every breakthrough rests on countless layers of prior progress, until one day … it all just works. Nowhere is this more evident than in the recent and ongoing revolution in natural language processing (NLP), driven by the Transformers architecture that underpins most generative AI systems today.

“If I have seen further, it is by standing on the shoulders of Giants.”

— Isaac Newton, letter to Robert Hooke, February 5, 1675 (Old Style calendar; 1676 New Style)

In this article, I take on the role of an academic Sherlock Holmes, tracing the evolution of language modelling.

A language model is an AI system trained to predict and generate sequences of words based on patterns learned from large text datasets. It assigns probabilities to word sequences, enabling applications from speech recognition and machine translation to today’s generative AI systems.

Like all scientific revolutions, language modelling did not emerge overnight but builds on a rich heritage. In this article, I focus on a small slice of the vast literature in the field. Specifically, our journey begins with a pivotal earlier technology, the Relevance-Based Language Models of Lavrenko and Croft, which marked a step change in the performance of Information Retrieval systems in the early 2000s and continues to leave its mark in TREC competitions. From there, the trail leads to 2017, when researchers at Google published the seminal paper Attention Is All You Need, unveiling the Transformer architecture that revolutionised sequence-to-sequence translation tasks.

The key link between the two approaches is, at its core, quite simple: the powerful idea of attention. Just as Lavrenko and Croft’s Relevance Modelling estimates which terms are most likely to co-occur with a query, the Transformer’s attention mechanism computes the similarity between a query and all tokens in a sequence, weighting each token’s contribution to the query’s contextual meaning.

In both cases, this soft probabilistic weighting is what gives the technique its raw representational power.

Both models are generative frameworks over text, differing mainly in their scope: RM1 models short queries from documents, whereas Transformers model full sequences.

In the following sections, we will explore the background of Relevance Models and the Transformer architecture, highlighting their shared foundations and clarifying the parallels between them.

Relevance Modelling — Introducing Lavrenko’s RM1 Mixture Model

Let’s dive into the conceptual parallel between Lavrenko & Croft’s Relevance Modelling framework in Information Retrieval and the Transformer’s attention mechanism. Both emerged in different domains and eras, but they share the same intellectual DNA. We will walk through the background on Relevance Models, before outlining the key link to the subsequent Transformer architecture.

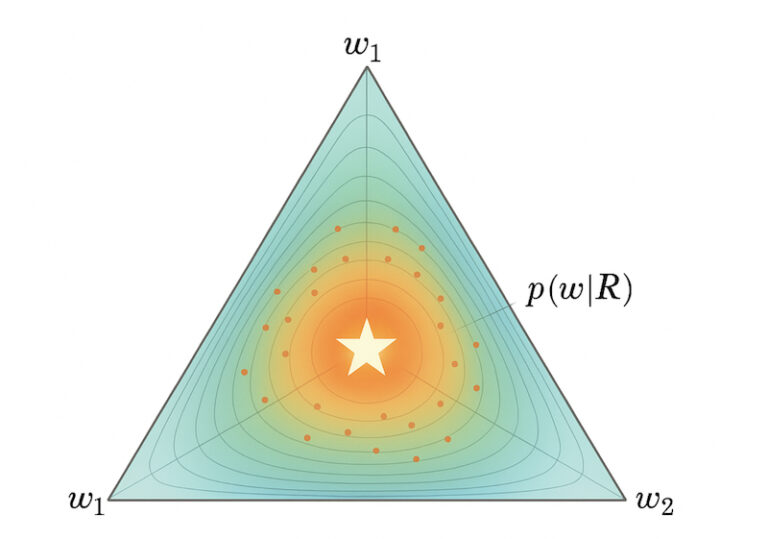

When Victor Lavrenko and W. Bruce Croft introduced the Relevance Model in the early 2000s, they offered an elegant probabilistic formulation for bridging the gap between queries and documents. At their core, these models start from a simple idea: assume there exists a hidden “relevance distribution” over vocabulary terms that characterises documents a user would consider relevant to their query. The task then becomes estimating this distribution from the observed data, namely the user query and the document collection.

The first Relevance Modelling variant, RM1 (there were two other models in the same family, not covered in detail here), does this directly by inferring the distribution of words likely to occur in relevant documents given a query, essentially modelling relevance as a latent language model that sits “behind” both queries and documents:

$$P(w \mid R, q) = \sum_{d} P(w \mid d)\, P(d \mid q)$$

with the posterior probability of a document d given a query q given by:

$$P(d \mid q) = \frac{P(q \mid d)\, P(d)}{\sum_{d'} P(q \mid d')\, P(d')}, \qquad P(q \mid d) = \prod_{w \in q} P(w \mid d)$$

Here P(q|d) is the classic unigram query-likelihood language model, which we will smooth with a Dirichlet prior. To estimate the relevance model, RM1 uses the top-retrieved documents as pseudo-relevance feedback (PRF): it assumes the highest-scoring documents are likely to be relevant. This means that no costly relevance judgements are required, a key advantage of Lavrenko’s formulation.

To build an intuition for how the RM1 model works, we’ll code it up step by step in Python, using a toy corpus of three “documents”, defined below, with the query “cat”.

```python
import math
from collections import Counter, defaultdict

# -----------------------
# Step 1: Example corpus
# -----------------------
docs = {
    "d1": "the cat sat on the mat",
    "d2": "the dog barked at the cat",
    "d3": "dogs and cats are friends"
}

# Query
query = ["cat"]
```

Next, for the purposes of this toy IR example, we lightly pre-process the document collection by splitting the documents into tokens, counting each token within each document, and defining the vocabulary:

```python
# -----------------------
# Step 2: Preprocess
# -----------------------
# Tokenize and count
doc_tokens = {d: doc.split() for d, doc in docs.items()}
doc_lengths = {d: len(toks) for d, toks in doc_tokens.items()}
doc_term_counts = {d: Counter(toks) for d, toks in doc_tokens.items()}

# Vocabulary
vocab = set(w for toks in doc_tokens.values() for w in toks)
```

If we run the above code we will get the following output, with four simple data structures holding the information we need to compute the RM1 distribution of relevance for any query.

```python
doc_tokens = {
    'd1': ['the', 'cat', 'sat', 'on', 'the', 'mat'],
    'd2': ['the', 'dog', 'barked', 'at', 'the', 'cat'],
    'd3': ['dogs', 'and', 'cats', 'are', 'friends']
}

doc_lengths = {
    'd1': 6,
    'd2': 6,
    'd3': 5
}

doc_term_counts = {
    'd1': Counter({'the': 2, 'cat': 1, 'sat': 1, 'on': 1, 'mat': 1}),
    'd2': Counter({'the': 2, 'dog': 1, 'barked': 1, 'at': 1, 'cat': 1}),
    'd3': Counter({'dogs': 1, 'and': 1, 'cats': 1, 'are': 1, 'friends': 1})
}

vocab = {
    'the', 'cat', 'sat', 'on', 'mat',
    'dog', 'barked', 'at',
    'dogs', 'and', 'cats', 'are', 'friends'
}
```

If we look at the RM1 equation defined earlier, we can break it up into key probabilistic components. P(w|d) defines the probability distribution of the words w in a document d. P(w|d) is usually computed using Dirichlet prior smoothing (Zhai & Lafferty, 2001). This prior avoids zero probabilities for unseen words and balances document-specific evidence with background collection statistics. This is defined as:

$$P(w \mid d) = \frac{\mathrm{tf}(w, d) + \mu\, P(w \mid C)}{|d| + \mu}$$

where tf(w, d) is the count of w in d, |d| is the length of d, P(w|C) is the probability of w in the whole collection C, and μ is the smoothing parameter.

The above equation gives us a bag-of-words unigram model for each of the documents in our corpus. As an aside, with powerful language models now readily available on Hugging Face, we could swap out this formulation for, say, a BERT-based variant that uses embeddings to estimate the distribution P(w|d).

In a BERT-based approach to P(w|d), we can derive a document embedding g(d) via mean pooling and a word embedding e(w), then combine them in a temperature-scaled softmax, for example over their dot products:

$$P(w \mid d) = \frac{\exp\big(e(w)^{\top} g(d) / \tau\big)}{\sum_{w' \in V} \exp\big(e(w')^{\top} g(d) / \tau\big)}$$

Here V denotes the pruned vocab (e.g., the union of document terms) and τ is a temperature parameter. This could be the first step towards creating a Neural Relevance Model (NRM), a largely unexplored and potentially novel direction in the field of IR.
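As a rough illustration of the embedding-based estimate described above, the sketch below applies a temperature-scaled softmax to dot products between word vectors and a mean-pooled document vector. The three-dimensional vectors are made-up stand-ins for real BERT embeddings, the names `toy_embeddings`, `mean_pool` and `p_w_given_d_neural` are hypothetical, and the dot product is just one possible choice of similarity, assumed here for simplicity.

```python
import math

# Hypothetical 3-dimensional "embeddings"; a real system would obtain these
# from an encoder such as BERT rather than hard-coding them.
toy_embeddings = {
    "cat": [0.9, 0.1, 0.0],
    "dog": [0.8, 0.2, 0.1],
    "mat": [0.1, 0.9, 0.0],
    "the": [0.3, 0.3, 0.3],
}

def mean_pool(tokens):
    """Document embedding g(d): the average of the token embeddings."""
    dim = len(next(iter(toy_embeddings.values())))
    pooled = [0.0] * dim
    for tok in tokens:
        for i, x in enumerate(toy_embeddings[tok]):
            pooled[i] += x / len(tokens)
    return pooled

def p_w_given_d_neural(doc_tokens, vocab, tau=0.1):
    """Softmax over e(w) . g(d) / tau, restricted to the pruned vocab V."""
    g_d = mean_pool(doc_tokens)
    logits = {
        w: sum(a * b for a, b in zip(toy_embeddings[w], g_d)) / tau
        for w in vocab
    }
    m = max(logits.values())  # subtract the max for numerical stability
    exps = {w: math.exp(z - m) for w, z in logits.items()}
    total = sum(exps.values())
    return {w: e / total for w, e in exps.items()}

dist = p_w_given_d_neural(["the", "cat", "the", "mat"], vocab=list(toy_embeddings))
```

A lower `tau` sharpens the distribution towards the best-matching words, while a higher value flattens it towards uniform.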

Back to the original formulation: the Dirichlet-smoothed estimate can be coded up in Python as our first estimate of P(w|d):

```python
# -----------------------
# Step 3: P(w|d)
# -----------------------
def p_w_given_d(w, d, mu=2000):
    """Dirichlet-smoothed language model."""
    tf = doc_term_counts[d][w]
    doc_len = doc_lengths[d]
    # collection probability
    cf = sum(doc_term_counts[dd][w] for dd in docs)
    collection_len = sum(doc_lengths.values())
    p_wc = cf / collection_len
    return (tf + mu * p_wc) / (doc_len + mu)
```

Next up, we compute the query likelihood under the document model, P(q|d):

```python
# -----------------------
# Step 4: P(q|d)
# -----------------------
def p_q_given_d(q, d):
    """Query likelihood under doc d."""
    score = 0.0
    for w in q:
        score += math.log(p_w_given_d(w, d))
    return math.exp(score)  # return likelihood, not log
```

RM1 requires P(d|q), so we invert P(q|d) using Bayes’ rule:

```python
# -----------------------
# Step 5: P(d|q)
# -----------------------
def p_d_given_q(q):
    """Posterior distribution over documents given query q."""
    # Compute query likelihoods for all documents
    scores = {d: p_q_given_d(q, d) for d in docs}
    # Assume uniform prior P(d), so proportionality is just scores
    Z = sum(scores.values())  # normalization
    return {d: scores[d] / Z for d in docs}
```

We assume here that the document prior is uniform, and so it cancels. We then normalize across all documents so the posteriors sum to 1:

$$P(d \mid q) = \frac{P(q \mid d)}{\sum_{d'} P(q \mid d')}$$

Similar to P(w|d), it is worth thinking about how we could neuralise the P(d|q) term in RM1. A first approach would be to use an off-the-shelf cross- or dual-encoder model (such as the MS MARCO fine-tuned BERT cross-encoder) to embed the query and document, produce a similarity score s(q, d), and normalize it with a softmax:

$$P(d \mid q) = \frac{\exp\big(s(q, d)\big)}{\sum_{d'} \exp\big(s(q, d')\big)}$$

With P(d|q) and P(w|d) converted to neural network-based representations, we can plug both together to get a simple initial version of a neural RM1 model that will give us back P(w|q).
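The two neural pieces can be combined in the same way as in classic RM1. The sketch below assumes we already have relevance scores s(q, d) from some encoder and a word distribution for each document; both are invented, hard-coded numbers here, and `softmax` and `neural_rm1` are illustrative names rather than functions from any library.

```python
import math

def softmax(scores):
    """Turn raw scores into a probability distribution."""
    m = max(scores.values())  # subtract the max for numerical stability
    exps = {k: math.exp(v - m) for k, v in scores.items()}
    total = sum(exps.values())
    return {k: e / total for k, e in exps.items()}

def neural_rm1(doc_scores, word_dists):
    """P(w|q) = sum_d P(w|d) * P(d|q), with P(d|q) = softmax of the scores."""
    p_d_given_q = softmax(doc_scores)
    p_w_given_q = {}
    for d, p_d in p_d_given_q.items():
        for w, p_w in word_dists[d].items():
            p_w_given_q[w] = p_w_given_q.get(w, 0.0) + p_w * p_d
    return p_w_given_q

# Invented encoder scores s(q, d) for the query "cat".
doc_scores = {"d1": 4.0, "d2": 3.5, "d3": 0.5}

# Invented word distributions P(w|d); each one sums to 1.
word_dists = {
    "d1": {"cat": 0.5, "mat": 0.5},
    "d2": {"cat": 0.4, "dog": 0.6},
    "d3": {"dogs": 0.7, "friends": 0.3},
}

p_w_given_q = neural_rm1(doc_scores, word_dists)
```

Because each P(w|d) sums to one and the softmax weights sum to one, the resulting mixture is itself a valid distribution over words, with no extra normalisation step needed.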

For the purposes of this article, however, we will switch back to the classic RM1 formulation. Let’s run the (non-neural, standard RM1) code so far to see the output of the various components we’ve just discussed. Recall that our toy document corpus is:

```
d1: "the cat sat on the mat"
d2: "the dog barked at the cat"
d3: "dogs and cats are friends"
```

With Dirichlet smoothing at μ=2000, every P(q|d) lies very close to the collection probability of “cat” (2/17 ≈ 0.118), because the documents are far shorter than μ: the code above returns about 0.1178 for d1 and d2 and 0.1174 for d3, so the posterior is almost uniform (roughly 0.3337, 0.3337 and 0.3325). The differences between documents only become visible with light smoothing, so for illustration the figures below use μ=1 (set mu=1 in p_w_given_d):

- d1: “cat” appears once in 6 words → P(q|d1) is approximately 0.16

- d2: “cat” appears once in 6 words → P(q|d2) is approximately 0.16

- d3: “cat” never appears → P(q|d3) is approximately 0.02 (non-zero only because of smoothing)

We now normalize these values to arrive at the posterior distribution:

```
{
'P(d1|q)': 0.4711,
'P(d2|q)': 0.4711,
'P(d3|q)': 0.0579
}
```

What is the key difference between P(d|q) and P(q|d)?

P(q|d) tells us how well the document “explains” the query. Imagine that each document is itself a mini language model: if it were generating text, how likely is it to produce the words we see in the query? This probability is high if the query words look natural under the document’s word distribution. For example, for the query “cat”, a document that literally mentions “cat” will give a high likelihood; one about “dogs and cats” a bit less; one about “Charles Dickens” close to zero.

In contrast, the probability P(d|q) codifies how much we should trust the document given the query. This flips the perspective using Bayes’ rule: now we ask, given the query, what is the probability that the user’s relevant document is d?

So instead of evaluating how well the document explains the query, we treat documents as competing hypotheses for relevance and normalise them into a distribution over all documents. This gives us a ranking score turned into probability mass — the higher it is, the more likely this document is relevant compared to the rest of the collection.
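To see how strongly the smoothing parameter shapes this posterior, the self-contained sketch below recomputes P(d|q) for the toy corpus with μ passed in explicitly. The function name `posterior` and its `mu` argument are illustrative additions for this example, as are the two values of μ being compared.

```python
from collections import Counter

docs = {
    "d1": "the cat sat on the mat",
    "d2": "the dog barked at the cat",
    "d3": "dogs and cats are friends",
}
counts = {d: Counter(text.split()) for d, text in docs.items()}
lengths = {d: sum(c.values()) for d, c in counts.items()}
collection_len = sum(lengths.values())

def p_w_given_d(w, d, mu):
    """Dirichlet-smoothed unigram probability of word w in document d."""
    p_wc = sum(c[w] for c in counts.values()) / collection_len
    return (counts[d][w] + mu * p_wc) / (lengths[d] + mu)

def posterior(query, mu):
    """P(d|q) under a uniform document prior."""
    likelihood = {}
    for d in docs:
        p = 1.0
        for w in query:
            p *= p_w_given_d(w, d, mu)
        likelihood[d] = p
    z = sum(likelihood.values())
    return {d: p / z for d, p in likelihood.items()}

# Compare light and heavy smoothing for the query "cat".
for mu in (1, 2000):
    print(mu, {d: round(p, 4) for d, p in posterior(["cat"], mu).items()})
```

With μ=1 the two documents that mention “cat” share almost all of the probability mass (about 0.47 each), whereas with μ=2000 the posterior is close to uniform, since the smoothing term dominates documents that are only five or six words long.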

We now have all components to finish our implementation of Lavrenko’s RM1 model:

```python
# -----------------------
# Step 6: RM1: P(w|R,q)
# -----------------------
def rm1(q):
    pdq = p_d_given_q(q)
    pwRq = defaultdict(float)
    for w in vocab:
        for d in docs:
            pwRq[w] += p_w_given_d(w, d) * pdq[d]
    # normalize
    Z = sum(pwRq.values())
    for w in pwRq:
        pwRq[w] /= Z
    return dict(sorted(pwRq.items(), key=lambda x: -x[1]))
```

We can now see that RM1 defines a probability distribution over the vocabulary that tells us which words are most likely to occur in documents relevant to the query. This distribution can then be used for query expansion, by adding high-probability words, or for re-ranking documents by measuring the KL divergence between each document’s language model and the query’s relevance model.

Running rm1(["cat"]) with the default μ=2000 returns something very close to the collection distribution (“the” ≈ 0.2353, “cat” ≈ 0.1176, every other term ≈ 0.0588), because the smoothing swamps our tiny documents. With light smoothing (μ=1 in p_w_given_d), the effect of the feedback documents becomes visible; terms with equal probability may appear in any order:

```
Top terms from RM1 for query ['cat'] (μ=1)
the 0.3031
cat 0.1516
sat 0.0758
on 0.0758
mat 0.0758
dog 0.0758
barked 0.0758
at 0.0758
dogs 0.0181
friends 0.0181
```

In our toy example, the high-frequency background word “the” comes out on top, though in practice it would be filtered out as a stop word; the query term “cat” follows, as it matches the query directly. More interestingly, content words from the documents containing “cat” (such as sat, mat, dog, barked) are elevated as well. This is the power of RM1: it introduces related terms not present in the query itself, without requiring explicit relevance judgments or supervision. Words unique to d3 (e.g., friends, dogs, cats) receive small but nonzero probabilities thanks to smoothing.

RM1 defines a query-specific relevance model, a language model induced from the query, which is estimated by averaging over documents likely relevant to that query.
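As a sketch of how such a relevance model might be put to work, the snippet below picks expansion terms from a word distribution and re-ranks documents by KL divergence. The relevance model and document models are small hand-written distributions used purely for illustration, and `expansion_terms`, `kl_divergence` and the stop-word set are assumptions of this example rather than part of the RM1 code above.

```python
import math

def expansion_terms(rel_model, query, stop_words, k=3):
    """Top-k relevance-model terms that are neither query terms nor stop words."""
    candidates = [
        (w, p) for w, p in rel_model.items()
        if w not in query and w not in stop_words
    ]
    candidates.sort(key=lambda wp: -wp[1])
    return [w for w, _ in candidates[:k]]

def kl_divergence(rel_model, doc_model):
    """KL(R || D) = sum_w P(w|R) * log(P(w|R) / P(w|D)); lower means closer.

    Assumes doc_model is smoothed, so P(w|D) > 0 for every word in rel_model.
    """
    return sum(
        p * math.log(p / doc_model[w])
        for w, p in rel_model.items() if p > 0
    )

# Hand-written distributions over a four-word vocabulary (illustrative only).
rel_model = {"the": 0.4, "cat": 0.3, "mat": 0.2, "dog": 0.1}
doc_models = {
    "dA": {"the": 0.4, "cat": 0.3, "mat": 0.2, "dog": 0.1},
    "dB": {"the": 0.4, "cat": 0.1, "mat": 0.1, "dog": 0.4},
}

terms = expansion_terms(rel_model, query=["cat"], stop_words={"the"}, k=2)
ranking = sorted(doc_models, key=lambda d: kl_divergence(rel_model, doc_models[d]))
```

Here `terms` holds the two strongest non-query, non-stop-word terms, and `ranking` places the document whose language model is closest to the relevance model first.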

Having now seen how RM1 builds a query-specific language model by reweighing document terms according to their posterior relevance, it’s hard not to notice the parallel with what came much later in deep learning: the attention mechanism in Transformers.

In RM1, we estimate a new distribution P(w|R, q) over words by combining document language models, weighted by how likely each document is relevant given the query. The Transformer architecture does something rather similar: given a token (the “query”), it computes a similarity to all other tokens (the “keys”), then uses those scores to weight their “values.” This produces a new, context-sensitive representation of the query token.

Lavrenko’s RM1 Model as a “proto-Transformer”

The attention mechanism, popularised by the Transformer architecture, addresses a key weakness of recurrent sequence models such as RNNs and LSTMs: their short memory horizons. While recurrent models struggled to capture long-range dependencies, attention made it possible to directly connect any token in a sequence with any other, regardless of the distance between them.

What’s interesting is that the mathematics of attention looks very similar to what RM1 was doing many years earlier. In RM1, as we’ve seen, we build a query-specific distribution by weighting documents; in Transformers, we build a token-specific representation by weighting other tokens in the sequence. The principle is the same — assign probability mass to the most relevant context — but applied at the token level rather than the document level.

If you strip Transformers down to their essence, the attention mechanism is really just RM1 applied at the token level.

This might be seen as a bold claim, so it’s incumbent upon us to provide some proof!

Let’s first dig a little deeper into the attention mechanism; for a fuller treatment, I defer to the wealth of high-quality introductory material that already exists.

In the Transformer’s attention layer — known as scaled dot-product attention — given a query vector q, we compute its similarity to all other tokens’ keys k. These similarities are normalized into weights through a softmax. Finally, those weights are used to blend the corresponding values v, producing a new, context-aware representation of the query token.

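Scaled dot-product attention is:

$$\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\left(\frac{QK^{\top}}{\sqrt{d_k}}\right) V$$

Here, Q = query vector(s), K = key vectors (documents, in our analogy), V = value vectors (words/features to be mixed), and d_k is the dimensionality of the keys. The softmax produces a normalised distribution over the keys.

Now, recall RM1 (Lavrenko & Croft 2001):

$$P(w \mid R, q) = \sum_{d} P(w \mid d)\, P(d \mid q)$$

The attention weights in scaled dot-product attention parallel the document–query distribution P(d|q) in RM1. Reformulating attention in per-query form makes this connection explicit:

$$\mathrm{Attention}(q, K, V) = \sum_{i} \alpha_i\, v_i, \qquad \alpha_i = \frac{\exp\big(q^{\top} k_i / \sqrt{d_k}\big)}{\sum_{j} \exp\big(q^{\top} k_j / \sqrt{d_k}\big)}$$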

The value vector — v — in attention can be thought of as corresponding to P(w|d) in the RM1 model, but instead of an explicit word distribution, v is a dense semantic vector — a low-rank surrogate for the full distribution. It’s effectively the content we mix together once we arrive at the relevance scores for each document.
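To make the correspondence concrete, here is a minimal pure-Python sketch of scaled dot-product attention for a single query vector. The two-dimensional keys and values are made-up numbers; in the RM1 reading, each key plays the role of a document, the softmax weights play the role of P(d|q), and each value stands in for P(w|d).

```python
import math

def attention(q, keys, values):
    """Scaled dot-product attention for one query: sum_i alpha_i * v_i."""
    d_k = len(q)
    scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in keys]
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]  # analogous to P(d|q)
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output

q = [1.0, 0.0]
keys = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]    # the "documents"
values = [[1.0, 0.0], [0.5, 0.5], [0.0, 1.0]]  # the "content" to be mixed
weights, output = attention(q, keys, values)
```

The values here were deliberately chosen to sum to one, so the output is a weighted mixture of distributions, which is exactly the form of the RM1 estimate. In a real Transformer the values are dense vectors with no such constraint.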

Zooming out to the wider Transformer architecture, multi-head attention can be seen as running multiple RM1-style relevance models in parallel with different projections.

We can draw further parallels with the rest of the Transformer architecture:

- Robust Probability Estimation: For example, we have previously discussed that RM1 needs smoothing (e.g., Dirichlet) to smooth zero counts and avoid overfitting to rare terms. Similarly, Transformers use residual connections and layer normalisation to stabilise and avoid collapsing attention distributions. Both models enforce robustness in probability estimation when the data signal is sparse or noisy.

- Pseudo Relevance Feedback: RM1 performs a single round of probabilistic expansion through pseudo-relevance feedback (PRF), restricting attention to the top-K retrieved documents. The PRF set functions like an attention context window: the query distributes probability mass over a limited set of documents, and words are reweighed accordingly. Similarly, transformer attention is limited to the local input sequence. Unlike RM1, however, transformers stack many layers of attention, each one reweighting and refining token distributions. Deep attention stacking can thus be seen as iterative pseudo-relevance feedback — repeatedly pooling across related context to build richer representations.
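The top-K restriction mentioned above can be sketched as a small change to the posterior step: keep only the K highest-scoring documents and renormalise over that set. The scores below are invented query likelihoods, and `top_k_posterior` is a hypothetical helper, not something defined in the RM1 code earlier in the article.

```python
def top_k_posterior(likelihoods, k):
    """P(d|q) restricted to the k documents with the highest query likelihood."""
    top = sorted(likelihoods, key=lambda d: -likelihoods[d])[:k]
    z = sum(likelihoods[d] for d in top)
    # Documents outside the feedback set receive zero weight.
    return {d: (likelihoods[d] / z if d in top else 0.0) for d in likelihoods}

# Invented query likelihoods P(q|d) for five documents.
likelihoods = {"d1": 0.16, "d2": 0.16, "d3": 0.02, "d4": 0.01, "d5": 0.05}
prf_weights = top_k_posterior(likelihoods, k=3)
```

Documents outside the feedback set contribute nothing to the mixture, much as tokens outside the context window contribute nothing to an attention output.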

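The analogy between RM1 and the Transformer is summarised in the table below, which ties together the components discussed above:

| RM1 (Relevance Model) | Transformer attention |
|---|---|
| Query q | Query vector q |
| Documents d | Keys k |
| Document posterior P(d \| q) | Softmax attention weights |
| Document language model P(w \| d) | Value vectors v |
| Relevance model P(w \| R, q) | Attention output (context-aware representation) |
| Several relevance models in parallel | Multi-head attention |
| Dirichlet smoothing | Residual connections and layer normalisation |
| Top-K pseudo-relevance feedback set | Context window |
| A single round of feedback | Many stacked attention layers |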

RM1 expressed a powerful but general idea: relevance can be understood as weighting mixtures of content based on similarity to a query.

Nearly two decades later, the same principle re-emerged in the Transformer’s attention mechanism — now at the level of tokens rather than documents. What began as a statistical model for query expansion in Information Retrieval evolved into the mathematical core of modern Large Language Models (LLMs). It is a reminder that beautiful ideas in science rarely disappear; they travel forward through time, reshaped and reinterpreted in new contexts.

Through the written word, scientists carry ideas across generations — quietly binding together waves of innovation — until, suddenly, a breakthrough emerges.

Sometimes the simplest ideas are the most powerful. Who would have imagined that “attention” could become the key to unlocking language? And yet, it is.

Conclusions and Final Thoughts

In this article, we have traced one branch of the vast tree that is language modelling, uncovering a compelling connection between the development of relevance models in early information retrieval and the emergence of Transformers in modern NLP. RM1, the first variant in the family of relevance models, was in many ways a proto-Transformer for IR, foreshadowing the mechanism that would later reshape how machines understand language.

We also sketched a neural variant of the Relevance Model built on modern encoder-only models, bringing past (relevance model) and present (Transformer architecture) together in the same probabilistic framework.

At the beginning, we invoked Newton’s image of standing on the shoulders of giants. Let us close with another of his reflections:

“I do not know what I may appear to the world, but to myself I seem to have been only like a boy playing on the seashore, and diverting myself in now and then finding a smoother pebble or a prettier shell than ordinary, whilst the great ocean of truth lay all undiscovered before me.” Newton, Isaac. Quoted in David Brewster, Memoirs of the Life, Writings, and Discoveries of Sir Isaac Newton, Vol. 2 (1855), p. 407.

I hope that you agree that the path from RM1 to Transformers is just such a discovery — a highly polished pebble on the shore of a much greater ocean of AI discoveries yet to come.

Disclaimer: The views and opinions expressed in this article are my own and do not represent those of my employer or any affiliated organizations. The content is based on personal experience and reflection, and should not be taken as professional or academic advice.