As deep learning models grow larger and datasets expand, practitioners face an increasingly common bottleneck: GPU memory bandwidth. While cutting-edge hardware offers FP8 precision to accelerate training and inference, most data scientists and ML engineers work with older GPUs that lack this capability.

This gap in the ecosystem is what motivated me to build Feather, an open-source library that utilises a software-based approach to deliver FP8-like performance improvements on widely available hardware. I created this tool to make efficient deep learning more accessible to the broader ML community, and I welcome contributions.

Notation & Abbreviations

- FPX: X-bit floating point number

- UX: X-bit unsigned integer

- GPU: Graphics processing unit

- SRAM: Static RAM (on-chip GPU Cache)

- HBM: High bandwidth memory (GPU VRAM)

- GEMV: General Matrix-Vector multiplication

Motivation

FP8 processing has proven effective in the deep learning community [1]; however, only recent hardware architectures (such as Ada, Hopper and Blackwell) support it natively, which limits how many practitioners and researchers can benefit from it. I myself have an `Nvidia RTX 3050 6GB Laptop GPU`, which unfortunately doesn’t support FP8 operations at the hardware level.

Inspired by software-based solutions such as software rendering, which lets computers without native hardware acceleration run games, this article proposes a way to harness the benefits of FP8 datatypes without native hardware support.

Packing FP8 & FP16 in FP32 containers

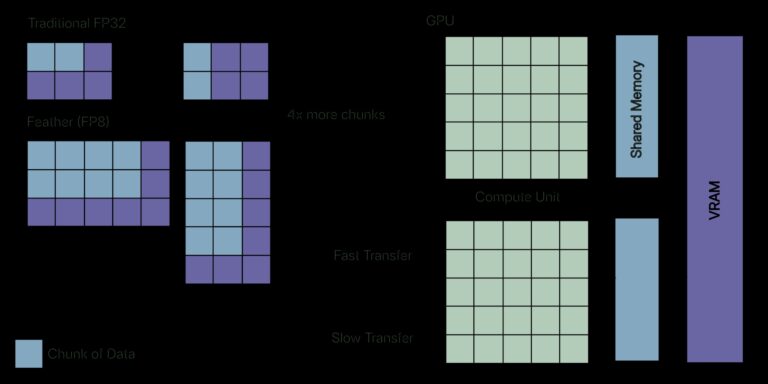

Inspired by bitwise operations and packing techniques, the article presents an algorithm that packs two FP16s or four FP8s into a single FP32. Each 32-bit container therefore holds two or four values instead of one. This halves or quarters the memory footprint while sacrificing only a small amount of precision.

One might argue that we’re performing redundant computation: “Pack -> Load -> Unpack -> Compute.” However, most deep learning operations are memory-bound rather than compute-bound. FlashAttention addresses the same bottleneck with a different strategy. It uses tiling to keep data in fast SRAM, whereas Feather compresses data to reduce memory traffic.

GPU Memory Hierarchy

Take a look at this diagram. SRAM is the fastest accessible GPU memory region and has the highest bandwidth (excluding registers), but its capacity is limited to only about 20MB. HBM can be viewed as the GPU’s VRAM itself; it has approximately 1/7th the bandwidth of SRAM.

The GPU cores can finish the arithmetic almost instantly, but they spend most of their time sitting idle, waiting for data to be loaded and written back. This is what I mean by memory-bound: the bottleneck isn’t the math but the data transfer between the levels of the GPU memory hierarchy.
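To put a number on “memory-bound”, we can estimate the arithmetic intensity of a GEMV, meaning the FLOPs performed per byte moved to or from HBM. The sketch below is a back-of-the-envelope model. It assumes the matrix and vector are each read once and the output is written once in FP32. `gemv_arithmetic_intensity` is a hypothetical helper of my own, not part of Feather.

```python
def gemv_arithmetic_intensity(m: int, n: int, bytes_per_elem: int) -> float:
    """FLOPs per byte of memory traffic for y = A @ x, with A of shape (m, n).

    Simplified model: A and x are read once at `bytes_per_elem` bytes per value,
    and y is written once in FP32 (4 bytes per value).
    """
    flops = 2 * m * n  # one multiply and one add per matrix element
    bytes_moved = (m * n + n) * bytes_per_elem + m * 4  # read A and x, write y
    return flops / bytes_moved


for name, size in [("FP32", 4), ("FP16", 2), ("FP8", 1)]:
    intensity = gemv_arithmetic_intensity(16384, 16384, size)
    print(f"{name}: {intensity:.2f} FLOPs/byte")
```

In FP32, a GEMV performs roughly 0.5 FLOPs per byte. That is far below the compute-to-bandwidth ratio GPUs can sustain, which is typically on the order of 10 FLOPs per byte or more, so the runtime is set by bandwidth. Shrinking each element to one byte quadruples the intensity without changing the math.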

Lower Precision Types & Bandwidth

Most of the time, values during computation stay within a limited range around zero thanks to normalisation, so lower-precision types such as FP16 and FP8 can represent them adequately. One might be confused about how lowering the precision allows for higher bandwidth. If we take a closer look, each 32-bit load fetches two values instead of one for FP16, and four values instead of one for FP8. We’re trading off precision for higher effective bandwidth to tackle memory-bound operations.
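A quick calculation makes the trade-off concrete. The snippet below computes two things for each precision: the storage needed for a 16384 × 16384 matrix (the size used in the GEMV benchmark later on), and how many values a single 32-bit load carries. It is plain arithmetic, not Feather code.

```python
M = N = 16384  # matrix shape from the GEMV benchmark

BYTES_PER_VALUE = {"FP32": 4, "FP16": 2, "FP8": 1}

footprint_mib = {}
for name, size in BYTES_PER_VALUE.items():
    footprint_mib[name] = M * N * size / 2**20  # total matrix size in MiB
    values_per_load = 4 // size                 # values carried by one 32-bit load
    print(f"{name}: {footprint_mib[name]:.0f} MiB, {values_per_load} value(s) per 32-bit load")
```

Reading 256 MiB instead of 1 GiB is where the up-to-4x headroom for memory-bound kernels comes from.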

Hardware Level Support

Much like AVX-512 instructions, which only some CPUs support, native low-precision arithmetic depends on the hardware. FP16 is supported by most modern GPUs, but FP8 tensor-core support is available only on recent architectures. If you are on an Nvidia RTX 30- or RTX 20-series GPU, you will not be able to take advantage of the FP8 type natively. This is exactly the problem that Feather attempts to solve.

Packing Method

Using bitwise operators, one can easily pack two FP16 values into a single 32-bit container. The algorithm is described below.

Packing FP16

- Cast the two input FP32 values to FP16; this step can be performed with ease using NumPy’s astype function.

- Reinterpret their bits as U16 (a bit-level view, not a numeric cast, which would change the value) and widen them to U32; this sets the upper 16 bits to 0s and keeps the FP16 bit pattern in the lower 16 bits.

- Shift one of them left by 16 bits using the bitwise LSHIFT operator, and combine both of them using the bitwise OR operator.
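The packing steps above can be sketched in NumPy as follows. This is a minimal illustration, not Feather’s actual code. The function name `pack_fp16_pair` and the choice of which value goes into the lower half are my own assumptions. I use a uint32 array as the 32-bit container because it keeps the bitwise operations explicit; the same bits could be viewed as float32 for storage.

```python
import numpy as np


def pack_fp16_pair(lo, hi):
    """Pack two FP32 arrays into one uint32 array holding two FP16 values per word.

    Hypothetical layout: `lo` goes into the lower 16 bits, `hi` into the upper 16 bits.
    """
    # Step 1: numeric conversion FP32 -> FP16 (rounds to nearest)
    lo16 = np.asarray(lo, dtype=np.float32).astype(np.float16)
    hi16 = np.asarray(hi, dtype=np.float32).astype(np.float16)
    # Step 2: reinterpret the FP16 bits as U16 (view, not astype), then widen to U32
    lo32 = lo16.view(np.uint16).astype(np.uint32)
    hi32 = hi16.view(np.uint16).astype(np.uint32)
    # Step 3: shift one value into the upper half and merge with bitwise OR
    return (hi32 << 16) | lo32


packed = pack_fp16_pair(np.array([1.0, -0.5]), np.array([2.0, 3.0]))
print([hex(w) for w in packed])  # ['0x40003c00', '0x4200b800']
```

Using `astype(np.uint16)` instead of `view` in step 2 would convert 1.0 to the integer 1 rather than keeping its bit pattern 0x3C00. That is why the reinterpretation matters.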

Unpacking FP16

- Extract the lower 16 bits using the bitwise AND operator and mask 0xFFFF.

- Extract the upper 16 bits using the RSHIFT operation by 16 and then perform a bitwise AND operation with the mask 0xFFFF.

- Reinterpret both U16 values as FP16 bit patterns, then convert them to FP32 if needed.
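Unpacking reverses those steps. Here is another hypothetical sketch (`unpack_fp16_pair` is my own name). The input word is built by hand from the FP16 bit patterns of 1.0 (0x3C00) and 2.0 (0x4000), so the expected halves are known in advance.

```python
import numpy as np


def unpack_fp16_pair(packed):
    """Split uint32 words into their lower and upper FP16 halves, returned as FP32."""
    words = np.asarray(packed, dtype=np.uint32)
    lo_bits = (words & 0xFFFF).astype(np.uint16)          # lower 16 bits
    hi_bits = ((words >> 16) & 0xFFFF).astype(np.uint16)  # upper 16 bits
    # Reinterpret the raw bits as FP16, then upcast to FP32
    lo = lo_bits.view(np.float16).astype(np.float32)
    hi = hi_bits.view(np.float16).astype(np.float32)
    return lo, hi


# 0x3C00 and 0x4000 are the FP16 bit patterns of 1.0 and 2.0
lo, hi = unpack_fp16_pair(np.array([0x40003C00], dtype=np.uint32))
print(lo, hi)  # [1.] [2.]
```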

Packing FP8

FP8 has two widely used formats: E5M2 and E4M3. One cannot reuse the FP16 algorithm directly, because NumPy does not support FP8: it offers a half-precision type (np.float16), but np.float8 doesn’t exist.

Casting an FP16 to FP8-E5M2 is straightforward, as seen in the figure. Both formats have 5 exponent bits and differ only in their fraction (10 bits in FP16 versus 2 bits in E5M2).

FP8-E5M2 Packing

- Cast the input FP32 into an FP16 using NumPy’s astype function, or accept FP16 input directly.

- Reinterpret the bits as U16 and RSHIFT by 8 to keep only the upper 8 bits (the sign, the 5 exponent bits and the top 2 fraction bits); this truncates the fraction to 2 bits.

- Do this for all four FP32s or FP16s.

- Widen the four 8-bit values to U32, shift them by 0, 8, 16 and 24 bits respectively using the LSHIFT operator, and combine them using the bitwise OR operator.

Once again, unpacking should be straightforward; it is the exact opposite of packing.
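The E5M2 steps and their inverse can be sketched with vectorised NumPy operations. This sketch rests on three assumptions:

- the input length is a multiple of 4;
- each group of four consecutive values fills one uint32 word, with the first value in the lowest byte;
- conversion is by truncation, exactly as described (a real implementation may round to nearest instead).

`pack_e5m2` and `unpack_e5m2` are hypothetical names, not Feather’s API.

```python
import numpy as np

SHIFTS = np.array([0, 8, 16, 24], dtype=np.uint32)  # LSHIFT amount for each of the 4 values


def pack_e5m2(values):
    """Pack values (length divisible by 4) as FP8-E5M2, four per uint32 word."""
    bits16 = np.asarray(values).astype(np.float16).view(np.uint16)
    # Keep the upper 8 bits: sign, 5 exponent bits, top 2 fraction bits (truncation)
    fp8 = (bits16 >> 8).astype(np.uint32).reshape(-1, 4)
    # Shift each byte into its slot and OR the four slots together
    return np.bitwise_or.reduce(fp8 << SHIFTS, axis=1)


def unpack_e5m2(packed):
    """Inverse of pack_e5m2: returns the decoded values as FP32."""
    fp8 = (np.asarray(packed, dtype=np.uint32)[:, None] >> SHIFTS) & 0xFF
    # Move each byte back into the upper half of an FP16 bit pattern
    bits16 = (fp8 << 8).astype(np.uint16).reshape(-1)
    return bits16.view(np.float16).astype(np.float32)


x = np.array([1.0, -2.0, 0.75, 1.5, 3.0, 0.1, -1.25, 1000.0], dtype=np.float32)
restored = unpack_e5m2(pack_e5m2(x))
print(restored)
```

Values with at most two significant fraction bits, such as 1.0, -2.0, 0.75 and 1.5, survive the round trip exactly. Truncation, however, turns 0.1 into 0.09375 and 1000.0 into 896.0.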

Packing an FP8-E4M3 is not as straightforward as packing an FP16 or FP8-E5M2 because of the exponent mismatch. E4M3 has 4 exponent bits (bias 7) instead of FP16’s 5 (bias 15), so the exponent must be re-biased rather than simply copied.

Instead of implementing this conversion from scratch, Feather uses the ml_dtypes library, which already does the casting math.

The ml_dtypes library adds NumPy dtypes for commonly used FP8 formats such as E5M2 and E4M3, so we can cast with the same astype function, just as we did for FP16. The remaining steps mirror the packing procedure above: reinterpret each 8-bit pattern, shift by 0, 8, 16 and 24 bits, and combine with OR. I’m skipping them here.
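To see why E4M3 needs more than a byte slice, here is a minimal decoder for one common E4M3 variant, the “fn” flavour, which ml_dtypes exposes as `float8_e4m3fn`. In this variant there are no infinities, and the only NaN encoding is an all-ones exponent with an all-ones fraction. The decoder illustrates the exponent re-biasing that ml_dtypes handles for you. It is not Feather’s or ml_dtypes’ code, and `decode_e4m3fn` is a hypothetical name.

```python
import numpy as np


def decode_e4m3fn(fp8_bits):
    """Decode FP8-E4M3 ('fn' variant: finite only, NaN = S.1111.111) bytes to FP32."""
    b = np.asarray(fp8_bits, dtype=np.uint8).astype(np.int32)
    sign = np.where((b & 0x80) != 0, -1.0, 1.0)
    exp = (b >> 3) & 0xF                 # 4 exponent bits, bias 7 (FP16: 5 bits, bias 15)
    man = (b & 0x7).astype(np.float64)   # 3 fraction bits
    normal = sign * (1.0 + man / 8.0) * np.exp2(exp - 7)
    subnormal = sign * (man / 8.0) * 2.0**-6  # exp == 0: no implicit leading 1
    out = np.where(exp == 0, subnormal, normal)
    out = np.where((exp == 0xF) & (man == 7), np.nan, out)  # the only NaN encodings
    return out.astype(np.float32)


print(decode_e4m3fn(np.array([0x38, 0x40, 0xB8, 0x01, 0x7E, 0x7F], dtype=np.uint8)))
```

These bytes decode to 1.0, 2.0, -1.0, the smallest subnormal (2^-9), the largest finite value (448) and NaN. For comparison, FP16 reaches 65504 with its 5-bit exponent. This is why no simple shift maps one format onto the other.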

Triton GPU Kernels

After packing, we need an algorithm (kernel) that consumes this packed datatype and performs the computation. Passing the packed data to a kernel written for FP32 inputs would produce meaningless results, because each 32-bit word no longer holds a single FP32 value. Writing a CUDA kernel that takes the packed datatype as input is not straightforward and is error-prone. This is exactly where Triton shines. It is a domain-specific language and compiler that uses a custom intermediate representation for GPU kernels. In layman’s terms, it lets you write GPU kernels in Python instead of CUDA C++.

Feather’s Triton kernels follow this approach; the algorithm is as follows:

- Load the packed array from GPU memory (HBM)

- Unpack the values and upcast them to FP32 for accumulation

- Perform the computation

Note that the computation itself runs in FP32: values are upcast before accumulation to prevent overflow and precision loss. From a computational perspective, therefore, there is no advantage. The gain comes from bandwidth, since each 32-bit load now delivers two or four values instead of one.
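Because Triton kernels are hard to debug, a CPU reference that mirrors the same structure (load, unpack, upcast, accumulate) is handy for checking results. The sketch below is my own illustration for the E5M2 format. It uses truncation-based packing with the first value in the lowest byte of each word. All function names are hypothetical, and this is not Feather’s API.

```python
import numpy as np

SHIFTS = np.array([0, 8, 16, 24], dtype=np.uint32)  # byte slot of each packed value


def to_packed_e5m2(x):
    """Hypothetical helper: truncate to E5M2, pack 4 values per uint32 along the last axis."""
    fp8 = (np.asarray(x, np.float16).view(np.uint16) >> 8).astype(np.uint32)
    fp8 = fp8.reshape(*fp8.shape[:-1], -1, 4)
    return np.bitwise_or.reduce(fp8 << SHIFTS, axis=-1)


def unpack_e5m2_block(words):
    """Unpack uint32 words into FP32 values (4 per word, lowest byte first)."""
    fp8 = (words[..., None] >> SHIFTS) & 0xFF
    bits16 = (fp8 << 8).astype(np.uint16)
    return bits16.view(np.float16).astype(np.float32).reshape(*words.shape[:-1], -1)


def packed_gemv_reference(packed_matrix, packed_vector, block_words=256):
    """CPU emulation of the kernel: load a block, unpack, upcast, accumulate in FP32."""
    rows, n_words = packed_matrix.shape
    out = np.zeros(rows, dtype=np.float32)
    for start in range(0, n_words, block_words):
        stop = min(start + block_words, n_words)
        mat = unpack_e5m2_block(packed_matrix[:, start:stop])  # (rows, 4 * block)
        vec = unpack_e5m2_block(packed_vector[start:stop])     # (4 * block,)
        out += mat @ vec                                       # FP32 partial dot products
    return out


rng = np.random.default_rng(0)
A = rng.standard_normal((64, 512)).astype(np.float32)
x = rng.standard_normal(512).astype(np.float32)
y = packed_gemv_reference(to_packed_e5m2(A), to_packed_e5m2(x), block_words=32)

# Same math without the block loop, for comparison
y_check = unpack_e5m2_block(to_packed_e5m2(A)) @ unpack_e5m2_block(to_packed_e5m2(x))
print(np.max(np.abs(y - y_check)))
```

Both paths use the same dequantised values, so they should agree up to floating-point summation order. This makes the reference a quick sanity check for a GPU kernel’s output.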

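Triton Kernel Implementation (simplified sketch)

```python
import triton
import triton.language as tl

# Simplified sketch of the E5M2 path: assumes the packed matrix and vector are
# int32 tensors (four FP8-E5M2 values per word, first value in the lowest byte).
# Feather's actual kernels may differ.


@triton.jit
def unpack_fp8(packed, shift: tl.constexpr):
    # Extract one byte per word and move it into the upper half of an FP16 pattern
    byte = (packed >> shift) & 0xFF
    bits16 = (byte << 8).to(tl.uint16)
    # Reinterpret the bits as FP16, then upcast to FP32 for accumulation
    return bits16.to(tl.float16, bitcast=True).to(tl.float32)


@triton.jit
def gemv_fp8_kernel(packed_matrix_ptr, packed_vector_ptr, out_ptr, n_words,
                    BLOCK: tl.constexpr):
    # Get the current row to process (one program per output element)
    row_id = tl.program_id(0)
    # Initialise per-lane accumulators for the dot product
    accumulator = tl.zeros([BLOCK], dtype=tl.float32)

    # Iterate over the row in blocks of packed 32-bit words
    for start in range(0, n_words, BLOCK):
        offsets = start + tl.arange(0, BLOCK)
        mask = offsets < n_words
        # Load packed words (each contains 4 FP8 values); masked lanes read 0
        packed_matrix = tl.load(packed_matrix_ptr + row_id * n_words + offsets,
                                mask=mask, other=0)
        packed_vector = tl.load(packed_vector_ptr + offsets, mask=mask, other=0)
        # Unpack the 4 FP8 values, upcast to FP32 and add the partial dot products
        for shift in tl.static_range(0, 32, 8):
            accumulator += unpack_fp8(packed_matrix, shift) * unpack_fp8(packed_vector, shift)

    # Reduce the partial sums and store the final result
    tl.store(out_ptr + row_id, tl.sum(accumulator, axis=0))


# Example launch (M rows, N columns, N divisible by 4):
# gemv_fp8_kernel[(M,)](packed_matrix, packed_vector, out, N // 4, BLOCK=1024)
```

Results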

Hardware: NVIDIA GeForce RTX 3050 6GB VRAM

CUDA Version: 13.0

Python Version: 3.13.9

GEMV Benchmark (M × N matrix, M = N = 16384)

| Implementation | Time (microseconds) | Speedup |
|---|---|---|
| PyTorch (FP32) | 5,635 | (Baseline) |
| Feather (FP8-E4M3) | 2,703 | 2.13x |
| Feather (FP8-E5M2) | 1,679 | 3.3x |

The theoretical upper bound is 4x, since each 32-bit load carries four FP8 values instead of one FP32 value. 3.3x comes close; the remaining overhead stems primarily from pack/unpack operations and kernel launch costs.
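A back-of-the-envelope check supports the memory-bound reading of these numbers. The snippet below converts the reported timings into effective bandwidth. It counts only the bytes of the matrix itself, since the vector and output are negligible at this size, and it assumes the FP8 matrix is read exactly once. Extra traffic, such as that of E4M3’s separate unpacking kernel, is ignored, so treat the results as rough.

```python
M = N = 16384  # GEMV benchmark matrix shape

# Reported timings (microseconds) and storage size per matrix element (bytes)
results = {
    "PyTorch (FP32)": (5635, 4),
    "Feather (FP8-E4M3)": (2703, 1),
    "Feather (FP8-E5M2)": (1679, 1),
}

effective_gb_s = {}
for name, (time_us, bytes_per_value) in results.items():
    matrix_bytes = M * N * bytes_per_value  # ignores vector and output traffic
    effective_gb_s[name] = matrix_bytes / (time_us * 1e-6) / 1e9
    print(f"{name}: {effective_gb_s[name]:.1f} GB/s")
```

The FP32 baseline and the E5M2 kernel land in the same ballpark (roughly 190 and 160 GB/s). This is what we would expect if runtime is governed by bytes moved. The E5M2 kernel sustains about 84% of the baseline’s effective bandwidth, which matches the gap between 3.3x and the ideal 4x.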

E5M2 is faster than E4M3 because it is easier to unpack. E4M3 offers better precision (3 fraction bits instead of 2), but it is significantly more complex to unpack; Feather uses a separate GPU kernel to unpack the E4M3 format.

FlashAttention Benchmark (Sequence Length = 8192, Embedding Dimension = 512)

| Implementation | Time (microseconds) | Speedup |
|---|---|---|
| PyTorch (FP32) | 33,290 | (Baseline) |
| Feather (FP8-E5M2) | 9,887 | ~3.3x |

Accuracy & Precision

Testing with random matrices (integer distributions in the range [-3, 3] and standard normal distributions) shows that both E4M3 and E5M2 maintain numerical results within practical tolerances for deep learning operations. The accumulation errors remain manageable for typical workload sizes; however, users requiring strict numerical precision should validate their specific use case.
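As a starting point for validating your own use case, the sketch below compares a GEMV on FP8-E5M2-quantised inputs against an FP64 reference. It assumes the truncation-based E5M2 conversion described earlier, which keeps the upper byte of the FP16 bit pattern. `e5m2_roundtrip` is a hypothetical helper. For a real check, substitute your actual data, and ideally Feather’s own kernels, for the random matrices used here.

```python
import numpy as np


def e5m2_roundtrip(x):
    """Hypothetical helper: quantise to FP8-E5M2 by truncation and return FP32."""
    bits16 = np.asarray(x, dtype=np.float16).view(np.uint16)
    # Keep the upper byte (sign, 5 exponent bits, 2 fraction bits), zero the rest
    return (bits16 & np.uint16(0xFF00)).view(np.float16).astype(np.float32)


rng = np.random.default_rng(42)
A = rng.standard_normal((256, 1024)).astype(np.float32)
v = rng.standard_normal(1024).astype(np.float32)
A_q, v_q = e5m2_roundtrip(A), e5m2_roundtrip(v)

# Worst-case element-wise relative error (tiny magnitudes excluded)
big = np.abs(A) > 1e-3
elem_rel_err = np.max(np.abs(A_q[big] - A[big]) / np.abs(A[big]))

# Relative error of the whole GEMV against an FP64 reference
ref = A.astype(np.float64) @ v.astype(np.float64)
approx = (A_q @ v_q).astype(np.float64)
gemv_rel_err = np.linalg.norm(approx - ref) / np.linalg.norm(ref)

print(f"max element-wise relative error: {elem_rel_err:.3f}")
print(f"GEMV relative error (L2 norm):   {gemv_rel_err:.3f}")
```

With truncation, each element’s relative error is bounded by roughly 2^-2 = 0.25, the spacing of a 2-bit fraction. The second metric shows how those element-wise errors aggregate over full dot products for your data.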

When should you use Feather?

Feather is useful wherever FP8 packing and unpacking pay off, such as:

- Large matrix-vector products, where loading and storing data is the bottleneck.

- Attention-like memory-bound kernels.

- Inference or fine-tuning on RTX 30- or 20-series GPUs, which lack native FP8 support.

- Batch processing, where the packing overhead is amortised.

When should you not use Feather?

- You have RTX 40-series or H100 GPUs (native FP8 is faster).

- Workloads are compute-bound rather than bandwidth- or memory-bound.

- You need guaranteed precision.

Limitations of Feather

Feather is currently in the early stages of prototyping with several areas for improvement.

- Limited support for operations; currently, Feather supports only the dot product, GEMV subroutine and FlashAttention.

- Accuracy validation for complete ML workloads; currently, Feather’s accuracy is validated only for operations, not for end-to-end ML workloads.

- Integration is currently limited; Feather is a standalone implementation. Integration with PyTorch and support for autograd would make it more production-ready.

The project is open source; community contributions are welcome! You can try out the code by simply following the instructions on GitHub.

Image License: All the images are made by the author. Adaptation sources are clearly mentioned in respective captions.