You are analyzing a small dataset:

\[X = [15, 8, 13, 7, 7, 12, 15, 6, 8, 9]\]

You want to calculate some summary statistics to get an idea of the distribution of this data, so you use numpy to calculate the mean and variance.

import numpy as np

X = [15, 8, 13, 7, 7, 12, 15, 6, 8, 9]

mean = np.mean(X)

var = np.var(X)

print(f"Mean={mean:.2f}, Variance={var:.2f}")

Your output looks like this:

Mean=10.00, Variance=10.60

Great! Now you have an idea of the distribution of your data. However, your colleague comes along and tells you that they also calculated some summary statistics on this same dataset using the following code:

import pandas as pd

X = pd.Series([15, 8, 13, 7, 7, 12, 15, 6, 8, 9])

mean = X.mean()

var = X.var()

print(f"Mean={mean:.2f}, Variance={var:.2f}")

Their output looks like this:

Mean=10.00, Variance=11.78

The means are the same, but the variances are different! What gives?

This discrepancy arises because numpy and pandas use different default equations for calculating the variance of an array. This article will mathematically define the two variances, explain why they differ, and show how to use either equation in different numerical libraries.

Two Definitions

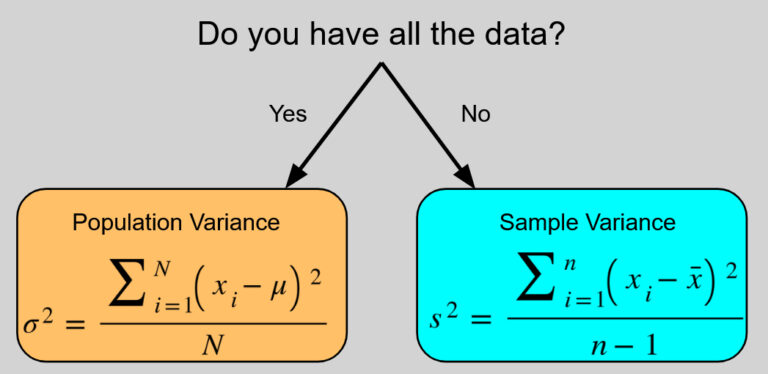

There are two standard ways to calculate the variance, each meant for a different purpose. It comes down to whether you are calculating the variance of the entire population (the complete group you are studying) or just a sample (a smaller subset of that population you actually have data for).

The population variance, \(\sigma^2\), is defined as:

\[\sigma^2 = \frac{\sum_{i=1}^N(x_i-\mu)^2}{N}\]

While the sample variance, \(s^2\), is defined as:

\[s^2 = \frac{\sum_{i=1}^n(x_i-\bar x)^2}{n-1}\]

(Note: \(x_i\) represents each individual data point in your dataset. \(N\) represents the total number of data points in a population, \(n\) represents the total number of data points in a sample, and \(\bar x\) is the sample mean).
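To make the two formulas concrete, here is a minimal sketch that applies both to the dataset from the introduction in plain Python. The variable names (`ss`, `population_variance`, `sample_variance`) are illustrative choices, not part of any library. Because the same ten numbers are used in both cases, the numerator is identical and only the divisor changes.

```python
X = [15, 8, 13, 7, 7, 12, 15, 6, 8, 9]

n = len(X)
mean = sum(X) / n

# Sum of squared deviations from the mean (the numerator in both formulas)
ss = sum((x - mean) ** 2 for x in X)

population_variance = ss / n       # divide by the number of data points
sample_variance = ss / (n - 1)     # divide by one less than that

print(f"Sum of squares={ss:.2f}")                          # 106.00
print(f"Population variance={population_variance:.2f}")    # 10.60
print(f"Sample variance={sample_variance:.2f}")            # 11.78
```

These are the same two values that numpy and pandas reported at the start of the article.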

Notice the two key differences between these equations:

- In the numerator’s sum, \(\sigma^2\) is calculated using the population mean, \(\mu\), while \(s^2\) is calculated using the sample mean, \(\bar x\).

- In the denominator, \(\sigma^2\) divides by the total population size \(N\), while \(s^2\) divides by the sample size minus one, \(n-1\).

The distinction between these two definitions matters most for small sample sizes. As \(n\) grows, the difference between dividing by \(n\) and dividing by \(n-1\) becomes less and less significant.
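As a rough illustration of how quickly the gap closes, the sketch below compares the two formulas on made-up data (the integers 0 through n-1, chosen only for convenience) using Python's built-in statistics module. For any dataset, the ratio of the two results is (n-1)/n, so it approaches 1 as the number of data points grows.

```python
import statistics

ratios = {}
for n in (10, 100, 1000, 10000):
    data = list(range(n))  # hypothetical data: 0, 1, ..., n-1
    # Same numerator in both, so the ratio reduces to (n - 1) / n
    ratios[n] = statistics.pvariance(data) / statistics.variance(data)
    print(f"n={n:>5}: population/sample ratio = {ratios[n]:.4f}")
```

With 10 data points the two variances differ by 10%; with 10,000 they differ by 0.01%.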

Why Are They Different?

When calculating the population variance, it is assumed that you have all the data. You know the exact center (the population mean \(\mu\)) and exactly how far every point is from that center. Dividing by the total number of data points gives the true, exact average of those squared differences.

However, when calculating the sample variance, it is not assumed that you have all the data, so you do not have the true population mean \(\mu\). Instead, you only have an estimate of \(\mu\): the sample mean \(\bar x\). It turns out that using the sample mean in place of the true population mean tends to underestimate the true population variance on average.

This happens because the sample mean is calculated directly from the sample data, meaning it sits in the exact mathematical center of that specific sample. As a result, the squared differences measured from the sample mean add up to no more than, and usually less than, the squared differences measured from the true population mean, leading to an artificially smaller sum of squared differences.
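This can be made precise with a standard algebraic identity, written here with the same symbols as the definitions earlier. For any sample, the squared differences around the population mean split into the squared differences around the sample mean plus one extra term:

\[\sum_{i=1}^n(x_i-\mu)^2 = \sum_{i=1}^n(x_i-\bar x)^2 + n(\bar x-\mu)^2\]

Since \(n(\bar x-\mu)^2\) can never be negative, the sum around \(\bar x\) can never exceed the sum around \(\mu\), and the two are equal only when the sample mean happens to land exactly on the population mean.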

To correct for this underestimation, we apply what is called Bessel’s correction (named for German mathematician Friedrich Wilhelm Bessel): we divide not by \(n\) but by the slightly smaller \(n-1\), since dividing by a smaller number makes the final variance slightly larger.
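The bias and its correction can be checked with a small simulation. The sketch below is one possible setup, not a definitive experiment: it assumes a normally distributed population with a known variance of 4, draws many small samples of size 5, and averages the variance estimates obtained with each divisor. The seed, sample size, and number of trials are arbitrary choices.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed so the run is repeatable

true_variance = 4.0   # assumed population: normal with standard deviation 2
n = 5                 # small sample size, where the bias is most visible
trials = 20_000

# Each row is one independent sample of size n
samples = rng.normal(loc=0.0, scale=2.0, size=(trials, n))

biased = np.var(samples, axis=1, ddof=0).mean()     # divide by n
corrected = np.var(samples, axis=1, ddof=1).mean()  # divide by n - 1

print(f"True variance:                  {true_variance:.2f}")
print(f"Average when dividing by n:     {biased:.2f}")
print(f"Average when dividing by n - 1: {corrected:.2f}")
```

Under these assumptions, the average when dividing by n should land near 3.2 (that is, 4 × 4/5), while the average when dividing by n - 1 should land near the true value of 4.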

Degrees of Freedom

So why divide by \(n-1\) rather than some other correction that would also increase the final variance? That comes down to a concept called the Degrees of Freedom.

The degrees of freedom refers to the number of independent values in a calculation that are free to vary. For example, imagine you have a set of 3 numbers, \(x_1, x_2, x_3\). You do not know what the values of these are, but you do know that their sample mean \(\bar x = 10\).

- The first number could be anything (let’s say 8)

- The second number could also be anything (let’s say 15)

- Because the mean must be 10, \(x_3\) is not free to vary and must be the one number such that \((8 + 15 + x_3)/3 = 10\), which in this case is 7.

So in this example, even though there are 3 numbers, there are only two degrees of freedom, as enforcing the sample mean removes the ability of one of them to be free to vary.
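The three-number example can be reproduced in a few lines. This is only a sketch of the arithmetic, with illustrative variable names: once the mean is fixed, the last value follows from the others, and the deviations from the mean are forced to sum to zero.

```python
n = 3
target_mean = 10

free_values = [8, 15]  # the two values we were free to pick

# The mean constraint fixes the total, so the last value is forced
last_value = n * target_mean - sum(free_values)

values = free_values + [last_value]
deviations = [v - target_mean for v in values]

print(f"Forced third value: {last_value}")                      # 7
print(f"Deviations: {deviations}, sum = {sum(deviations)}")     # [-2, 5, -3], sum = 0
```

Because the deviations must always sum to zero, knowing any two of them determines the third, which is another way of saying that only two of them carry independent information.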

In the context of variance, before making any calculations, we start with \(n\) degrees of freedom (corresponding to our \(n\) data points). The calculation of the sample mean essentially uses up one degree of freedom, so by the time the sample variance is calculated, there are \(n-1\) degrees of freedom left to work with, which is why \(n-1\) is what appears in the denominator.
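The same conclusion can be reached algebraically. As a sketch, assume the \(n\) data points are independent draws from a population with mean \(\mu\) and variance \(\sigma^2\), so that the sample mean \(\bar x\) has variance \(\sigma^2/n\). Taking the average (expected value) of the sum of squared differences then gives:

\[E\left[\sum_{i=1}^n(x_i-\bar x)^2\right] = E\left[\sum_{i=1}^n(x_i-\mu)^2\right] - n\,E\left[(\bar x-\mu)^2\right] = n\sigma^2 - n\cdot\frac{\sigma^2}{n} = (n-1)\sigma^2\]

Under that assumption, dividing the sum by \(n-1\) produces an estimate that equals \(\sigma^2\) on average, while any other divisor would be off on average.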

Library Defaults and How to Align Them

Now that we understand the math, we can finally solve the mystery from the beginning of the article! numpy and pandas gave different results because they default to different variance formulas.

Many numerical libraries control this using a parameter called ddof, which stands for Delta Degrees of Freedom. This represents the value subtracted from the total number of observations in the denominator.

- Setting ddof=0 divides the equation by \(N\), calculating the population variance.

- Setting ddof=1 divides the equation by \(n-1\), calculating the sample variance.

These can also be applied when calculating the standard deviation, which is just the square root of the variance.
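To show what the ddof parameter does to the denominator, here is a minimal pure-Python sketch. The `variance` and `std` helpers are hypothetical functions written for this illustration; they are not how numpy or pandas implement the calculation internally, and the default of ddof=0 is an arbitrary choice.

```python
import math

def variance(data, ddof=0):
    """Variance with a ddof-style denominator of len(data) - ddof."""
    n = len(data)
    if n - ddof <= 0:
        raise ValueError("need more data points than ddof")
    mean = sum(data) / n
    ss = sum((x - mean) ** 2 for x in data)  # sum of squared deviations
    return ss / (n - ddof)

def std(data, ddof=0):
    """Standard deviation: the square root of the variance above."""
    return math.sqrt(variance(data, ddof))

X = [15, 8, 13, 7, 7, 12, 15, 6, 8, 9]

print(f"ddof=0: variance={variance(X, 0):.2f}, std={std(X, 0):.2f}")  # 10.60, 3.26
print(f"ddof=1: variance={variance(X, 1):.2f}, std={std(X, 1):.2f}")  # 11.78, 3.43
```

The only line that changes between the two results is the final division, which is the whole effect of ddof.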

Here is a breakdown of how different popular libraries handle these defaults and how you can override them:

numpy

By default, numpy assumes you are calculating the population variance (ddof=0). If you are working with a sample and need to apply Bessel’s correction, you must explicitly pass ddof=1.

import numpy as np

X = [15, 8, 13, 7, 7, 12, 15, 6, 8, 9]

# Sample variance and standard deviation

np.var(X, ddof=1)

np.std(X, ddof=1)

# Population variance and standard deviation (Default)

np.var(X)

np.std(X)

pandas

By default, pandas takes the opposite approach. It assumes your data is a sample and calculates the sample variance (ddof=1). To calculate the population variance instead, you must pass ddof=0.

import pandas as pd

X = pd.Series([15, 8, 13, 7, 7, 12, 15, 6, 8, 9])

# Sample variance and standard deviation (Default)

X.var()

X.std()

# Population variance and standard deviation

X.var(ddof=0)

X.std(ddof=0)

Python’s Built-in statistics Module

Python’s standard library does not use a ddof parameter. Instead, it provides explicitly named functions so there is no ambiguity about which formula is being used.

import statistics

X = [15, 8, 13, 7, 7, 12, 15, 6, 8, 9]

# Sample variance and standard deviation

statistics.variance(X)

statistics.stdev(X)

# Population variance and standard deviation

statistics.pvariance(X)

statistics.pstdev(X)

R

In R, the standard var() and sd() functions calculate the sample variance and sample standard deviation by default. Unlike the Python libraries, R does not have a built-in argument to easily swap to the population formula. To calculate the population variance, you must manually multiply the sample variance by \((n-1)/n\).

X <- c(15, 8, 13, 7, 7, 12, 15, 6, 8, 9)

n <- length(X)

# Sample variance and standard deviation (Default)

var(X)

sd(X)

# Population variance and standard deviation

var(X) * ((n - 1) / n)

sd(X) * sqrt((n - 1) / n)

Conclusion

This article explored a frustrating yet often unnoticed trait of different statistical programming languages and libraries — they elect to use different default definitions of variance and standard deviation. An example was given where for the same input array, numpy and pandas return different values for the variance by default.

This came down to the difference between how variance should be calculated for the entire statistical population being studied and how it should be calculated from just a sample of that population, with different libraries making different choices about the default. Finally, it was shown that although each library has its default, they can all be used to calculate both types of variance by using a ddof argument, a slightly different function, or a simple mathematical transformation.

Thank you for reading!