to this “Advent Calendar” of Machine learning and deep learning in Excel.

For Day 1, we begin with the k-NN (k-Nearest Neighbors) regressor algorithm. And as you will see, this is really the simplest model and it is a good way to start.

For those who already know this model, here are some questions for you. Hopefully, they will make you want to continue reading. And there are some subtle lessons that are not taught in traditional courses.

- Is scaling of continuous features important for this model?

- How can categorical features be handled?

- What should be done to the continuous features to improve model performance?

- What types of distance measures can be more suitable in certain situations? For example, when predicting house prices where geographical location matters?

Spoiler: with a naive k-NN, you cannot get the ideal scaling automatically.

It is also an opportunity, if you are not familiar with Excel formulas, to use formulas such as RANK, IF, SUMPRODUCT, and other useful Excel functions.

You can use this link to get the Excel/Google Sheet file, and my advice is that you follow the article, and you do some testing with the file to better understand.

The principle of k-NN

If you want to sell or buy an apartment, how would you estimate the price?

Please think about a very realistic approach, not some complex model that you have to spend hours to build.

Something that you can do for real.

Well, you would probably ask your neighbors who have an apartment of the same or similar size. And you calculate the average value of these apartments.

Yes, that is exactly the idea of k-NN, for k-Nearest Neighbors: search for the most similar examples, and use their values to estimate the new one.

To illustrate this task with a concrete example of house pricing estimation, we will use this well-known dataset called California Housing Dataset. This is the Census data from California block groups, used to predict median house value.

Each observation is not an individual house, but it is still interesting to use this example.

Here is a quick description of the variables.

Target variable is MedHouseVal, which is the median house value, in units of 100,000 USD (example: 3.2 means 320,000 dollars).

The feature variables are as follows:

1. MedInc: median income (in units of 10,000 USD)

2. HouseAge: median age of houses

3. AveRooms: average number of rooms per household

4. AveBedrms: average number of bedrooms per household

5. Population: people living in the block group

6. AveOccup: average number of occupants per household

7. Latitude: geographic latitude

8. Longitude: geographic longitude

k-NN with One Continuous Feature

Before we use multiple features to find the neighbors, let’s first only use one feature and a few observations.

Even though the process for one continuous feature will be very simple, we will still follow every step. We first explore our dataset, then we train the model with a hyperparameter, and at last, we can use the model to predict.

Training dataset

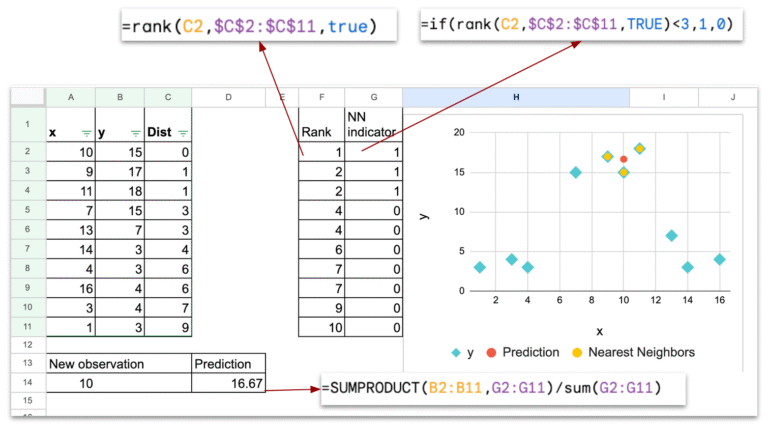

Here is the plot of this simple dataset of 10 observations. The x-axis is the continuous feature, and the y-axis is the target variable.

Now, imagine that we have to predict the value for a new observation x=10. How can we do that?

Model training?

The first step for almost all machine learning models is training.

But for k-NN, your model is your entire dataset. In other words, you don’t have to train the model, you use the original dataset directly.

So in scikit-learn, when you do model.fit, for a k-NN estimator, nothing really happens.

Some may ask: what about k?

Well, k is the hyperparameter. So you have to choose a value for k, and it can be tuned.

Prediction for one new observation

For the hyperparameter k, we will use k=3, since the dataset is very small.

For one feature variable, the distance can trivially be the absolute value of the difference of the value between the new observation and the others.

In the sheet “algo1D”, you can change the value of the new observation, and use the filter on distance column C to order the dataset in the increasing order, the 3-nearest neighbors will be plotted.

To make the calculation more automatic, we can use RANK function to see the smallest observations in terms of distance.

And we also can create a column of indicators (column G), with indicator = 1, if they belong to the k-nearest neighbors.

Finally, for the prediction, we can use SUMPRODUCT to calculate the average value of all y values with indicator =1.

In the plot,

- the light blue dots represent the dataset

- the red dot represents the new observation with the predicted y value

- the yellow dots represent the 3-nearest neighbors of the new observation (in red)

Let’s recap — the prediction phase consists of the following steps:

- For one given new observation, calculate the distance between this new observation and all the observations in the training dataset.

- Identify the k observations that have the shortest distance. In Excel, we will use the filter to order manually the training dataset. Or we can use RANK (and a indicator column) to get the top k observations.

- Calculate the predicted value, by calculating the average value of the target variable, by using SUMPRODUCT.

Prediction for an interval of new observations

In the sheet “algo1D f” (f for final), I plotted the prediction for a list of new observations, ranging from 1 to 17.

With a programming language, we could do it easily in a loop, and for a larger number of new observations, so the representation could be denser.

With Excel, I manually repeated the following steps:

- input a value for x

- order the distance column

- copy-paste the prediction

Effect of the hyperparameter k

The hyperparameter that is used in k-NN is the number of neighbors that we take into account for the calculation of average value.

We usually use this following graph to explain how a model can be underfitted, or overfitted.

In our case, if k is small, there can be a risk of overfitting.

If k is large, there can be risk of underfitting.

The extreme case of very large k is that k can be the total number of the training dataset. And the value of the prediction will be the same for every new observation: it is the global average.

So, we can say that k-NN improves the idea of predicting with a calculation of the average value with a few observations that are close to the new observation.

k-NN with Two Continuous Features

Now, we will study the case of two continuous feature variables x1 and x2. And we will only talk about the differences with the previous situation of one feature variable.

Two continuous feature variables dataset

When we have two feature variables, I cannot plot in 3D with Excel, so the plot contains only x1 as x-axis, and x2 as y-axis.

So don’t be confused with the previous dataset, for which y-axis represents the target value y.

Prediction with the Euclidean distance

Now that we have two features, we have to take both of them into account.

One usual distance we can use is the Euclidean Distance.

Then we can use the same process to the top k observations that have the minimum distance with the new observation.

To get a visual plot, we can use the same colors

- Blue for training dataset

- Red for the new observation

- Yellow for the found k nearest neighbors

Impact of the scale of the variables

When you have two features, one question that we can ask is the impact of the scale of the feature for the result of prediction.

First, let’s see this simple example, I multiplied the feature x2 by 10.

Will this scaling impact the predictions? The answer is of course yes.

And we can easily compare them, as in the following image.

It is easy enough to understand that the Euclidean Distance sums the squared difference of the features, regardless of their scales.

As a result, the feature that has a large scale will dominate the distance.

When it comes to feature scaling, one common operation is standardization (also called centering and reduction) or min–max scaling. The idea is to place all features on a comparable scale.

BUT, let’s think about this situation: what if one feature is expressed in dollars, and the other in yen.

In the real world, the correct relation between the two scales is about 1 dollar = 156 yen (as of November 2025). We know this because we understand the meaning of the units.

How would the model know this? It DOES NOT.

The only hyperparameter is k, and the model does not adjust anything to correct for differences in units or scales. k-NN has no internal mechanism for understanding that two features have different units.

And this is only the begining of the problems…

k-NN with the California Housing dataset

Now, let’s finally use the real-world dataset of California Housing dataset.

With the one-feature dataset, we got the basic idea of how k-NN works. With two-feature dataset, we saw that the scale of features is important.

Now, with this real-world dataset, we will see that the heterogeneous nature of the features make the Euclidean distance meaningless.

We will see some other more important ideas when we use k-NN in practice.

Naive application of k-NN regressor

Since all features in this dataset are continuous, we can easily calculate the Euclidean Distance. And we define a number k, to calculate the average value of the target variable, here MedHouseVal.

In Excel, you can easily do that yourself. Or you can support me here and get all the files.

Notion of distance based on different features

I said that the previous application is naive, because if you look closer, you will see these problems:

MedInc (median income) is expressed in units of 10,000 USD. If we decide to express it in 100,000 USD or in 1,000 USD instead, the prediction will change, because k-NN is sensitive to the scale of the features. We saw this problem before.

Now, moreover, each feature has a different nature.

- MedInc is an amount of money (in dollars).

- HouseAge is an age in years.

- AveRooms is a count of rooms.

- Population is a number of persons.

- Latitude and longitude are geographic coordinates.

Therefore, the Euclidean distance is doomed.

Different types of distances

The most common choice is the Euclidean distance, but it is not the only one.

We can also use Manhattan distance when features represent grid-like movements, and Cosine distance when only the direction matters (as with text embeddings).

Each distance changes how “nearest” is defined, and therefore can change which neighbors KNN selects.

Depending on the data, other distances can be more appropriate.

For example, with latitude and longitude, we can use the real geographical distance (in meters) instead of a simple Euclidean distance on degrees.

In the California Housing dataset, this is especially useful because we have the exact latitude and longitude of each district.

However, once we try to combine these geographical distances with other variables (such as median income, number of rooms, or population), the problem becomes more complex, because the variables have very different natures and scales.

In the cartography renderings below, I used k-NN as a smoothing function to refine the values associated with different areas of Paris.

On the left, each area has only a single value, so from one quarter to its neighboring quarters, there can be a discontinuity of the variable.

On the right, k-NN allows me to estimate a value for every specific address by smoothing the information based on nearby areas.

Moreover, for indicators such as the proportion of certain professional categories, I also applied population-based weighting so that larger areas have a stronger influence in the smoothing process.

As a conclusion, when the situation allows it, choosing a more specific distance can help us better capture the underlying reality.

By linking the distance to the nature of the data, we can make k-NN much more meaningful: geographical distance for coordinates, cosine distance for embeddings, and so on. The choice of distance is not just a technical detail, it changes how the model “sees” the world and which neighbors it considers relevant.

How Categorical Features can be Modeled

You may hear that categorical features cannot be handled in k-NN models.

But this is not completely true.

k-NN can work with categorical variables as long as we can define a distance between two observations.

Many people will say: “just use one-hot encoding.”

Others mention label encoding, or ordinal encoding.

But these methods behave very differently in a distance-based model.

To make this clear, we will use another dataset: the diamond price dataset (CC BY 4.0 license), which contains several features such as carat, cut, color, and clarity.

For simplicity, we will use only carat (numerical) and clarity (categorical) to demonstrate a few results.

Predicting Prices with Carat

First, we will start with carat, since you probably know that the price of a diamond depends mainly on the size (carat) of the stone.

The graphic below shows how k-NN can find diamonds with similar sizes to estimate the price.

One-Hot Encoding for Clarity Feature

Now let us look at clarity.

Below is the table of categories with their meanings, and we apply one-hot encoding to transform each category into a binary vector.

| Clarity | Meaning |

| IF | Internally Flawless |

| VVS1 | Very Very Slightly Included 1 |

| VVS2 | Very Very Slightly Included 2 |

| VS1 | Very Slightly Included 1 |

| VS2 | Very Slightly Included 2 |

| SI1 | Slightly Included 1 |

| SI2 | Slightly Included 2 |

| I1 | Included 1 |

In this table, we see that for the new diamond with clarity VVS2, the nearest neighbors are all diamonds from the same clarity category.

The numerical feature carat has very little influence on the distance, whereas it is a more important feature, as you can see in the price column.

Key issue 1: all categories are equally distant

When using Euclidean distance on one-hot vectors:

- IF vs VVS1 → distance = √2

- IF vs SI2 → distance = √2

- IF vs I1 → distance = √2

Every different category is at exactly the same distance.

This does not reflect the real diamond grading scale.

Key issue 2: scaling problem with continuous variables

Because we combine one-hot clarity with carat (a continuous feature), we face another problem:

- carat values in our example are below 1

- clarity vectors have differences of √2 → clarity dominates the distance calculation

So even small changes in clarity overpower the effect of carat.

This is exactly the same scaling issue we face with multi-continuous features, but even stronger.

Ordinal Encoding for Clarity

Now we can try encoding the Clarity feature with numerical labels. But instead of using the classic labels 1, 2, 3… we use expert-based labels that reflect the real grading scale.

The idea is to translate the clarity levels into values that behave more like a continuous feature, similar to carat, even if clarity is not strictly continuous.

With this expert-based encoding, the distances become more meaningful.

Carat and clarity are now on comparable scales, so neither feature completely dominates the distance calculation.

So, we obtain a better balance between size and clarity when selecting neighbors, which gives more realistic predictions.

Conclusions

In conclusion, the k-NN regressor is a highly non-linear, local estimator. It is so local that only the K closest observations are actually used.

After implementing k-NN regressor in Excel, I think that we can really ask this question: Is the k-NN regressor really a Machine Learning model?

- There is no model training

- When predicting, the selection of the neighbor observations does not depend on the value of target variable

But, it is so easy to understand, that with Excel, we can just implement the whole algorithm. Moreover, we can adjust the distance as we wish.

Businesspeople can see the idea directly: to predict a value, we look at similar observations.

The actual problems with k-NN, and all models that are based on distance:

- the scale of the features

- the heterogeneous nature of features, which makes the sum meaningless

- the specific distance that should be defined in concrete situations

- for categorical features, label/ordinal encoding could be optimized if we could find the optimal scaling.

So in short, the problem is the scaling of the features. We may think that they can be tuned as hyperparameters, but then the tuning would require so much time.

We will see later that it is exactly the motivation behind another family of models.

Here, the notion of scale is also equivalent to the notion of feature importance, because in k-NN the importance of each feature is defined before using the model.

So this is only the beginning of our journey. We will discover together other models that can do better, from this simple model, by improving in different directions: feature scaling, from distance to probability, splitting to better model each category…