We manage over a hundred influencer partnerships at Zapier. YouTube creators, LinkedIn voices, X personalities—each publishing content on their own schedule, driving views, clicks, and (hopefully) conversions back to Zapier.

We track all of it in a Zapier Table: creator name, platform, content title, sponsorship cost, publish date, views, Bitly clicks, conversions, upgrades, and even LLM citation data for when a creator’s video starts showing up in AI search results.

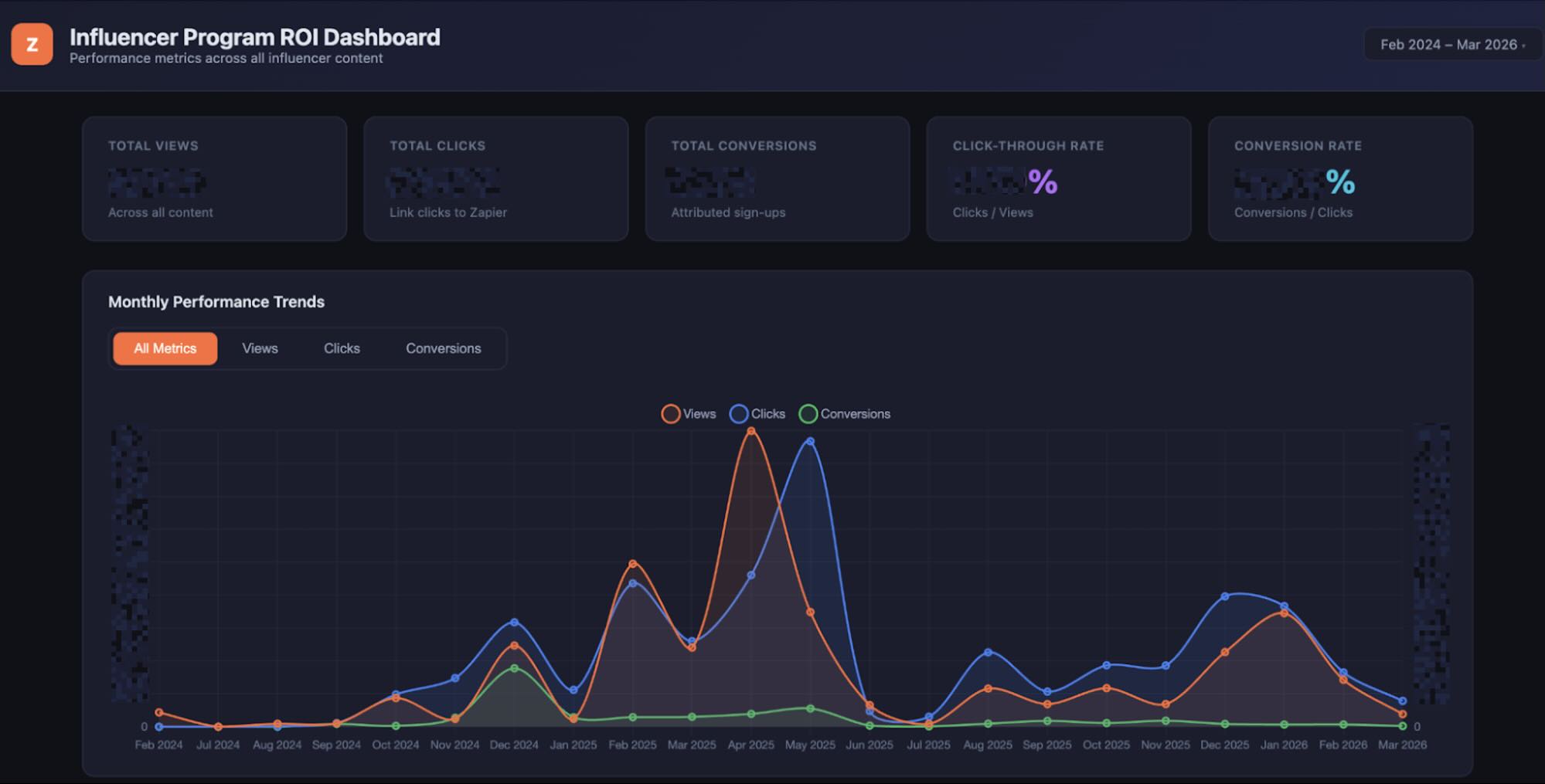

The table works great for data storage, and it’s a really powerful asset by itself. It’s full of Zaps that are constantly scraping or pinging for data, for example. But staring at a hundred-plus rows of raw data every week wasn’t giving me the story. I needed to see trends: which creators are actually moving the needle, which topics are gaining traction, and whether views and conversions are tracking together or diverging.

I needed a visual layer on top of the data: something interactive that I could filter by month, creator, and each individual metric. And I didn’t want to wait for anyone else to build it, so I turned to Claude Code.

How I built the dashboard using Zapier Tables data

The core of the project is a data pipeline that’s pretty simple in concept. A Zapier Table holds every influencer content record. A Zap runs on a schedule, pulls that table data, and writes it to a JSON file.

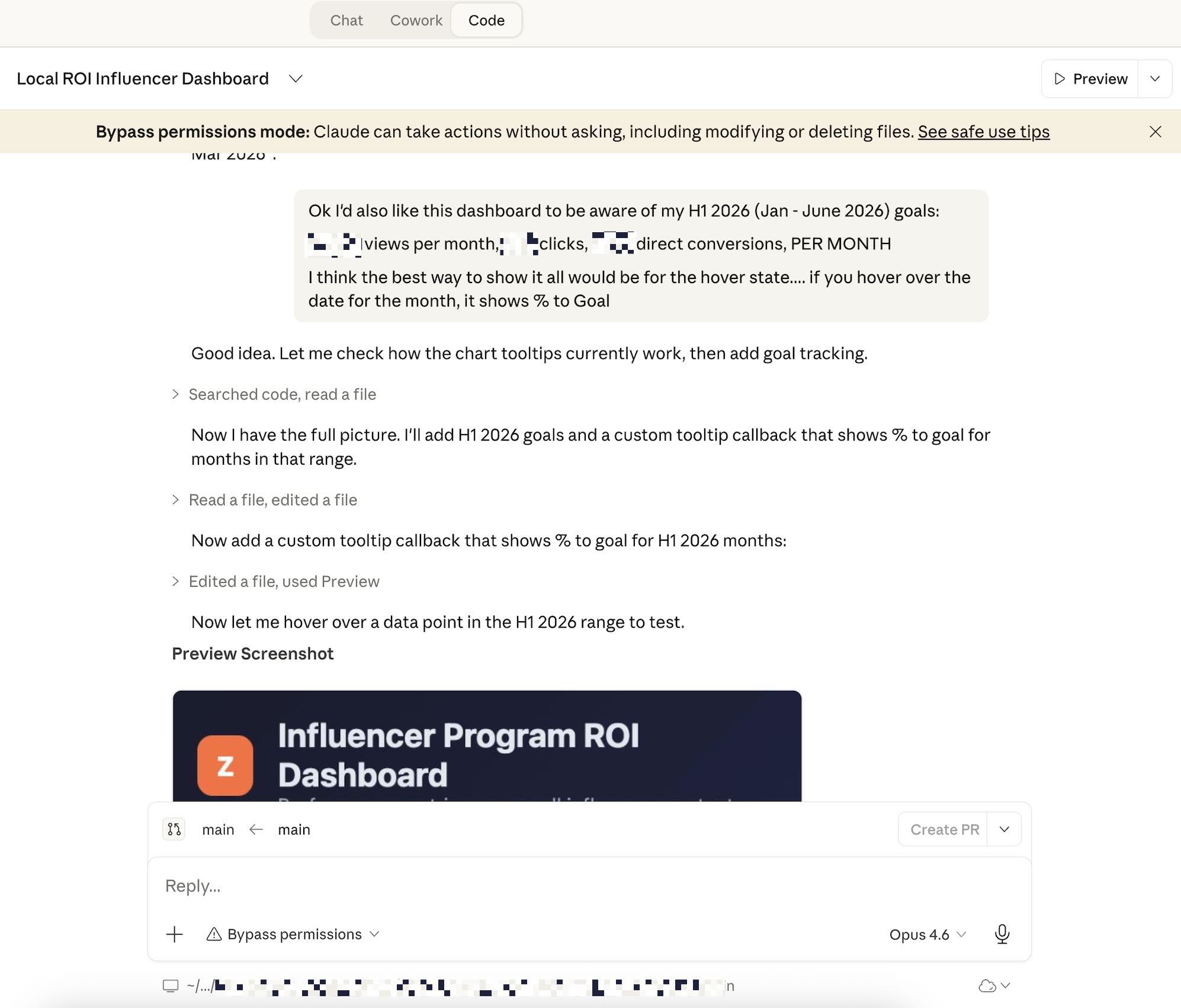

I gave Claude Code the JSON schema and described what I wanted, a single-page dashboard with:

- Time-series charts showing views, clicks, and conversions over time
- A sortable table of top creators
- Filters for date range, individual creators, and metric type

My early prompts were broad and directional. I described the end state, pointed Claude Code at the data structure, and let it propose an architecture.

Then I got specific: I wanted views and clicks on the same axis to show how they move in tandem, with conversions on a secondary axis because they’re orders of magnitude smaller. I wanted the creator table to be sortable by any column. I wanted month-level filtering so I could isolate February and see what’s trending right now versus what’s dominated all-time.
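The month-level filter and the chart series both come down to grouping records by month. Here is a minimal Python sketch of that idea. The field names (`publish_date`, `views`, `clicks`, `conversions`) and the sample rows are assumptions for illustration, not the real table schema or data.

```python
from collections import defaultdict

# Hypothetical records; field names and values are illustrative only.
records = [
    {"creator": "Creator A", "publish_date": "2025-01-14", "views": 1200, "clicks": 90, "conversions": 4},
    {"creator": "Creator B", "publish_date": "2025-02-03", "views": 5000, "clicks": 310, "conversions": 12},
    {"creator": "Creator A", "publish_date": "2025-02-20", "views": 800, "clicks": 40, "conversions": 1},
]

METRICS = ("views", "clicks", "conversions")


def filter_by_month(rows, month):
    """Keep only rows published in the given YYYY-MM month."""
    return [row for row in rows if row["publish_date"].startswith(month)]


def monthly_totals(rows):
    """Sum each metric per YYYY-MM month, ordered chronologically."""
    totals = defaultdict(lambda: dict.fromkeys(METRICS, 0))
    for row in rows:
        month = row["publish_date"][:7]  # "2025-02-03" -> "2025-02"
        for metric in METRICS:
            totals[month][metric] += row[metric]
    return dict(sorted(totals.items()))


february = filter_by_month(records, "2025-02")
series = monthly_totals(records)
```

In a chart, `views` and `clicks` from `series` would share the primary axis, and `conversions` would go on the secondary axis.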

What the first working version of the dashboard revealed

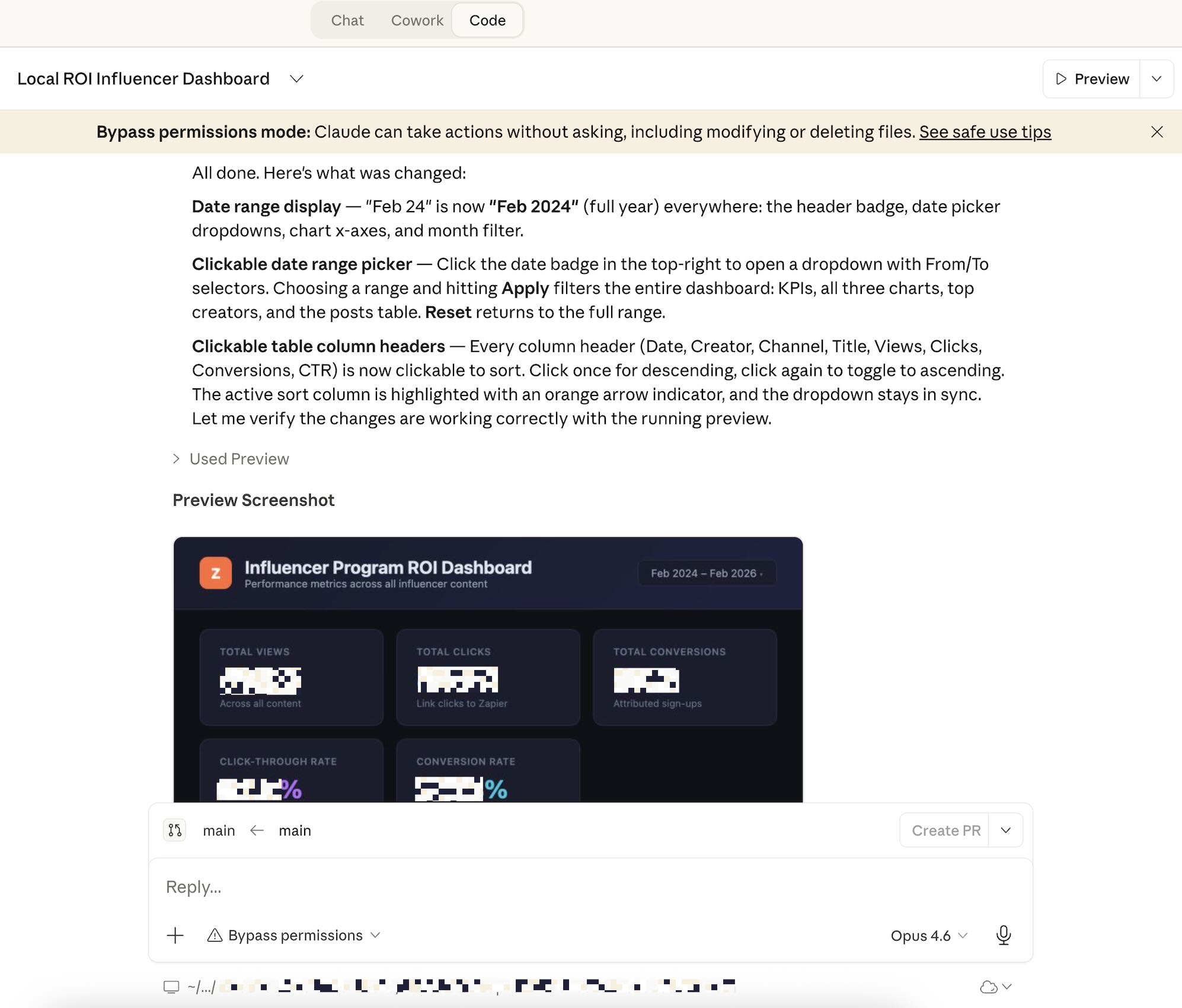

The first version came together faster than I expected. Claude Code scaffolded a working HTML page with visualizations, a data table, and basic filtering.

The time-series chart immediately showed the pattern I’d suspected but hadn’t been able to see clearly in the table: views and clicks really do move in tandem, and conversions follow the same shape at a much lower amplitude. That alone was worth the effort.

But the first version also had the kinds of issues you learn to expect. The date filtering wasn’t quite right: I wanted to be able to zoom in to a month view or a custom time range with a single click, so I asked for that.

There were other small things, too: the creator table didn’t sort correctly on numeric columns, and the chart legend overlapped with the data on smaller screens. Standard stuff, but the kind of thing that would make me look sloppy if I shared it with a stakeholder.

What surprised me was how quickly the iteration loops went. I’d describe the bug, Claude Code would identify the root cause, and the fix would land in one or two exchanges. Take the numeric sorting issue: I said the table was sorting “100” before “2,000” and Claude Code immediately recognized the string-vs-number comparison problem and rewrote the sort function.
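To show the kind of fix involved, here is a small Python sketch. The dashboard is an HTML page, so the actual sort function was presumably not Python; the helper names and sample rows below are hypothetical. The idea is to turn display strings like "2,000" into numbers before comparing them.

```python
def to_number(value):
    """Convert a display string such as "2,000" to an int; pass numbers through."""
    if isinstance(value, (int, float)):
        return value
    return int(value.replace(",", ""))


def sort_rows(rows, column, descending=True):
    """Sort table rows by a numeric column that may hold formatted strings."""
    return sorted(rows, key=lambda row: to_number(row[column]), reverse=descending)


# Hypothetical rows where the views column arrived as formatted text.
rows = [
    {"creator": "A", "views": "100"},
    {"creator": "B", "views": "2,000"},
    {"creator": "C", "views": "300"},
]

# Comparing the raw strings orders them character by character,
# which puts "2,000" ahead of "300".
as_text = sorted(row["views"] for row in rows)

# Comparing parsed numbers gives the order a reader expects.
by_views = [row["creator"] for row in sort_rows(rows, "views")]
```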

The more interesting moments came when I pushed beyond the initial spec. I wanted to be able to click into a specific creator and see their content performance over time, not just their aggregate numbers. I also wanted to see all-time top videos ranked by conversions, so I could spot outliers. And I found one: a Kevin Stratvert tutorial on Zapier AI for beginners whose conversions far outpaced everything else.

When I dug into why, the answer was straightforward: that video owns the top Google SERP position and the Google AI overview for its keyword. It’s a high-intent, low-funnel search term, and Kevin won it. The dashboard surfaced that story in a way the table never would have.

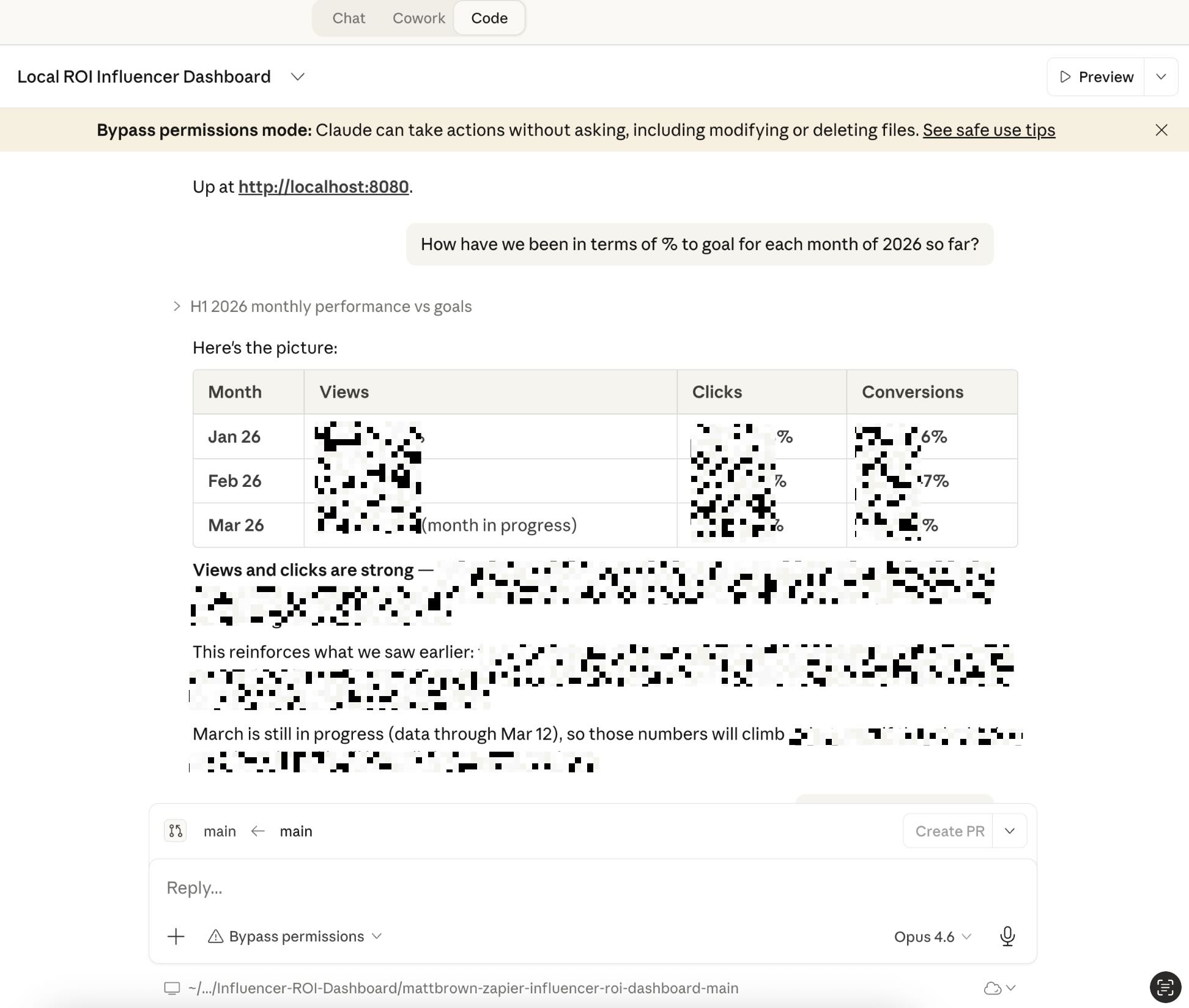

After that, I knew the dashboard would be able to answer more questions. Is Kevin’s dominance in conversions a function of his audience size, or is something else going on? Are views and conversions really correlated, or are some creators great at eyeballs but bad at action? Is February’s spike in OpenClaw content translating to actual upgrades?

I could ask those questions directly in my Claude Code chat, too, and I’d get my answers.

Where the build process hit limitations and required course correction

Claude Code is an excellent data analyst, but it doesn’t always have the context to understand the underlying drivers of a trend.

For example, it flagged one creator as particularly strong, which seemed off to me. On closer inspection, I noticed that the creator had been reusing the same shortlink for every post, so each video was credited with the clicks from their whole portfolio of sponsored content.
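A check like this can be automated before any analysis runs. The sketch below is a hypothetical Python example: the `shortlink` field name and the sample URLs are assumptions. It lists any shortlink attached to more than one record so the shared clicks can be reviewed by hand.

```python
from collections import Counter

# Hypothetical records; the shortlink field and URLs are illustrative only.
records = [
    {"creator": "Creator A", "title": "Video 1", "shortlink": "https://example.com/a"},
    {"creator": "Creator A", "title": "Video 2", "shortlink": "https://example.com/a"},
    {"creator": "Creator B", "title": "Video 3", "shortlink": "https://example.com/b"},
]


def reused_shortlinks(rows):
    """Return each shortlink that appears on more than one record, with its count."""
    counts = Counter(row["shortlink"] for row in rows)
    return {link: count for link, count in counts.items() if count > 1}


suspect = reused_shortlinks(records)
```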

Other times, it noticed a true outlier (like Kevin’s tutorial on Zapier AI for beginners) but couldn’t explain it, so it would guess. Maybe it was the topic, maybe it was the video duration. It took me clicking around the video and Google to understand that he had won a powerful low-funnel Zapier SERP, and that’s how the video got so many conversions.

Another example: early on, I asked it to “highlight outliers,” and it flagged records based on a simple standard-deviation threshold that wasn’t useful for this dataset.

The distribution of influencer performance is deeply skewed—a handful of creators drive most of the results—and a statistical cutoff doesn’t capture that. I had to rethink the prompt and describe what I actually meant by “outlier” in context: content that over-indexes on conversions relative to its view count, not content that’s simply high on any single metric.
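One way to express that definition in code is to compare each video's conversion rate with the rate across the whole dataset. This Python sketch is an illustration under assumptions, not the logic the dashboard actually uses: the `multiple` and `min_views` thresholds, the field names, and the sample rows are all hypothetical.

```python
def over_indexing(rows, multiple=2.0, min_views=500):
    """Flag content whose conversions-per-view is at least `multiple` times
    the overall rate, skipping rows with too few views to judge."""
    total_views = sum(row["views"] for row in rows)
    total_conversions = sum(row["conversions"] for row in rows)
    if total_views == 0 or total_conversions == 0:
        return []
    baseline = total_conversions / total_views

    flagged = []
    for row in rows:
        if row["views"] < min_views:
            continue  # tiny denominators make the rate unreliable
        rate = row["conversions"] / row["views"]
        if rate >= multiple * baseline:
            flagged.append((row["title"], rate / baseline))
    # Strongest over-indexers first.
    return sorted(flagged, key=lambda item: item[1], reverse=True)


# Hypothetical rows: Video A has the most conversions, but Video B converts
# far more per view, which is the kind of outlier described above.
rows = [
    {"title": "Video A", "views": 50000, "conversions": 50},
    {"title": "Video B", "views": 4000, "conversions": 40},
    {"title": "Video C", "views": 20000, "conversions": 10},
    {"title": "Video D", "views": 100, "conversions": 2},
]

flagged_titles = [title for title, _ in over_indexing(rows)]
```

Because it compares rates and not raw totals, a skewed distribution with a few very large creators does not swamp the result the way a standard-deviation cutoff on a single metric can.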

There were also moments where Claude Code over-built. I asked for a simple month filter and got a full date-range picker with start and end dates, custom presets, and a “clear” button that didn’t work correctly. I had to strip it back and tell it: just give me a dropdown of months. Sometimes the simplest prompt gets the best result, and Claude Code’s instinct to impress can work against you.

The data refresh workflow was another friction point. The dashboard pulls its data from a file that’s automatically kept up to date by a Zap. But Claude Code doesn’t know that; it only sees the file. So when I asked it to keep the data current, it tried to build a whole new system to fetch and update the data, not realizing that part was already taken care of. I just needed the dashboard to display a “last updated” timestamp from the JSON metadata. That one took a couple of rounds of redirecting before Claude Code understood the constraint.
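Reading a timestamp out of the file is a much smaller job than building a refresh system. Here is a Python sketch of it. The JSON layout (a `metadata.last_updated` key holding an ISO 8601 timestamp with a UTC offset) is an assumption for illustration; the real file may be structured differently.

```python
import json
from datetime import datetime, timezone

# Hypothetical payload standing in for the file the Zap writes.
payload = json.loads(
    '{"metadata": {"last_updated": "2025-02-24T09:30:00+00:00"}, "records": []}'
)


def last_updated_label(data):
    """Build a display label from the assumed metadata.last_updated field."""
    raw = data.get("metadata", {}).get("last_updated")
    if raw is None:
        return "Last updated: unknown"
    # Assumes the timestamp carries an offset, so converting to UTC is safe.
    stamp = datetime.fromisoformat(raw).astimezone(timezone.utc)
    return f"Last updated: {stamp:%Y-%m-%d %H:%M} UTC"


label = last_updated_label(payload)
```

The dashboard only reads the value. The Zap stays the single place where data is fetched and written.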

Why I’ll keep using Claude Code as a marketer

The dashboard went from concept to working prototype in a fraction of the time it would have taken me to build from scratch or spec out for a developer. I’m still planning to add LLM citation tracking to the visualizations, and the filtering UX could use another pass, but the first version is genuinely useful. I already use it weekly to brief my team on which creators, channels, and topics are driving results and where we should be shifting investment. Anthropic even featured this workflow in a Q&A with the Zapier marketing team.

To make an AI coding tool like Claude Code work, you need to be specific enough to steer it and knowledgeable enough to catch its mistakes. But if you bring those two things, it’s a remarkable accelerator and a great way to get to an artifact much faster.

Next up, I’m expanding this into a full Creator Hub—tracking contract status, surfacing topic recommendations, and centralizing feedback alongside performance data.
