Last May, Zapier announced a minimum AI fluency bar for every new hire and launched V1 of Zapier’s AI Fluency Rubric. This was part of a larger talent investment to move from ad hoc AI adoption to embedded AI across every aspect of how we work.

Over the past year, we assessed every candidate on our initial AI Fluency Rubric. We rebuilt new-hire onboarding to emphasize identifying opportunities, building AI-powered workflows, and embracing a “builder mindset” from day one. We expanded learning programs, grew an already-long list of approved AI tools, and extended the vision for our Employee Resource Groups (ERGs) to include product-based training and community-led experimentation, creating more pathways to build with AI across all roles. Our performance expectations have also evolved to include how we elevate our work with AI.

Since then, AI usage at Zapier has grown to 100% adoption, as teams across every function have moved from personal experimentation to redesigning teams and workflows with an AI-first lens.

Our understanding of what is needed for Zapier to keep leading in this environment—and helping our customers do the same—has evolved.

This V2 rubric reflects what we’ve learned, and raises the bar for what we expect from new hires on day one.

How we measure AI fluency at Zapier

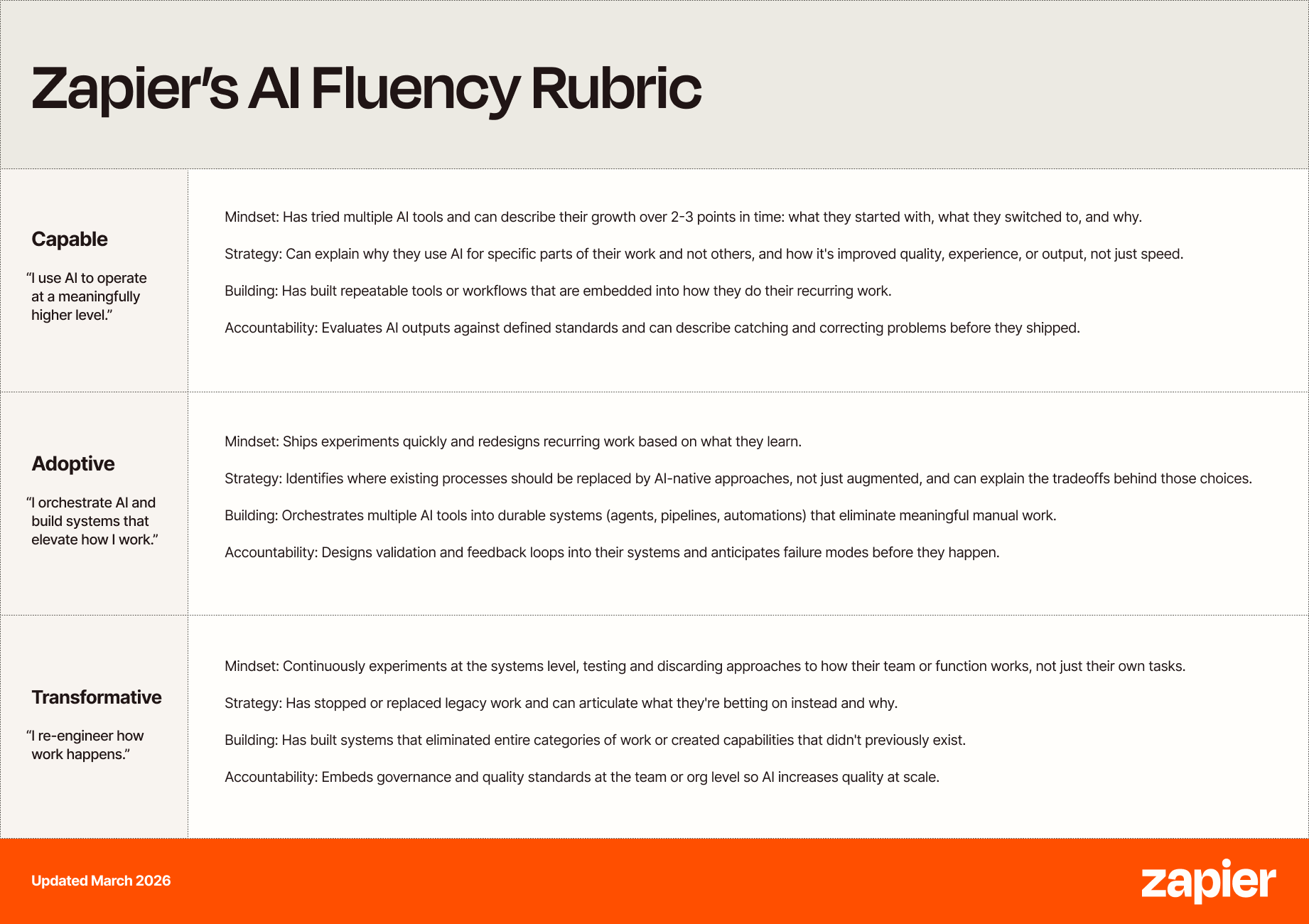

We map AI fluency skills across four levels, keeping in mind that these skills vary by role:

We’ve built in four consistent moments across the candidate journey to measure AI fluency:

Evaluating AI skills through screenings, async exercises, and live interviews allows AI fluency signals to compound across stages. Importantly, we’re boosting support for candidates to prepare for this higher expectation.

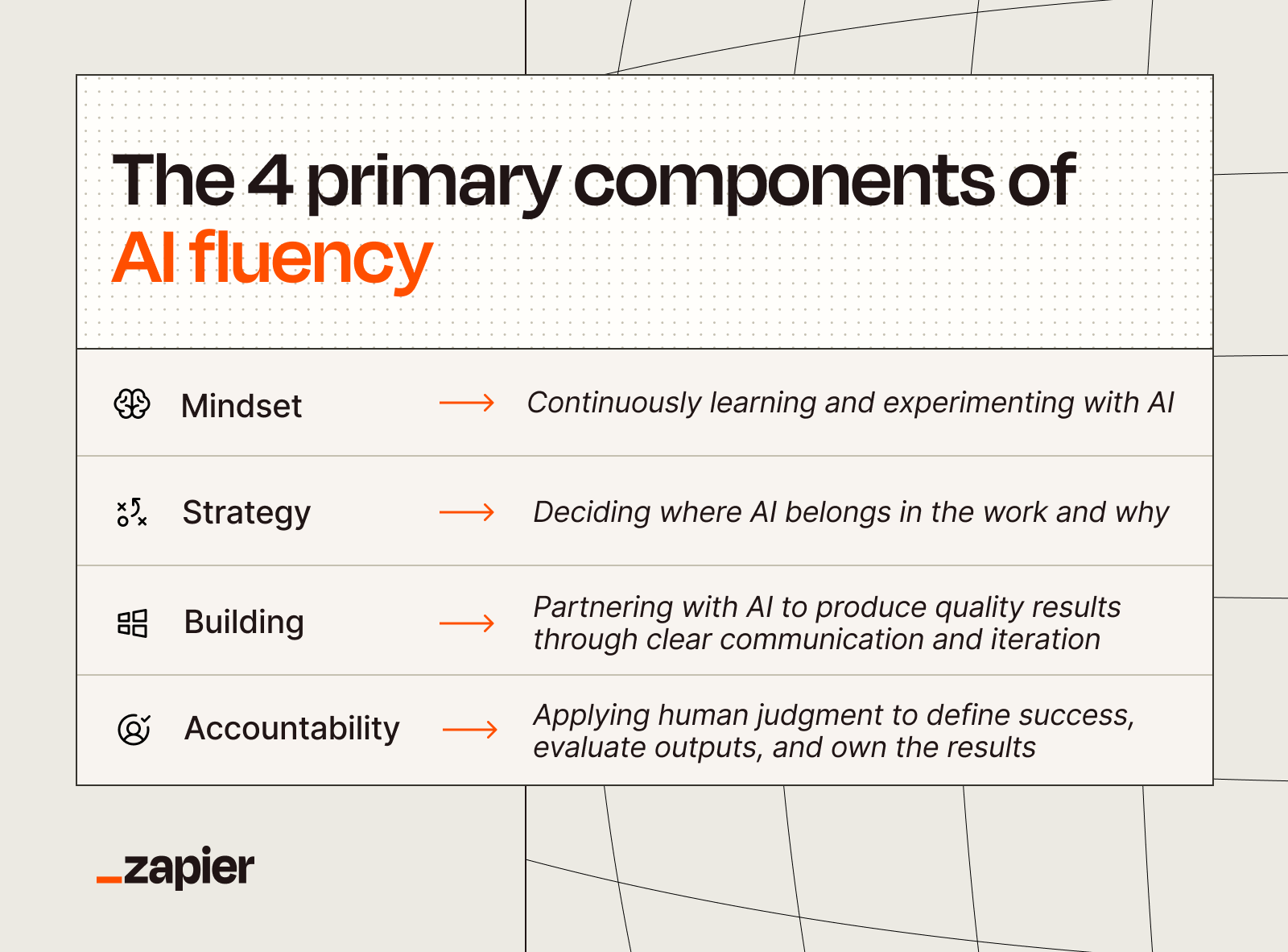

Across these touchpoints, we assess four components: AI mindset, strategy, building, and accountability.

What’s changing in our V2 AI Fluency Rubric

1. Raising the minimum bar (“Capable”) for new hires

Until now, Capable meant you’d used AI with purpose and could describe the impact. To meet our new minimum bar, candidates will need to clearly show:

- AI embedded into their core work
- Repeatable systems, not one-off prompts
- Clear impact on quality, efficiency, or related outcomes

If someone isn’t meaningfully improving their work with the support of AI, they don’t meet the bar.

Here are a few concrete examples of what that bar looks like, broken down by department.

2. Assessing AI fluency slope, not a snapshot

Where someone is today on AI fluency matters. How they got there—the “slope” of their AI fluency journey—matters even more, because it gives us a better signal on where they’ll be in six months.

We now explicitly look for an AI fluency trendline: what did they start with, what did they try and abandon, how has their approach evolved? Someone who plateaued eight months ago on the same three tools is a different candidate than someone actively experimenting and building on what they’ve learned. The signal we’re looking for is forward momentum, including during the hiring process itself.

3. Adding accountability as an explicit fourth component of AI fluency

Zapier’s original AI fluency components were mindset, strategy, and building. We’ve added accountability as a fourth signal. As AI gets more capable, the risks of low-accountability AI use are growing. We need to hire people who define what “good” looks like before they start, evaluate outputs critically, catch what’s wrong before it ships, and own the outcomes of their AI workflows.

As we say: “With AI, you can delegate the work, but not the accountability.”

4. Requiring managers to demonstrate how they led teams to adopt AI

Individual contributors need to show AI embedded into their own work. Managers need to show that and more. A manager who is personally fluent but whose team is still doing things the old way doesn’t meet our bar. We’re looking for managers who:

- Create psychological safety for teams to experiment with AI
- Set clear expectations and make space for AI upskilling
- Model change management through real implementation
- Redesign workflows so AI meaningfully changes how the team’s work gets done

5. Redesigning skills tests to observe how people use AI in practice

Research from Anthropic’s AI Fluency Index shows that the people who demonstrate the strongest fluency signals are those who iterate and use AI as a thought partner, not those who simply reach for the most tools. That’s directly shaping how we assess candidates.

In our revamped skills tests, we observe candidates working with AI in real time. We want to see how they prompt, push back on an output, and adapt. A rough result with strong reasoning and real iteration is a better signal than a polished one with no visible process behind it.

What’s next

As AI technology and our expectations evolve, so will our bar.

As Zapier teammates continue to reimagine how we work with AI, we’ll keep sharing what we learn along the way.

Our aim is to unlock a new level of productivity, creativity, and innovation: a deeper transformation in how we operate, grow, and scale. We also think this matters for everyone, which is why we’ve committed to helping one million people take their first step in learning AI Automation.

As other teams navigate similar journeys, we’d love to learn from you.