This article is the third part of a series I decided to write on how to build a robust and stable credit scoring model over time.

The first article focused on how to construct a credit scoring dataset, while the second explored exploratory data analysis (EDA) and how to better understand borrower and loan characteristics before modeling.

In my final year at an engineering school, I worked on a credit scoring project for which a bank provided us with data about individual customers. In a previous article, I explained how this type of dataset is usually constructed.

The goal of the project was to develop a scoring model that could predict a borrower’s credit risk over a one-month horizon. As soon as we received the data, the first step was to perform an exploratory data analysis. In my previous article, I briefly explained why exploratory data analysis is essential for understanding the structure and quality of a dataset.

The dataset provided by the bank contained more than 300 variables and over one million observations, covering two years of historical data. The variables were both continuous and categorical. As is common with real-world datasets, some variables contained missing values, some had outliers, and others showed strongly imbalanced distributions.

Since we had little experience with modeling at the time, several methodological questions quickly came up.

The first question was about the data preparation process. Should we apply preprocessing steps to the entire dataset first and then split it into training, test, and OOT (out-of-time) sets? Or should we split the data first and then apply all preprocessing steps separately?

This question is important. A scoring model is built for prediction, which means it must be able to generalize to new observations, such as new bank customers. As a result, every step in the data preparation pipeline, including variable preselection, must be designed with this objective in mind.

Another question was about the role of domain experts. At what stage should they be involved in the process? Should they participate early during data preparation, or only later when interpreting the results? We also faced more technical questions. For example, should missing values be imputed before treating outliers, or the other way around?

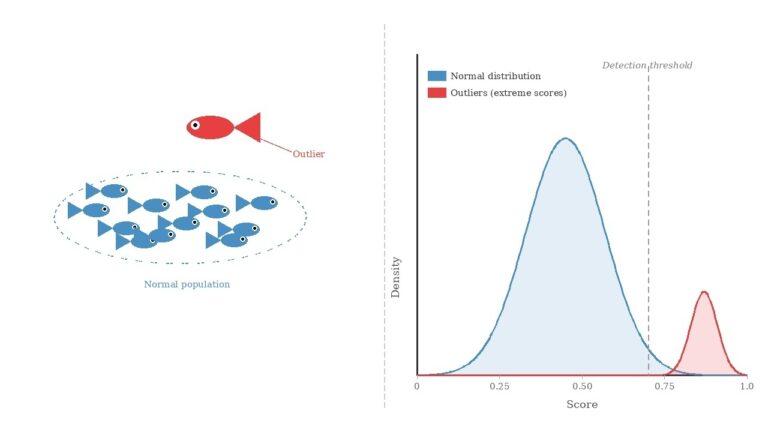

In this article, we focus on a key step in the modeling process: handling extreme values (outliers) and missing values. This step can sometimes also contribute to reducing the dimensionality of the problem, especially when variables with poor data quality are removed or simplified during preprocessing.

I previously described a related process in another article on variable preprocessing for linear regression. In practice, the way variables are processed often depends on the type of model used for training. Some methods, such as regression models, are sensitive to outliers and generally require explicit treatment of missing values. Other approaches can handle these issues more naturally.

To illustrate the steps presented here, we use the same dataset introduced in the previous article on exploratory data analysis. This dataset is an open-source dataset available on Kaggle: the Credit Scoring Dataset. It contains 32,581 observations and 12 variables describing loans issued by a bank to individual borrowers.

Although this example involves a relatively small number of variables, the preprocessing approach described here can easily be applied to much larger datasets, including those with several hundred variables.

Finally, it is important to remember that this type of analysis only makes sense if the dataset is high quality and representative of the problem being studied. In practice, data quality is one of the most critical factors for building robust and reliable credit scoring models.

In the following sections, we turn to a practical and essential step: handling outliers and missing values using a real credit scoring dataset.

Creating a Time Variable

Our dataset does not contain a variable that directly captures the time dimension of the observations. This is problematic because the goal is to build a prediction model that can estimate whether new borrowers will default. Without a time variable, it becomes difficult to clearly illustrate how to split the data into training, test, and out-of-time (OOT) samples. In addition, we cannot easily assess the stability or monotonic behavior of variables over time.

To address this limitation, we create an artificial time variable, which we call year.

We construct this variable using cb_person_cred_hist_length, which represents the length of a borrower’s credit history. This variable has 29 distinct values, ranging from 2 to 30 years. In the previous article, when we discretized it into quartiles, we observed that the default rate remained relatively stable across intervals, around 21%.

This is exactly the behavior we want for our year variable: a relatively stationary default rate, meaning that the default rate remains stable across different time periods.

To construct this variable, we make the following assumption. We arbitrarily suppose that borrowers with a 2-year credit history entered the portfolio in 2022, those with 3 years of history in 2021, and so on. For example, a value of 10 years corresponds to an entry in 2014. Finally, all borrowers with a credit history greater than or equal to 11 years are grouped into a single category corresponding to an entry in 2013.

This approach gives us a dataset covering the period from 2013 to 2022, that is, about ten years of history. This reconstructed timeline enables more meaningful train, test, and out-of-time splits when developing the scoring model. It also makes it possible to study the stability of the risk driver distributions over time.
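
The mapping described above can be sketched in a few lines of pandas. This is a minimal illustration on toy values, not the exact code used for the article; the constant 2024 simply follows from the stated convention (2 years of history corresponds to 2022).

```python
import pandas as pd

# Toy values standing in for the real column (illustrative only)
df = pd.DataFrame({"cb_person_cred_hist_length": [2, 3, 10, 11, 17, 30]})

# Group all histories of 11 years or more into a single category
capped_history = df["cb_person_cred_hist_length"].clip(upper=11)

# 2 years -> 2022, 3 years -> 2021, ..., 10 years -> 2014, 11+ years -> 2013
df["year"] = 2024 - capped_history

print(df)
```

Once `year` exists, a quick `df.groupby("year")` on the default flag is a natural way to check that the default rate stays roughly stable across the reconstructed periods.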

Training and Validation Datasets

This section addresses an important methodological question: should we split the data before performing data treatment and variable preselection, or after?

In practice, machine learning methods are commonly used to develop credit scoring models, especially when a sufficiently large dataset is available and covers the full scope of the portfolio. The methodology used to estimate model parameters must be statistically justified and based on sound evaluation criteria. In particular, we must account for potential estimation biases caused by overfitting or underfitting, and select an appropriate level of model complexity.

Model estimation should ultimately rely on its ability to generalize, meaning its capacity to correctly score new borrowers who were not part of the training data. To properly evaluate this ability, the dataset used to measure model performance must be independent from the dataset used to train the model.

In statistical modeling, three types of datasets are typically used to achieve this objective:

- Training (or development) dataset used to estimate and fit the parameters of the model.

- Validation / Test dataset (in-time) used to evaluate the quality of the model fit on data that were not used during training.

- Out-of-time (OOT) validation dataset used to assess the model’s performance on data from a different time period, which helps evaluate whether the model remains stable over time.

Other validation strategies are also commonly used in practice, such as k-fold cross-validation or leave-one-out validation.
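
As an illustration of k-fold cross-validation, here is a small sketch using scikit-learn's `StratifiedKFold` on synthetic data. The features and the 20% default rate are made up for the example; the point is only that each validation fold keeps the same default rate as the full sample.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 3))        # synthetic features
y = np.array([1] * 20 + [0] * 80)    # synthetic default flag (20% default rate)

skf = StratifiedKFold(n_splits=5, shuffle=True, random_state=42)

# Default rate observed in each validation fold
fold_default_rates = []
for train_idx, valid_idx in skf.split(X, y):
    fold_default_rates.append(y[valid_idx].mean())

print(fold_default_rates)
```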

Dataset Definition

In this section, we present an example of how to create the datasets used in our analysis: train, test, and OOT.

The development dataset (train + test) covers the period from 2013 to 2021. Within this dataset:

- 70% of the observations are assigned to the training set

- 30% are assigned to the test set

The OOT dataset corresponds to 2022.

```python
# Development sample (train + test): 2013 to 2021
train_test_df = df[df["year"] <= 2021].copy()

# Out-of-time (OOT) sample: 2022
oot_df = df[df["year"] == 2022].copy()

train_test_df.to_csv("train_test_data.csv", index=False)
oot_df.to_csv("oot_data.csv", index=False)
```

Preserving Model Generalization

To preserve the model’s ability to generalize, once the dataset has been split into train, test, and OOT, the test and OOT datasets must remain completely untouched during model development.

In practice, they should be treated as if they were locked away and only used after the modeling strategy has been defined and the candidate models have been trained. These datasets will later allow us to compare model performance and select the final model.

One important point to keep in mind is that all preprocessing steps applied to the training dataset must be replicated exactly on the test and OOT datasets. This includes:

- handling outliers

- imputing missing values

- discretizing variables

- and applying any other preprocessing transformations.
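
One way to make this replication explicit is to separate a "fit" step, which learns parameters from the training data, from an "apply" step, which reuses them unchanged on any other dataset. The sketch below is only an illustration of that pattern: the helper names `fit_preprocessing` and `apply_preprocessing` and the toy column `x` are hypothetical and not part of the article's code.

```python
import pandas as pd

def fit_preprocessing(train, var):
    """Learn clipping bounds and an imputation value from the training data only."""
    q1, q3 = train[var].quantile(0.25), train[var].quantile(0.75)
    iqr = q3 - q1
    lower, upper = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    median = train[var].clip(lower, upper).median()
    return {"lower": lower, "upper": upper, "median": median}

def apply_preprocessing(df, var, params):
    """Apply previously learned parameters without re-estimating anything."""
    out = df.copy()
    out[var] = out[var].clip(params["lower"], params["upper"]).fillna(params["median"])
    return out

# Toy data: one extreme value in train, one missing and one extreme value in test
train = pd.DataFrame({"x": [1.0, 2.0, 3.0, 4.0, 100.0]})
test = pd.DataFrame({"x": [0.0, None, 500.0]})

params = fit_preprocessing(train, "x")           # estimated on train only
test_prepared = apply_preprocessing(test, "x", params)
print(params)
print(test_prepared)
```

The test set never influences `params`, which is the property we want to preserve throughout the pipeline.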

Splitting the Development Dataset into Train and Test

To train and evaluate the different models, we split the development dataset (2013–2021) into two parts:

- a training set (70%)

- a test set (30%)

To ensure that the distributions remain comparable across these two datasets, we perform a stratified split. The stratification variable combines the default indicator and the year variable:

def_year = def + year

This variable allows us to preserve both the default rate and the temporal structure of the data when splitting the dataset.

Before performing the stratified split, it is important to first examine the distribution of the new variable def_year to verify that stratification is feasible. If some groups contain too few observations, stratification may not be possible or may require adjustments.
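
This feasibility check can be sketched as follows, here on a toy sample. The threshold `min_required` is an assumption chosen for illustration: scikit-learn's stratified split needs at least two observations per stratum, and in practice a much larger minimum is desirable.

```python
import pandas as pd

# Toy development sample: `def` is the default flag, `year` the reconstructed year
toy = pd.DataFrame({
    "def":  [0, 0, 0, 1, 0, 0, 1, 1],
    "year": [2020, 2020, 2020, 2020, 2021, 2021, 2021, 2021],
})
toy["def_year"] = toy["def"].astype(str) + "_" + toy["year"].astype(str)

# Number of observations in each stratum
group_sizes = toy["def_year"].value_counts()
print(group_sizes)

# Flag strata that are too small to be split (assumed threshold)
min_required = 2
too_small = group_sizes[group_sizes < min_required]
print("Strata below the threshold:", too_small.index.tolist())
```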

In our case, the smallest group defined by def_year contains more than 300 observations, which means that stratification is perfectly feasible. We can therefore split the dataset into train and test sets, save them, and continue the preprocessing steps using only the training dataset. The same transformations will later be replicated on the test and OOT datasets.

```python
from sklearn.model_selection import train_test_split

# Stratification key combining the default flag and the year
train_test_df["def_year"] = train_test_df["def"].astype(str) + "_" + train_test_df["year"].astype(str)

# 70% train / 30% test, stratified on def_year
train_df, test_df = train_test_split(
    train_test_df,
    test_size=0.3,
    random_state=42,
    stratify=train_test_df["def_year"],
)

# Save the datasets
train_df.to_csv("train_data.csv", index=False)
test_df.to_csv("test_data.csv", index=False)
oot_df.to_csv("oot_data.csv", index=False)
```

In the following sections, all analyses are performed using the training data.

Outlier Treatment

We begin by identifying and treating outliers, and we validate these treatments with domain experts. In practice, this step is easier for experts to assess than missing value imputation. Experts often know the plausible ranges of variables, but they may not always know why a value is missing. Performing this step first also helps reduce the bias that extreme values could introduce during the imputation process.

To treat extreme values, we use the IQR method (Interquartile Range method). This method is commonly used for variables that approximately follow a normal distribution. Before applying any treatment, it is important to visualize the distributions using boxplots and density plots.

In our dataset, we have six continuous variables. Their boxplots and density plots are shown below.

The table below presents, for each variable, the lower bound and upper bound, defined as:

Lower Bound = Q1 − 1.5 × IQR

Upper Bound = Q3 + 1.5 × IQR

where IQR = Q3 − Q1, and Q1 and Q3 are the first and third quartiles, respectively.

In this study, this treatment method is reasonable because it does not significantly alter the central tendency of the variables. To further validate this approach, we can refer to the previous article and examine which quantile ranges the lower and upper bounds fall into, and analyze the default rate of borrowers within these intervals.
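
To see how capping affects the central tendency, one can compare summary statistics before and after treatment. The sketch below uses a toy series with a single implausible value; the numbers are illustrative and do not come from the dataset.

```python
import pandas as pd

# Toy ages with one implausible value
s = pd.Series([21.0, 22.0, 23.0, 24.0, 25.0, 26.0, 27.0, 28.0, 30.0, 144.0],
              name="person_age")

q1, q3 = s.quantile(0.25), s.quantile(0.75)
iqr = q3 - q1
lower, upper = q1 - 1.5 * iqr, q3 + 1.5 * iqr

clipped = s.clip(lower, upper)

# Compare mean, median, and maximum before and after capping
summary = pd.DataFrame({
    "before": s.agg(["mean", "median", "max"]),
    "after": clipped.agg(["mean", "median", "max"]),
})
print(f"bounds: [{lower}, {upper}]")
print(summary)
```

In this toy case the median is unchanged by the capping, while the mean moves back toward the bulk of the distribution.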

When treating outliers, it is important to proceed carefully. The objective is to reduce the influence of extreme values without changing the scope of the study.

From the table above, we observe that the IQR method would cap the age of borrowers at 51 years. This result is acceptable only if the study population was originally defined with a maximum age of 51. If this restriction was not part of the initial scope, the threshold should be discussed with domain experts to determine a reasonable upper bound for the variable.

Suppose, for example, that borrowers up to 60 years old are considered part of the portfolio. In that case, the IQR method would not be appropriate for treating outliers in the person_age variable, because it would artificially truncate valid observations.

Two alternatives can then be considered. First, domain experts may specify a maximum plausible age, such as 100 years, which would define the acceptable range of the variable. Another approach is to use a technique called winsorization.

Winsorization follows a similar idea to the IQR method: it limits the range of a continuous variable, but the bounds are typically defined using extreme quantiles or expert-defined thresholds. A common approach is to restrict the variable to a range bounded by two extreme quantiles, for example the 1st and 99th percentiles.

Observations falling outside this restricted range are then replaced by the closest boundary value (the corresponding quantile or a value determined by experts).

This approach can be applied in two ways:

- Unilateral winsorization, where only one side of the distribution is capped.

- Bilateral winsorization, where both the lower and upper tails are truncated.

For example, with a lower bound of €6 and an upper bound of €950, all observations with values below €6 are replaced with €6 for the variable of interest. Similarly, all observations with values above €950 are replaced with €950.
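
A minimal sketch of quantile-based winsorization is given below. The helper `winsorize`, the toy column `amount`, and the choice of the 1st and 99th percentiles are assumptions made for illustration. As with every other step, the bounds are estimated on the training data and then applied to the other dataset.

```python
import pandas as pd

def winsorize(train, other, var, lower_q=None, upper_q=None):
    """Cap `var` using quantiles estimated on `train` only.

    Pass None for `lower_q` or `upper_q` to leave that side uncapped
    (unilateral winsorization); pass both for bilateral winsorization.
    """
    lower = train[var].quantile(lower_q) if lower_q is not None else None
    upper = train[var].quantile(upper_q) if upper_q is not None else None
    train_capped = train[var].clip(lower=lower, upper=upper)
    other_capped = other[var].clip(lower=lower, upper=upper)
    return train_capped, other_capped, (lower, upper)

# Toy data: amounts 1..100 in train, three new observations in `other`
train = pd.DataFrame({"amount": [float(v) for v in range(1, 101)]})
other = pd.DataFrame({"amount": [0.0, 50.0, 1000.0]})

# Bilateral: cap both tails at the 1st and 99th percentiles of train
_, other_bilateral, bounds = winsorize(train, other, "amount", 0.01, 0.99)

# Unilateral: cap only the upper tail
_, other_upper_only, _ = winsorize(train, other, "amount", upper_q=0.99)

print(bounds)
print(other_bilateral.tolist())
print(other_upper_only.tolist())
```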

We compute the 90th, 95th, and 99th percentiles of the person_age variable to confirm whether the IQR method is appropriate. If not, we would use the 99th percentile as the upper bound for a winsorization approach.

In this case, the 99th percentile is equal to the IQR upper bound (51). This confirms that the IQR method is appropriate for treating outliers in this variable.
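
The comparison between the upper percentiles and the IQR bound can be sketched as follows, on toy ages. The decision rule at the end is one possible reading of the check described above, stated here as an assumption: keep the IQR bound when it does not fall below the 99th percentile, otherwise fall back to the 99th percentile.

```python
import pandas as pd

# Toy ages from 20 to 69 (illustrative only)
ages = pd.Series([float(a) for a in range(20, 70)], name="person_age")

# Upper percentiles of interest
percentiles = ages.quantile([0.90, 0.95, 0.99])
p99 = ages.quantile(0.99)

# IQR upper bound
q1, q3 = ages.quantile(0.25), ages.quantile(0.75)
iqr_upper = q3 + 1.5 * (q3 - q1)

# Assumed rule: the IQR cap is acceptable if it affects at most the top 1%
upper_bound = iqr_upper if iqr_upper >= p99 else p99

print(percentiles)
print("IQR upper bound:", iqr_upper, "| chosen upper bound:", upper_bound)
```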

```python
import pandas as pd

def apply_iqr_bounds(train, test, oot, variables):
    """Compute IQR bounds on the training set and cap all three datasets with them."""
    train = train.copy()
    test = test.copy()
    oot = oot.copy()

    bounds = []
    for var in variables:
        # Bounds are estimated on the training data only
        Q1 = train[var].quantile(0.25)
        Q3 = train[var].quantile(0.75)
        IQR = Q3 - Q1
        lower = Q1 - 1.5 * IQR
        upper = Q3 + 1.5 * IQR

        bounds.append({
            "Variable": var,
            "Lower Bound": lower,
            "Upper Bound": upper
        })

        # Apply the same bounds to train, test, and OOT
        for df in [train, test, oot]:
            df[var] = df[var].clip(lower, upper)

    bounds_table = pd.DataFrame(bounds)
    return bounds_table, train, test, oot

# `variables` is the list of continuous variables to treat
bounds_table, train_clean_outlier, test_clean_outlier, oot_clean_outlier = apply_iqr_bounds(
    train_df,
    test_df,
    oot_df,
    variables
)
```

Another approach that can often be useful when dealing with outliers in continuous variables is discretization, which I will discuss in a future article.

Imputing Missing Values

The dataset contains two variables with missing values: loan_int_rate and person_emp_length. In the training dataset, the distribution of missing values is summarized in the table below.

The fact that only two variables contain missing values allows us to analyze them more carefully. Instead of immediately imputing them with a simple statistic such as the mean or the median, we first try to understand whether there is a pattern behind the missing observations.

In practice, when dealing with missing data, the first step is often to consult domain experts. They may provide insights into why certain values are missing and suggest reasonable ways to impute them. This helps us better understand the mechanism generating the missing values before applying statistical tools.

A simple way to explore this mechanism is to create indicator variables that take the value 1 when a variable is missing and 0 otherwise. The idea is to check whether the probability that a value is missing depends on the other observed variables.
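
As a rough sketch of this idea, the snippet below builds a missingness indicator on toy data and compares another variable across the two groups. The values are invented for illustration; on the real data, the same comparison would be repeated for each observed variable.

```python
import numpy as np
import pandas as pd

# Toy data: employment length is missing for two borrowers
toy = pd.DataFrame({
    "person_emp_length": [1.0, np.nan, 5.0, np.nan, 3.0, 8.0],
    "person_income": [30000, 20000, 60000, 25000, 45000, 80000],
})

# Indicator: 1 when the value is missing, 0 otherwise
toy["person_emp_length_missing"] = toy["person_emp_length"].isna().astype(int)

# Compare the median income of the two groups
comparison = toy.groupby("person_emp_length_missing")["person_income"].median()
print(comparison)
```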

Case of the Variable person_emp_length

The figure below shows the boxplots of the continuous variables depending on whether person_emp_length is missing or not.

Several differences can be observed. For example, observations with missing values tend to have:

- lower income compared with observations where the variable is observed,

- smaller loan amounts,

- lower interest rates,

- and higher loan-to-income ratios.

These patterns suggest that the missing observations are not randomly distributed across the dataset. To confirm this intuition, we can complement the graphical analysis with statistical tests, such as:

- Kolmogorov–Smirnov or Kruskal–Wallis tests for continuous variables,

- the chi-square test of independence for categorical variables, with Cramér’s V used to measure the strength of the association.

These analyses would typically show that the probability of a missing value depends on the observed variables. This mechanism is known as MAR (Missing At Random).
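
The tests mentioned above can be run with SciPy. The sketch below uses synthetic data only, so the numbers say nothing about the real dataset: it compares a continuous variable between the "observed" and "missing" groups with a two-sample Kolmogorov–Smirnov test, then tests the association between the missingness flag and a two-level categorical variable with a chi-square test, from which Cramér's V is derived. The category labels are placeholders.

```python
import numpy as np
import pandas as pd
from scipy.stats import ks_2samp, chi2_contingency

rng = np.random.default_rng(0)

# Synthetic incomes for rows where the variable is observed vs. missing
income_observed = rng.normal(60_000, 10_000, size=200)
income_missing = rng.normal(40_000, 10_000, size=200)

# Two-sample Kolmogorov-Smirnov test on the continuous variable
ks_result = ks_2samp(income_observed, income_missing)
print("KS p-value:", ks_result.pvalue)

# Synthetic contingency table: missingness flag (rows) by category (columns)
table = pd.DataFrame([[30, 70], [60, 40]],
                     index=["observed", "missing"],
                     columns=["RENT", "OWN"])
chi2, p_value, dof, expected = chi2_contingency(table, correction=False)

# Cramér's V: effect size derived from the chi-square statistic
n = table.to_numpy().sum()
cramers_v = np.sqrt(chi2 / (n * (min(table.shape) - 1)))
print("Chi-square p-value:", p_value, "| Cramér's V:", round(cramers_v, 3))
```

A small p-value indicates that missingness is related to the observed variable, which is consistent with a MAR mechanism rather than MCAR.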

Under MAR, several imputation methods can be considered, including machine learning approaches such as k-nearest neighbors (KNN).
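
For readers who want to try KNN imputation, here is a minimal sketch based on scikit-learn's `KNNImputer`, fitted on the training data and then applied to the test data. The toy values are invented, and in practice the variables should be put on comparable scales before computing distances.

```python
import numpy as np
import pandas as pd
from sklearn.impute import KNNImputer

# Toy training data with two clearly separated groups of borrowers
train = pd.DataFrame({
    "person_income": [20.0, 22.0, 60.0, 62.0],
    "person_emp_length": [1.0, 1.0, 9.0, 9.0],
})

# Test data with missing employment length
test = pd.DataFrame({
    "person_income": [21.0, 61.0],
    "person_emp_length": [np.nan, np.nan],
})

# Neighbors are always searched in the training data
imputer = KNNImputer(n_neighbors=2)
imputer.fit(train)

test_imputed = pd.DataFrame(imputer.transform(test), columns=test.columns)
print(test_imputed)
```

Each missing value is replaced by the average employment length of the two training borrowers with the closest income.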

However, in this article, we adopt a conservative imputation strategy, which is commonly used in credit scoring. The idea is to assign missing values to a category associated with a higher probability of default.

In our previous analysis, we observed that borrowers with the highest default rate belong to the first quartile of employment length, corresponding to customers with less than two years of employment history. To remain conservative, we therefore assign missing values for person_emp_length to 0, meaning no employment history.

Case of the Variable loan_int_rate

When we analyze the relationship between the missingness of loan_int_rate and the other continuous variables, the graphical analysis suggests no clear differences between observations with missing values and those without.

In other words, borrowers with missing interest rates appear to behave similarly to the rest of the population in terms of the other variables. This observation can also be confirmed using statistical tests.

This type of mechanism is usually referred to as MCAR (Missing Completely At Random). In this case, the missingness is independent of both the observed and unobserved variables.

When the missing data mechanism is MCAR, a simple imputation strategy is generally sufficient. In this study, we choose to impute the missing values of loan_int_rate using the median, which is robust to extreme values.

If you would like to explore missing value imputation techniques in more depth, I recommend reading this article.

The code below shows how to impute the train, test, and OOT datasets while preserving the independence between them. This approach ensures that all imputation parameters are computed using the training dataset only and then applied to the other datasets. By doing so, we limit potential biases that could otherwise affect the model’s ability to generalize to new data.

```python
def impute_missing_values(train, test, oot,
                          emp_var="person_emp_length",
                          rate_var="loan_int_rate",
                          emp_value=0):
    """
    Impute missing values using statistics computed on the training dataset.

    Parameters
    ----------
    train, test, oot : pandas.DataFrame
        Datasets to process.
    emp_var : str
        Variable representing employment length.
    rate_var : str
        Variable representing interest rate.
    emp_value : int or float
        Value used to impute employment length (conservative strategy).

    Returns
    -------
    train_imp, test_imp, oot_imp : pandas.DataFrame
        Imputed datasets.
    """
    # Copy datasets to avoid modifying originals
    train_imp = train.copy()
    test_imp = test.copy()
    oot_imp = oot.copy()

    # ----------------------------
    # Compute statistics on TRAIN
    # ----------------------------
    rate_median = train_imp[rate_var].median()

    # ----------------------------
    # Create missing indicators
    # ----------------------------
    for df in [train_imp, test_imp, oot_imp]:
        df[f"{emp_var}_missing"] = df[emp_var].isnull().astype(int)
        df[f"{rate_var}_missing"] = df[rate_var].isnull().astype(int)

    # ----------------------------
    # Apply imputations
    # ----------------------------
    for df in [train_imp, test_imp, oot_imp]:
        df[emp_var] = df[emp_var].fillna(emp_value)
        df[rate_var] = df[rate_var].fillna(rate_median)

    return train_imp, test_imp, oot_imp


# Apply the imputation
train_imputed, test_imputed, oot_imputed = impute_missing_values(
    train=train_clean_outlier,
    test=test_clean_outlier,
    oot=oot_clean_outlier,
    emp_var="person_emp_length",
    rate_var="loan_int_rate",
    emp_value=0
)
```

We have now treated both outliers and missing values. To keep the article focused, we will stop here and move on to the conclusion. At this stage, the train, test, and OOT datasets can be safely saved.

```python
train_imputed.to_csv("train_imputed.csv", index=False)
test_imputed.to_csv("test_imputed.csv", index=False)
oot_imputed.to_csv("oot_imputed.csv", index=False)
```

In the next article, we will analyze correlations among variables to perform robust variable selection. We will also introduce the discretization of continuous variables and study two important properties for credit scoring models: monotonicity and stability over time.

Conclusion

This article is part of a series dedicated to building credit scoring models that are both robust and stable over time.

In this article, we highlighted the importance of handling outliers and missing values during the preprocessing stage. Properly treating these issues helps prevent biases that could otherwise distort the model and reduce its ability to generalize to new borrowers.

To preserve this generalization capability, all preprocessing steps must be calibrated using only the training dataset, while maintaining strict independence from the test and out-of-time (OOT) datasets. Once the transformations are defined on the training data, they must then be replicated exactly on the test and OOT datasets.

In the next article, we will analyze the relationships between the target variable and the explanatory variables, following the same methodological principle, that is, preserving the independence between the train, test, and OOT datasets.

Image Credits

All images and visualizations in this article were created by the author using Python (pandas, matplotlib, seaborn, and plotly) and Excel, unless otherwise stated.

Data & Licensing

The dataset used in this article is licensed under the Creative Commons Attribution 4.0 International (CC BY 4.0) license.

This license allows anyone to share and adapt the dataset for any purpose, including commercial use, provided that proper attribution is given to the source.

For more details, see the official license text: CC0: Public Domain.

Disclaimer

Any remaining errors or inaccuracies are the author’s responsibility. Feedback and corrections are welcome.