Introduction

We regularly encounter prediction problems where the outcome has an unusual distribution: a large mass of zeros combined with a continuous or count distribution for positive values. If you’ve worked in any customer-facing domain, you’ve almost certainly run into this. Think about predicting customer spending. In any given week, the vast majority of users on your platform don’t purchase anything at all, but the ones who do might spend anywhere from \$5 to \$5,000. Insurance claims follow a similar pattern: most policyholders don’t file anything in a given quarter, but the claims that do come in vary enormously in size. You see the same structure in loan prepayments, employee turnover timing, ad click revenue, and countless other business outcomes.

The instinct for most teams is to reach for a standard regression model and try to make it work. I’ve seen this play out multiple times. Someone fits an OLS model, gets negative predictions for half the customer base, adds a floor at zero, and calls it a day. Or they try a log-transform, run into the $\log(0)$ problem, tack on a $+1$ offset, and hope for the best. These workarounds might work, but they gloss over a fundamental issue: the zeros and the positive values in your data are often generated by completely different processes. A customer who will never buy your product is fundamentally different from a customer who buys occasionally but happened not to this week. Treating them the same way in a single model forces the algorithm to compromise on both groups, and it usually does a poor job on each.

The two-stage hurdle model provides a more principled solution by decomposing the problem into two distinct questions.

First, will the outcome be zero or positive?

And second, given that it’s positive, what will the value be?

By separating the “if” from the “how much,” we can use the right tools on each sub-problem independently with different algorithms, different features, and different assumptions, then combine the results into a single prediction.

In this article, I’ll walk through the theory behind hurdle models, provide a working Python implementation, and discuss the practical considerations that matter when deploying these models in production.

Readers who are already familiar with the motivation can skip straight to the Implementation section.

The Problem with Standard Approaches

Why Not Just Use Linear Regression? To make this concrete, consider predicting customer spend.

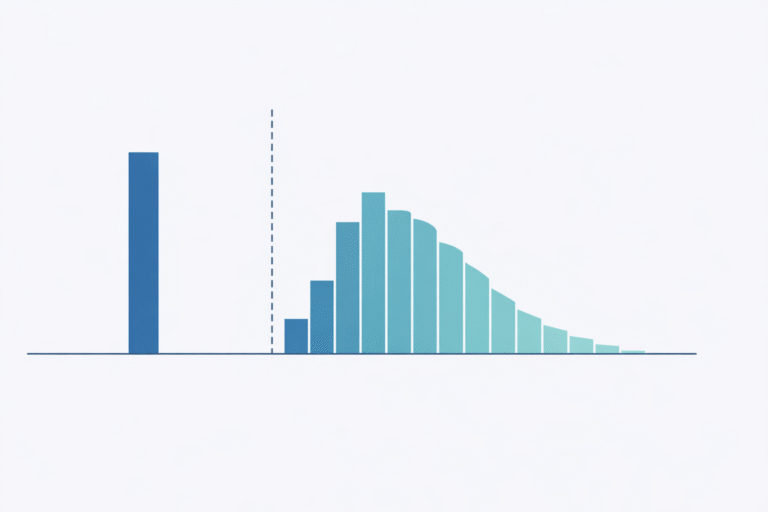

If 80% of customers spend zero and the remaining 20% spend between 10 and 1000 dollars, a linear regression model immediately runs into trouble.

The model can (and will) predict negative spend for some customers, which is nonsensical since you can’t spend negative dollars.

It will also struggle at the boundary: the massive spike at zero pulls the regression line down, so the model overpredicts for customers who spend nothing and underpredicts for customers who do spend.

The variance structure is also wrong.

Customers who spend nothing have zero variance by definition, while customers who do spend have high variance.

While you can use heteroskedasticity-robust standard errors to get valid inference despite non-constant variance, that only fixes the standard errors and doesn’t fix the predictions themselves.

The fitted values are still coming from a linear model that’s trying to average over a spike at zero and a right-skewed positive distribution, which is a poor fit regardless of how you compute the confidence intervals.
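
To see the negative-prediction problem on a small scale, here is a minimal sketch on simulated data. The single feature `x`, the purchase probabilities, and the gamma-distributed spend are all assumptions made up for illustration, not real customer data.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 5_000

# Hypothetical single feature, e.g. a standardized engagement score.
x = rng.normal(size=n)

# Simulated spend: roughly 20% of customers buy; customers with higher x
# are more likely to buy and spend more when they do.
buy_prob = 1.0 / (1.0 + np.exp(-1.5 * (x - 1.2)))
buys = rng.random(n) < buy_prob
spend = np.where(buys, rng.gamma(shape=2.0, scale=50.0 * np.exp(0.3 * x)), 0.0)

# Ordinary least squares with an intercept, solved directly.
design = np.column_stack([np.ones(n), x])
coef, *_ = np.linalg.lstsq(design, spend, rcond=None)
fitted = design @ coef

share_zero = float((spend == 0).mean())
share_negative = float((fitted < 0).mean())
print(f"share of zeros in the data:    {share_zero:.2f}")
print(f"share of negative predictions: {share_negative:.2f}")
```

On data shaped like this, a noticeable share of customers receives a negative predicted spend, even though no observed value is below zero.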

Why Not Log-Transform? The next thing most people try is a log-transform: $\log(y + 1)$ or $\log(y + \epsilon)$.

This compresses the right tail and makes the positive values look more normal, but it introduces its own set of problems.

The choice of offset ($1$ or $\epsilon$) is arbitrary, and your predictions will change depending on what you pick.

When you back-transform via $\exp(\hat{y}) - 1$, you introduce a systematic bias due to Jensen’s inequality: because the exponential is convex, $E[\exp(Z)] \geq \exp(E[Z])$, so exponentiating the predicted mean of the log underestimates the mean on the original scale.

More fundamentally, the model still doesn’t distinguish between a customer who never spends and one who sometimes spends but happened to be zero this period.

Both get mapped to $\log(0 + 1) = 0$, and the model treats them identically even though they represent very different customer behaviors.
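
The back-transformation bias is easy to check numerically. The sketch below assumes positive spend values whose logarithm is normally distributed (a choice made purely for the demonstration) and compares the true mean with what you get by averaging on the log scale and then exponentiating.

```python
import numpy as np

rng = np.random.default_rng(1)

# Simulated positive spend with a normal log (an assumption for this demo).
log_spend = rng.normal(loc=4.0, scale=1.0, size=100_000)
spend = np.exp(log_spend)

# Best case for the log model: it recovers the mean of log(y + 1) exactly.
mean_of_log = np.log1p(spend).mean()
naive_back_transform = np.expm1(mean_of_log)

true_mean = spend.mean()
print(f"true mean spend:         {true_mean:8.2f}")
print(f"exp(mean(log(y+1))) - 1: {naive_back_transform:8.2f}")
```

Even with a perfect fit on the log scale, the back-transformed value sits well below the true mean, which is exactly the gap Jensen’s inequality predicts.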

What This Means for Forecasting. The deeper issue with forcing a single model onto zero-inflated data goes beyond poor point estimates.

When you ask one model to describe two fundamentally different behaviors (not engaging at all vs. engaging at varying intensities), you end up with a model that conflates the drivers of each.

The features that predict whether a customer will purchase at all are often quite different from the features that predict how much they’ll spend given a purchase.

Recency and engagement frequency might dominate the “will they buy” question, while income and product category preferences matter more for the “how much” question.

A single regression mixes these signals together, making it difficult to disentangle what’s actually driving the forecast.

This also has practical implications for how you act on the model.

If your forecast is low for a particular customer, is it because they’re unlikely to purchase, or because they’re likely to purchase but at a small amount?

The optimal business response to each scenario is different.

You might send a re-engagement campaign for the first case and an upsell offer for the second.

A single model gives you one number and no way to tell which lever to pull.

The Two-Stage Hurdle Model

Conceptual Framework. The core idea behind hurdle models is surprisingly intuitive.

Zeros and positives often arise from different data-generating processes, so we should model them separately.

Think of it as two sequential questions your model needs to answer.

First, does this customer cross the “hurdle” and engage at all?

And second, given that they’ve engaged, how much do they spend?

Formally, we can write the distribution of the outcome $Y$ conditional on features $X$ as:

$$ P(Y = y | X) = \begin{cases} 1 - \pi(X) & \text{if } y = 0 \\ \pi(X) \cdot f(y | X, y > 0) & \text{if } y > 0 \end{cases} $$

Here, $\pi(X)$ is the probability of crossing the hurdle (having a positive outcome), and $f(y | X, y > 0)$ is the conditional distribution of $y$ given that it’s positive.

The beauty of this formulation is that these two components can be modeled independently.

You can use a gradient boosting classifier for the first stage and a gamma regression for the second, or logistic regression paired with a neural network, or any other combination that suits your data.

Each stage gets its own feature set, its own hyperparameters, and its own evaluation metrics.

This modularity is what makes hurdle models so practical in production settings.

Stage 1: The Classification Model. The first stage is a straightforward binary classification problem: predict whether $y > 0$.

You’re training on the full dataset, with every observation labeled as either zero or positive.

This is a problem that the ML community has decades of tooling for.

Logistic regression gives you an interpretable and fast baseline.

Gradient boosting methods like XGBoost or LightGBM handle non-linearities and feature interactions well.

Neural networks work when you have high-dimensional or unstructured features.

The output from this stage is $\hat{\pi}(X) = P(Y > 0 | X)$, a calibrated probability that the outcome will be positive.

The important thing to get right here is calibration.

Since we’re going to multiply this probability by the conditional amount in the next stage, we need $\hat{\pi}(X)$ to be a true probability, not just a score that ranks well.

If your classifier outputs probabilities that are systematically too high or too low, the combined prediction will inherit that bias.

Platt scaling can help if your base classifier isn’t well-calibrated out of the box.
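
As a rough sketch of what Platt scaling looks like in scikit-learn, the example below wraps a gradient boosting classifier in `CalibratedClassifierCV` with `method="sigmoid"`. The simulated features and the choice of base classifier are assumptions for illustration only.

```python
import numpy as np
from sklearn.calibration import CalibratedClassifierCV
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.metrics import brier_score_loss
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(2)
n = 4_000

# Simulated features and a binary "outcome is positive" label.
X = rng.normal(size=(n, 5))
true_prob = 1.0 / (1.0 + np.exp(-(X[:, 0] + 0.5 * X[:, 1] - 1.5)))
is_positive = (rng.random(n) < true_prob).astype(int)

X_train, X_test, z_train, z_test = train_test_split(
    X, is_positive, test_size=0.25, random_state=0
)

# method="sigmoid" is Platt scaling; cv=5 fits the calibrator on held-out folds.
calibrated = CalibratedClassifierCV(
    GradientBoostingClassifier(random_state=0), method="sigmoid", cv=5
)
calibrated.fit(X_train, z_train)
pi_hat = calibrated.predict_proba(X_test)[:, 1]

brier = brier_score_loss(z_test, pi_hat)
print(f"Brier score:           {brier:.3f}")
print(f"mean predicted P(Y>0): {pi_hat.mean():.3f}")
print(f"observed share Y>0:    {z_test.mean():.3f}")
```

A quick sanity check is that the mean predicted probability on holdout data lands close to the observed share of positives.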

Stage 2: The Conditional Regression Model. The second stage predicts the value of $y$ conditional on $y > 0$.

This is where the hurdle model shines compared to standard approaches because you’re training a regression model exclusively on the positive subset of your data, so the model never has to deal with the spike at zero.

This means you can use the full range of regression techniques without worrying about how they handle zeros.

The choice of model for this stage depends heavily on the shape of your positive outcomes.

If $\log(y | y > 0)$ is approximately normal, you can use OLS on the log-transformed target, with a bias correction such as Duan’s smearing estimator when back-transforming to the original scale.

For right-skewed positive continuous outcomes, a GLM with a gamma family is a natural choice.

If you’re dealing with overdispersed count data, negative binomial regression works well.

If you would rather not commit to a distributional form, an easy alternative is to use an AutoML ensemble such as AutoGluon for this stage and let it select among candidate models.

The output is $\hat{\mu}(X) = E[Y | X, Y > 0]$, the expected value conditional on the outcome being positive.
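
For the log-OLS option, one common bias correction on back-transformation is Duan’s smearing estimator, which rescales $\exp(\text{fitted log value})$ by the average exponentiated residual. The sketch below is a minimal version on simulated positive outcomes; it assumes the log-scale errors have constant variance, and the data-generating numbers are made up.

```python
import numpy as np

rng = np.random.default_rng(3)
n = 20_000

# Simulated positive-only outcomes: log(y) is linear in x plus normal noise.
x = rng.normal(size=n)
y_pos = np.exp(3.0 + 0.5 * x + rng.normal(scale=0.8, size=n))

# OLS on the log scale.
design = np.column_stack([np.ones(n), x])
beta, *_ = np.linalg.lstsq(design, np.log(y_pos), rcond=None)
log_fit = design @ beta
residuals = np.log(y_pos) - log_fit

# Naive back-transform versus Duan's smearing correction.
naive = np.exp(log_fit)
smearing_factor = np.exp(residuals).mean()
corrected = naive * smearing_factor

print(f"mean of actuals:          {y_pos.mean():.2f}")
print(f"mean of naive prediction: {naive.mean():.2f}")
print(f"mean after smearing:      {corrected.mean():.2f}")
```

The corrected predictions should average out close to the actuals on the positive subset, while the naive ones fall short.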

Combined Prediction. The final prediction combines both stages multiplicatively:

$$ \hat{E}[Y | X] = \hat{\pi}(X) \cdot \hat{\mu}(X) $$

This gives the unconditional expected value of $Y$, accounting for both the probability that the outcome is positive and the expected magnitude given positivity.

If a customer has a 30% chance of purchasing and their expected spend given a purchase is 100 dollars, then their unconditional expected spend is 30 dollars.
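
The multiplicative form follows directly from the law of total expectation, since the zero branch contributes nothing to the mean:

$$ E[Y | X] = P(Y = 0 | X) \cdot 0 + P(Y > 0 | X) \cdot E[Y | X, Y > 0] = \pi(X) \cdot \mu(X) $$

Plugging in the numbers from the example gives $0.30 \cdot 100 = 30$ dollars.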

This decomposition also makes business interpretation straightforward.

You can compute feature importance separately for each stage, which shows what drives the probability of engagement versus what drives the intensity of engagement, and therefore which of the two needs to be addressed.

Implementation

Training Pipeline. The training pipeline is straightforward.

We train Stage 1 on the full dataset with a binary target, then train Stage 2 on only the positive observations with the original continuous target.

At prediction time, we get a probability from Stage 1 and a conditional mean from Stage 2, then multiply them together.

We can implement this in Python using scikit-learn as a starting point.

The following class wraps both stages into a single estimator that follows the scikit-learn API, making it easy to drop into existing pipelines and use with tools like cross-validation and grid search.

    import numpy as np
    from sklearn.base import BaseEstimator, RegressorMixin, clone
    from sklearn.ensemble import GradientBoostingRegressor
    from sklearn.linear_model import LogisticRegression


    class HurdleModel(BaseEstimator, RegressorMixin):
        """
        Two-stage hurdle model for zero-inflated continuous outcomes.

        Stage 1: Binary classifier for P(Y > 0)
        Stage 2: Regressor for E[Y | Y > 0]
        """

        def __init__(self, classifier=None, regressor=None):
            # Store the arguments unmodified so get_params() and clone()
            # behave the way scikit-learn expects; defaults are set in fit().
            self.classifier = classifier
            self.regressor = regressor

        def fit(self, X, y):
            y = np.asarray(y)
            positive_mask = y > 0
            if not positive_mask.any():
                raise ValueError("Stage 2 needs at least one positive outcome.")

            # Stage 1: Train classifier on all data
            base_clf = LogisticRegression() if self.classifier is None else self.classifier
            self.classifier_ = clone(base_clf).fit(X, positive_mask.astype(int))

            # Stage 2: Train regressor on positive outcomes only
            base_reg = GradientBoostingRegressor() if self.regressor is None else self.regressor
            self.regressor_ = clone(base_reg).fit(X[positive_mask], y[positive_mask])
            return self

        def predict(self, X):
            # E[Y] = P(Y > 0) * E[Y | Y > 0]
            return self.predict_proba_positive(X) * self.predict_conditional(X)

        def predict_proba_positive(self, X):
            """Return probability of positive outcome."""
            return self.classifier_.predict_proba(X)[:, 1]

        def predict_conditional(self, X):
            """Return expected value given positive outcome."""
            return self.regressor_.predict(X)

Practical Considerations

Feature Engineering. One of the nice properties of this framework is that the two stages can use entirely different feature sets.

In my experience, the features that predict whether someone engages at all are often quite different from the features that predict how much they engage.

For Stage 1, behavioral signals tend to dominate: past activity, recency, frequency, whether the customer has ever purchased before.

Demographic indicators and contextual factors like time of year or day of week also help separate the “will engage” group from the “won’t engage” group.

For Stage 2, intensity signals matter more: historical purchase amounts, spending velocity, capacity indicators like income or credit limit, and product or category preferences.

These features help distinguish the 50 dollar spender from the 500 dollar spender, conditional on both of them making a purchase.

Furthermore, we can feed the output of the Stage 1 model into the Stage 2 model as an additional feature (a form of stacking).

This allows the Stage 2 model to learn how the probability of engagement interacts with the intensity signals, which can improve performance. To avoid leakage, generate the Stage 1 probabilities for the training rows out-of-fold.
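
Here is one way this could be wired up, as a sketch rather than a recommended recipe. It uses `cross_val_predict` to get out-of-fold Stage 1 probabilities for the training rows, so the Stage 2 model never sees a probability produced by a classifier that was trained on the same row. The simulated data and the specific estimators are assumptions.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_predict

rng = np.random.default_rng(4)
n = 3_000

# Simulated zero-inflated spend.
X = rng.normal(size=(n, 4))
buy_prob = 1.0 / (1.0 + np.exp(-(X[:, 0] - 1.0)))
positive = rng.random(n) < buy_prob
y = np.where(positive, rng.gamma(2.0, 40.0 * np.exp(0.3 * X[:, 1])), 0.0)

# Out-of-fold Stage 1 probabilities for every training row.
oof_pi = cross_val_predict(
    LogisticRegression(), X, positive.astype(int), cv=5, method="predict_proba"
)[:, 1]

# Stage 2 sees the original features plus the Stage 1 probability,
# and is trained on positive rows only.
X_stage2 = np.column_stack([X, oof_pi])[positive]
stage2 = GradientBoostingRegressor(random_state=0).fit(X_stage2, y[positive])

# At prediction time, Stage 1 is refit on all training rows.
stage1 = LogisticRegression().fit(X, positive.astype(int))
X_new = rng.normal(size=(5, 4))
pi_new = stage1.predict_proba(X_new)[:, 1]
mu_new = stage2.predict(np.column_stack([X_new, pi_new]))
expected_spend = pi_new * mu_new
print(expected_spend)
```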

Handling Class Imbalance. If zeros dominate your dataset, say 95% of observations are zero, then Stage 1 faces a class imbalance problem.

This is common in applications like ad clicks or insurance claims.

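The standard toolkit applies here: you can upweight the minority class during training through sample weights, undersample the zeros, or, if you also use Stage 1 for targeting decisions, tune the classification threshold to your specific business objective rather than using the default 0.5 cutoff. Keep in mind that reweighting and undersampling shift the predicted probabilities away from the true base rate, so recalibrate $\hat{\pi}(X)$ afterwards before multiplying it into the combined prediction.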

The key is to think carefully about what you’re optimizing for.

In many business settings, you care more about precision at the top of the ranked list than you do about overall accuracy, and tuning your threshold accordingly can make a big difference.
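
If you undersample the zeros, the classifier’s probabilities are inflated relative to the original base rate, which matters because Stage 1 feeds directly into the product. A standard odds-based correction is sketched below; the function name and the `keep_rate` parameter (the fraction of zero rows kept in training) are hypothetical names chosen for this example.

```python
import numpy as np

def correct_for_undersampling(p_sampled, keep_rate):
    """Map probabilities from a model trained on undersampled zeros
    back to the original base rate.

    keep_rate is the fraction of zero-outcome rows retained (0 < keep_rate <= 1).
    Dropping zeros multiplies the odds of a positive by 1 / keep_rate,
    so the correction multiplies the odds back by keep_rate.
    """
    p_sampled = np.asarray(p_sampled, dtype=float)
    return keep_rate * p_sampled / (keep_rate * p_sampled - p_sampled + 1.0)

p_sampled = np.array([0.10, 0.50, 0.90])
p_corrected = correct_for_undersampling(p_sampled, keep_rate=0.1)
print(p_corrected)
```

With only 10% of the zeros kept, a raw score of 0.50 corresponds to a probability of roughly 0.09 in the original population, which is a large difference once it is multiplied by the conditional amount.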

Model Calibration. Since the combined prediction $\hat{\pi}(X) \cdot \hat{\mu}(X)$ is a product of two models, both need to be well-calibrated for the final output to be reliable.

If Stage 1’s probabilities are systematically inflated by 10%, your combined predictions will be inflated by 10% across the board, regardless of how good Stage 2 is.

For Stage 1, check calibration curves and apply Platt scaling if the raw probabilities are off.

For Stage 2, verify that the predictions are unbiased on the positive subset, meaning the mean of your predictions should roughly match the mean of the actuals when evaluated on holdout data where $y > 0$.

I’ve found that calibration issues in Stage 1 are the more common source of problems in practice, especially when extending the classifier to a discrete-time hazard model.
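
To make the error propagation explicit, suppose Stage 1 is off by a relative factor $a$ and Stage 2 by a relative factor $b$ (both symbols are introduced here just for illustration). Then:

$$ \hat{\pi}(X) \cdot \hat{\mu}(X) = (1 + a)(1 + b) \cdot \pi(X) \cdot \mu(X), \qquad (1 + a)(1 + b) - 1 = a + b + ab $$

With $a = 0.10$ and $b = 0$, the combined prediction is 10% too high. With $a = 0.10$ and $b = -0.05$, the errors partly cancel and the combined prediction is 4.5% too high, which is why it is worth checking each stage on its own rather than only the product.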

Evaluation Metrics. Evaluating a two-stage model requires thinking about each stage separately and then looking at the combined output.

For Stage 1, standard classification metrics apply: AUC-ROC and AUC-PR for ranking quality, precision and recall at your chosen threshold for operational performance, and the Brier score for calibration.

For Stage 2, you should evaluate only on the positive subset since that’s what the model was trained on.

RMSE and MAE give you a sense of absolute error, MAPE tells you about percentage errors (which matters when your outcomes span several orders of magnitude), and quantile coverage tells you whether your prediction intervals are honest.

For the combined model, look at overall RMSE and MAE on the full test set, but also break it down by whether the true outcome was zero or positive.

A model that looks great on aggregate might be terrible at one end of the distribution.

Lift charts by predicted decile are also useful for communicating model performance to stakeholders who don’t think in terms of RMSE.
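
A small helper along these lines can produce the per-stage and combined breakdown in one pass. This is a sketch using only NumPy; the function name `hurdle_report` and the metric keys are hypothetical, and the toy arrays are made up to show the mechanics.

```python
import numpy as np

def hurdle_report(y_true, pi_hat, mu_hat):
    """Summarize Stage 1, Stage 2, and combined errors for a hurdle model."""
    y_true = np.asarray(y_true, dtype=float)
    pi_hat = np.asarray(pi_hat, dtype=float)
    mu_hat = np.asarray(mu_hat, dtype=float)
    is_pos = y_true > 0
    combined = pi_hat * mu_hat

    def rmse(actual, predicted):
        return float(np.sqrt(np.mean((actual - predicted) ** 2)))

    return {
        # Stage 1: Brier score against the zero/positive indicator.
        "stage1_brier": float(np.mean((pi_hat - is_pos.astype(float)) ** 2)),
        # Stage 2: evaluated on the positive subset only.
        "stage2_rmse_positives": rmse(y_true[is_pos], mu_hat[is_pos]),
        "stage2_mean_ratio": float(mu_hat[is_pos].mean() / y_true[is_pos].mean()),
        # Combined prediction: overall, then split by true outcome.
        "combined_rmse_all": rmse(y_true, combined),
        "combined_mae_zeros": float(np.mean(np.abs(combined[~is_pos]))),
        "combined_mae_positives": float(np.mean(np.abs(y_true[is_pos] - combined[is_pos]))),
    }

y_true = np.array([0.0, 0.0, 0.0, 50.0, 200.0])
pi_hat = np.array([0.1, 0.2, 0.1, 0.6, 0.9])
mu_hat = np.array([80.0, 60.0, 90.0, 70.0, 180.0])
report = hurdle_report(y_true, pi_hat, mu_hat)
for name, value in report.items():
    print(f"{name:>24}: {value:.3f}")
```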

When to Use Hurdle vs. Zero-Inflated Models. This is a distinction worth getting right, because hurdle models and zero-inflated models (like ZIP or ZINB) make different assumptions about where the zeros come from.

Hurdle models assume that all zeros arise from a single process, the “non-participation” process.

Once you cross the hurdle, you’re in the positive regime, and the zeros are fully explained by Stage 1.

Zero-inflated models, on the other hand, assume that zeros can come from two sources: some are “structural” zeros (customers who could never be positive, like someone who doesn’t own a car being asked about auto insurance claims), and others are “sampling” zeros (customers who could have been positive but just weren’t this time).

To make this concrete with a retail example: a hurdle model says a customer either decides to shop or doesn’t, and if they shop, they spend some positive amount.

A zero-inflated model says some customers never shop at this store (structural zeros), while others do shop here occasionally but just didn’t today (sampling zeros).

If your zeros genuinely come from two distinct populations, a zero-inflated model is more appropriate.

But in many practical settings, the hurdle framing is both simpler and sufficient, and I’d recommend starting there unless you have a clear reason to believe in two types of zeros.
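
For count outcomes, the difference is easiest to see by writing out the two models side by side. Assuming a Poisson count component with rate $\lambda$, and writing $\psi$ for the structural-zero probability (both symbols introduced here for illustration), a Poisson hurdle model is:

$$ P(Y = y) = \begin{cases} 1 - \pi & \text{if } y = 0 \\ \pi \cdot \dfrac{\lambda^y e^{-\lambda} / y!}{1 - e^{-\lambda}} & \text{if } y = 1, 2, \dots \end{cases} $$

while a zero-inflated Poisson model is:

$$ P(Y = y) = \begin{cases} \psi + (1 - \psi) \cdot e^{-\lambda} & \text{if } y = 0 \\ (1 - \psi) \cdot \lambda^y e^{-\lambda} / y! & \text{if } y = 1, 2, \dots \end{cases} $$

In the hurdle model the count distribution is truncated at zero, so every zero is attributed to the first stage. In the zero-inflated model the count distribution keeps its own mass at zero, which is where the sampling zeros come from.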

Extensions and Variations

Multi-Class Hurdle. Sometimes the binary split between zero and positive isn’t granular enough.

If your outcome has multiple meaningful states (say none, small, and large), you can extend the hurdle framework into a multi-class version.

The first stage becomes a multinomial classifier that assigns each observation to one of $K$ buckets, and then separate regression models handle each bucket’s conditional distribution.

Formally, this looks like:

$$ P(Y) = \begin{cases} \pi_0 & \text{if } Y = 0 \\ \pi_1 \cdot f_{\text{small}}(Y) & \text{if } 0 < Y \leq \tau \\ \pi_2 \cdot f_{\text{large}}(Y) & \text{if } Y > \tau \end{cases} $$

This is particularly useful when the positive outcomes themselves have distinct sub-populations.

For instance, in modeling insurance claims, there’s often a clear separation between small routine claims and large catastrophic ones, and trying to fit a single distribution to both leads to poor tail estimates.

The threshold $\tau$ can be set based on domain knowledge or estimated from the data using mixture model techniques.
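
The combined prediction generalizes in the obvious way: the expected value is the sum over buckets of the bucket probability times the bucket’s conditional mean, with the zero bucket contributing nothing. Below is a minimal sketch; the function name `combine_buckets` and the toy numbers are hypothetical.

```python
import numpy as np

def combine_buckets(bucket_probs, bucket_means):
    """Expected value from a multi-class hurdle model.

    bucket_probs: (n_rows, K) class probabilities from the first stage.
    bucket_means: (n_rows, K) conditional means per bucket (0 for the zero bucket).
    """
    bucket_probs = np.asarray(bucket_probs, dtype=float)
    bucket_means = np.asarray(bucket_means, dtype=float)
    if not np.allclose(bucket_probs.sum(axis=1), 1.0):
        raise ValueError("Each row of bucket_probs must sum to 1.")
    return (bucket_probs * bucket_means).sum(axis=1)

# Columns: none, small, large.
bucket_probs = np.array([[0.80, 0.15, 0.05],
                         [0.30, 0.50, 0.20]])
bucket_means = np.array([[0.0, 40.0, 900.0],
                         [0.0, 60.0, 1200.0]])
expected_value = combine_buckets(bucket_probs, bucket_means)
print(expected_value)
```

Note how the second row’s prediction is dominated by the large bucket even though that bucket only has a 20% probability, which is why tail estimates deserve their own model.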

Generalizing the Stages. One thing worth emphasizing is that neither stage needs to be a specific type of model.

Throughout this article, I’ve presented Stage 1 as a binary classifier, but that’s just the simplest version.

If the timing of the event matters, you could replace Stage 1 with a discrete-time survival model that predicts not just whether a customer will purchase, but when.

This is especially useful for subscription or retention contexts where the “hurdle” has a temporal dimension.
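
If Stage 1 produces per-period hazards (the probability of a first purchase in period $t$ given none so far), you still need a single probability for the forecast horizon. The sketch below shows that conversion; the function name and the example hazards are assumptions, and in practice the hazards would come from a classifier trained on person-period data.

```python
import numpy as np

def prob_event_within_horizon(hazards):
    """Cumulative probability of the event by the end of each period.

    hazards[..., t] is P(event in period t | no event before t).
    """
    hazards = np.asarray(hazards, dtype=float)
    survival = np.cumprod(1.0 - hazards, axis=-1)
    return 1.0 - survival

hazards = np.array([0.10, 0.20, 0.05])
cumulative = prob_event_within_horizon(hazards)
print(cumulative)
```

The last entry plays the role of $\hat{\pi}(X)$ for the full horizon, and the intermediate entries tell you how that probability builds up over time.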

Similarly, Stage 2 doesn’t have to be a single hand-tuned regression.

You could use an AutoML framework like AutoGluon to ensemble over a large set of candidate models (gradient boosting, neural networks, linear models) and let it find the best combination for predicting the conditional amount.

The hurdle framework is agnostic to what sits inside each stage, so you should feel free to swap in whatever modeling approach best fits your data and use case.

Common Pitfalls

These are mistakes I’ve either made myself or seen others make when deploying hurdle models.

None of them are obvious until you’ve been bitten, so they’re worth reading through even if you’re already comfortable with the framework.

1. Leaking Stage 2 Information into Stage 1. If you engineer features from the target, something like “average historical spend” or “total lifetime value,” you need to be careful about how that information flows into each stage.

A feature that summarizes past spend implicitly contains information about whether the customer has ever spent anything, which means Stage 1 might be getting a free signal that wouldn’t be available at prediction time for new customers.

The fix is to think carefully about the temporal structure of your features and make sure both stages only see information that would be available at the time of prediction.

2. Ignoring the Conditional Nature of Stage 2. This one is subtle but important.

Stage 2 is trained only on observations where $y > 0$, so it should be evaluated only on that subset too.

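I’ve seen people compute RMSE across the full test set (including zeros) and conclude that Stage 2 is terrible.

That comparison is unfair: Stage 2 estimates $E[Y | X, Y > 0]$ and is never meant to predict a zero, so every zero row shows up as a large error by construction.

So when you’re reporting metrics for Stage 2, always filter to the positive subset first.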

Similarly, when diagnosing issues with the combined model, make sure you decompose the error into its Stage 1 and Stage 2 components.

A high overall error might be driven entirely by poor classification in Stage 1, even if Stage 2 is doing fine on the positive observations.

4. Misaligned Train/Test Splits. Both stages need to use the same train/test splits.

This sounds obvious, but it’s easy to mess up in practice, especially if you’re training the two stages in separate notebooks or pipelines.

If Stage 1 sees a customer in training but Stage 2 sees the same customer in its test set (because you re-split the positive-only data independently), you’ve introduced data leakage.

The simplest fix is to do your train/test split once at the beginning on the full dataset, and then derive the Stage 2 training data by filtering the training fold to positive observations.

If you’re doing cross-validation, the fold assignments must be consistent across both stages.
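
In code, the "split once, then filter" rule can be as simple as the sketch below. It works with row indices so that both stages, and every cross-validation fold, are derived from the same assignment. The simulated data and variable names are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(5)
n = 1_000

# Simulated zero-inflated outcome.
X = rng.normal(size=(n, 3))
y = np.where(rng.random(n) < 0.2, rng.gamma(2.0, 50.0, size=n), 0.0)

# Split once on the full dataset.
shuffled = rng.permutation(n)
test_idx, train_idx = shuffled[:200], shuffled[200:]

# Stage 1 uses every training row; Stage 2 filters the SAME training rows.
stage1_train_idx = train_idx
stage2_train_idx = train_idx[y[train_idx] > 0]
stage2_test_idx = test_idx[y[test_idx] > 0]

# For cross-validation, assign folds once and reuse them for both stages.
fold_of_row = np.full(n, -1)
fold_of_row[train_idx] = rng.integers(0, 5, size=train_idx.size)
stage2_folds = fold_of_row[stage2_train_idx]

print(len(stage1_train_idx), len(stage2_train_idx), len(stage2_test_idx))
```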

5. Assuming Independence Between Stages. While we model the two stages separately, the underlying features and outcomes are often correlated in ways that matter.

Customers with high $\hat{\pi}(X)$ (likely to engage) often also have high $\hat{\mu}(X)$ (likely to spend a lot when they do).

This means the multiplicative combination $\hat{\pi}(X) \cdot \hat{\mu}(X)$ can amplify errors in ways you wouldn’t see if the stages were truly independent.

Keep this in mind when interpreting feature importance.

A feature that shows up as important in both stages is doing double duty, and its total contribution to the combined prediction is larger than either stage’s importance score suggests.

Final Remarks

Alternate Uses. Beyond the examples covered in this article, hurdle models show up in a surprising variety of business contexts.

In marketing, they’re a natural fit for modeling customer lifetime value, where many customers churn before making a second purchase, creating a mass of zeros, while retained customers generate widely varying amounts of revenue.

In healthcare analytics, patient cost modeling follows the same pattern: most patients have zero claims in a given period, but the claims that do come in range from routine office visits to major surgeries.

For demand forecasting with intermittent demand patterns (spare parts, luxury goods, B2B transactions), the two-stage decomposition naturally captures the sporadic nature of purchases and avoids the smoothing artifacts that plague traditional time series methods.

In credit risk, expected loss calculations are inherently a hurdle problem: what’s the probability of default (Stage 1), and what’s the loss given default (Stage 2)?

If you’re working with any outcome where zeros have a fundamentally different meaning than “just a small value,” hurdle models are worth considering as a first approach.

Two-stage hurdle models provide a principled approach to predicting zero-inflated outcomes by decomposing the problem into two conceptually distinct parts: whether an event occurs and what magnitude it takes conditional on occurrence.

This decomposition offers flexibility, since each stage can use different algorithms, features, and tuning strategies.

It offers interpretability, because you can separately analyze and present what drives participation versus what drives intensity, which is often exactly the breakdown that product managers and executives want to see.

And it often delivers better predictive performance than a single model trying to handle both the spike at zero and the continuous positive distribution simultaneously.

The key insight is recognizing that zeros and positive values often arise from different mechanisms, and modeling them separately respects that structure rather than fighting against it.

While this article covers the core framework, we haven’t touched on several other important extensions that deserve their own treatment.

Bayesian formulations of hurdle models can incorporate prior knowledge and provide natural uncertainty quantification, which would tie in nicely with our hierarchical Bayesian series.

Imagine estimating product-level hurdle models where products with sparse data borrow strength from their category.

Deep learning approaches open up the possibility of using unstructured features (text, images) in either stage.

If you have the opportunity to apply hurdle models in your own work, I’d love to hear about it!

Please do not hesitate to reach out with questions, insights, or stories through my email or LinkedIn.

If you have any feedback on this article, or would like to request another topic in causal inference/machine learning, please also feel free to reach out.

Thank you for reading!