The Midnight Paradox

Imagine this. You’re building a model to predict electricity demand or taxi pickups. So you feed it the time of day, say as minutes since midnight. Clean and simple. Right?

Now your model sees 23:59 (minute 1439 of the day) and 00:01 (minute 1 of the day). To you, they’re two minutes apart. To your model, they’re 1,438 units apart, almost a full day. That’s the midnight paradox. And yes, your model is probably time-blind.
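To make the gap concrete, here is a minimal sketch in plain Python. The helper names `linear_gap` and `circular_gap` are made up for illustration; they simply compare the distance a model sees with the distance a clock sees.

```python
MINUTES_PER_DAY = 24 * 60  # 1440 minutes in a day


def linear_gap(a: int, b: int) -> int:
    """Distance the model sees when minutes are plain numbers."""
    return abs(a - b)


def circular_gap(a: int, b: int, period: int = MINUTES_PER_DAY) -> int:
    """Shortest distance around the clock, going in either direction."""
    d = abs(a - b) % period
    return min(d, period - d)


late = 23 * 60 + 59  # 23:59 -> minute 1439
early = 1            # 00:01 -> minute 1

print(linear_gap(late, early))    # 1438
print(circular_gap(late, early))  # 2
```

The second number is the one you have in your head. The first is the one a raw numeric feature hands to the model.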

Why does this happen?

Because most machine learning models treat numbers as straight lines, not circles.

Linear regression, KNN, SVMs, and even neural networks treat a numeric feature as a magnitude on a line, assuming higher numbers are “more” than lower ones. They don’t know that time wraps around. Midnight is the edge case they never forgive.

If you’ve ever added the hour to your model and later wondered why it still struggles around day boundaries, this is likely why.

The Failure of Standard Encoding

Let’s talk about the usual approaches. You’ve probably used at least one of them.

You encode hours as numbers from 0 to 23. Now there’s an artificial cliff between hour 23 and hour 0, and the model treats midnight as the biggest jump of the day. But is midnight really more different from 11 PM than 10 PM is from 9 PM?

Of course not. But your model doesn’t know that.

Here is how the hours look in “linear” mode.

# Imports

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

# Generate data

date_today = pd.to_datetime('today').normalize()

datetime_24_hours = pd.date_range(start=date_today, periods=24, freq='h')

df = pd.DataFrame({'dt': datetime_24_hours})

df['hour'] = df['dt'].dt.hour

# Calculate Sin and Cosine

df["hour_sin"] = np.sin(2 * np.pi * df["hour"] / 24)

df["hour_cos"] = np.cos(2 * np.pi * df["hour"] / 24)

# Plot the Hours in Linear mode

plt.figure(figsize=(15, 5))

plt.plot(df['hour'], [1]*24, linewidth=3)

plt.title('Hours in Linear Mode')

plt.xlabel('Hour')

plt.xticks(np.arange(0, 24, 1))

plt.ylabel('Value')

plt.show()

What if we one-hot encode the hours? Twenty-four binary columns. Problem solved, right? Well… partially. You fixed the artificial gap, but you lost proximity. 2 AM is no longer closer to 3 AM than to 10 PM.

You also exploded dimensionality: one column became 24. For trees, that means many sparse columns to split on. For linear models, it means 24 coefficients for what is really one variable.
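The loss of proximity is easy to check. The sketch below (variable and function names are illustrative) builds the 24 one-hot vectors with NumPy and measures the Euclidean distance between a few pairs of hours.

```python
import numpy as np

# One row per hour: row h is the one-hot vector for hour h.
one_hot = np.eye(24)


def dist(a: int, b: int) -> float:
    """Euclidean distance between the one-hot vectors of two hours."""
    return float(np.linalg.norm(one_hot[a] - one_hot[b]))


d_2_3 = dist(2, 3)    # 2 AM vs 3 AM
d_2_22 = dist(2, 22)  # 2 AM vs 10 PM
d_23_0 = dist(23, 0)  # 11 PM vs midnight

# Every pair of distinct hours is exactly sqrt(2) apart.
print(d_2_3, d_2_22, d_23_0)
```

The midnight cliff is gone, but so is any notion of “nearby”: every hour is equally far from every other hour.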

So, let’s move on to a feasible alternative.

The Solution: Trigonometric Mapping

Here’s the mindset shift:

Stop thinking about time as a line. Think about it as a circle.

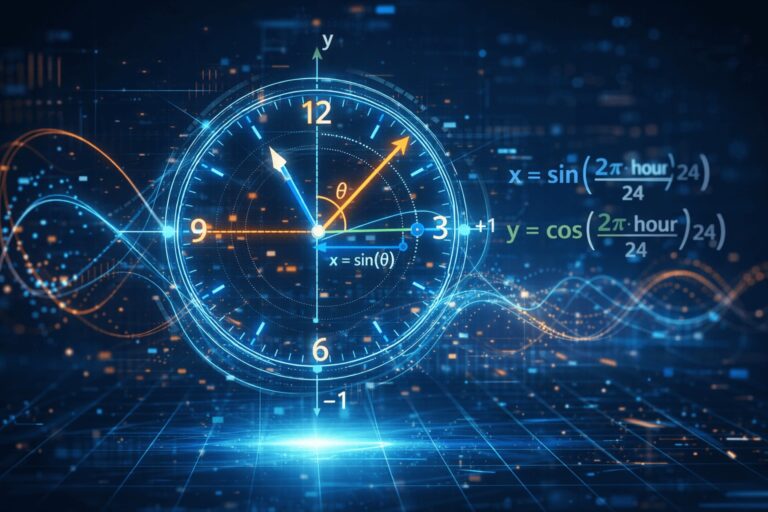

A 24-hour day loops back to itself, so your encoding should loop too. Each hour is an evenly spaced point on a circle. To represent a point on a circle, you don’t use one number; you use two coordinates: x and y.

That’s where sine and cosine come in.

The geometry behind it

Every angle on a circle can be mapped to a unique point using sine and cosine. This gives your model a smooth, continuous representation of time.

plt.figure(figsize=(5, 5))

plt.scatter(df['hour_sin'], df['hour_cos'], linewidth=3)

plt.title('Hours in Cyclical Mode')

plt.xlabel('hour_sin')

plt.ylabel('hour_cos')

plt.show()

Here’s the math behind the cyclical encoding for hours of the day:

- First, 2 * π * hour / 24 converts each hour into an angle. Midnight and 11 PM end up next to each other on the circle.

- Then sine and cosine project that angle into two coordinates.

- Those two values together uniquely define the hour. Now 23:00 and 00:00 are close in feature space. Exactly what you wanted all along.

The same idea works for minutes, days of the week, or months of the year.
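The steps above can be checked numerically. This is a small sketch, not part of the experiment that follows; `feature_distance` is a helper invented here to measure how far apart two hours sit in the (sin, cos) plane.

```python
import numpy as np

hours = np.arange(24)
angle = 2 * np.pi * hours / 24  # hour -> angle in radians
points = np.column_stack([np.sin(angle), np.cos(angle)])  # one (sin, cos) row per hour


def feature_distance(a: int, b: int) -> float:
    """Euclidean distance between two hours in (sin, cos) space."""
    return float(np.linalg.norm(points[a] - points[b]))


gap_23_to_0 = feature_distance(23, 0)  # across midnight
gap_0_to_1 = feature_distance(0, 1)    # an ordinary one-hour step
gap_0_to_12 = feature_distance(0, 12)  # midnight vs noon, opposite sides

print(round(gap_23_to_0, 3), round(gap_0_to_1, 3), round(gap_0_to_12, 3))
```

The step across midnight comes out the same size as any other one-hour step, while midnight and noon sit at opposite ends of the circle, a full diameter apart.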

Code

Let’s experiment with the Appliances Energy Prediction dataset [4]. We will try to improve the prediction using a Random Forest Regressor (a tree-based model).

Candanedo, L. (2017). Appliances Energy Prediction [Dataset]. UCI Machine Learning Repository. https://doi.org/10.24432/C5VC8G. Creative Commons 4.0 License.

# Imports

import numpy as np

import pandas as pd

from sklearn.ensemble import RandomForestRegressor

from sklearn.model_selection import train_test_split

from sklearn.metrics import root_mean_squared_error

from ucimlrepo import fetch_ucirepo

Get data.

# fetch dataset

appliances_energy_prediction = fetch_ucirepo(id=374)

# data (as pandas dataframes)

X = appliances_energy_prediction.data.features

y = appliances_energy_prediction.data.targets

# To Pandas

df = pd.concat([X, y], axis=1)

df['date'] = df['date'].apply(lambda x: x[:10] + ' ' + x[11:])

df['date'] = pd.to_datetime(df['date'])

df['month'] = df['date'].dt.month

df['day'] = df['date'].dt.day

df['hour'] = df['date'].dt.hour

df.head(3)

Let’s create a quick model with the linear time first, as our baseline for comparison.

# X and y

# X = df.drop(['Appliances', 'rv1', 'rv2', 'date'], axis=1)

X = df[['hour', 'day', 'T1', 'RH_1', 'T_out', 'Press_mm_hg', 'RH_out', 'Windspeed', 'Visibility', 'Tdewpoint']]

y = df['Appliances']

# Train Test Split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

# Fit the model

lr = RandomForestRegressor().fit(X_train, y_train)

# Score (R^2 on the training data)

print(f'Score: {lr.score(X_train, y_train)}')

# Test RMSE

y_pred = lr.predict(X_test)

rmse = root_mean_squared_error(y_test, y_pred)

print(f'RMSE: {rmse}')

The results are here.

Score: 0.9395797670166536

RMSE: 63.60964667197874

Next, we will encode the cyclical time components (day and hour) and retrain the model.

# Add cyclical hours sin and cosine

df['hour_sin'] = np.sin(2 * np.pi * df['hour'] / 24)

df['hour_cos'] = np.cos(2 * np.pi * df['hour'] / 24)

df['day_sin'] = np.sin(2 * np.pi * df['day'] / 31)

df['day_cos'] = np.cos(2 * np.pi * df['day'] / 31)

# X and y

X = df[['hour_sin', 'hour_cos', 'day_sin', 'day_cos','T1', 'RH_1', 'T_out', 'Press_mm_hg', 'RH_out', 'Windspeed', 'Visibility', 'Tdewpoint']]

y = df['Appliances']

# Train Test Split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

# Fit the model

lr_cycle = RandomForestRegressor().fit(X_train, y_train)

# Score (R^2 on the training data)

print(f'Score: {lr_cycle.score(X_train, y_train)}')

# Test RMSE

y_pred = lr_cycle.predict(X_test)

rmse = root_mean_squared_error(y_test, y_pred)

print(f'RMSE: {rmse}')

And the results. The training score rises by about 0.002 (from 0.9396 to 0.9416) and the test RMSE drops by about 0.74, an improvement of roughly 1%.

Score: 0.9416365489096074

RMSE: 62.87008070927842

I am sure this does not look like much, but let’s remember that this toy example is using a simple out-of-the-box model without any data treatment or cleanup. We are seeing mostly the effect of the sine and cosine transformation.

What’s really happening here is that, in real life, electricity demand doesn’t reset at midnight. And now your model finally sees that continuity.

Why You Need Both Sine and Cosine

Don’t give in to the temptation of using only sine because it feels like enough. One column instead of two. Cleaner, right?

Unfortunately, it breaks uniqueness. On a 24-hour clock, two different hours can produce the same sine value: midnight and noon both give 0, and 2 AM and 10 AM both give 0.5. Different times with identical encodings are bad because the model can no longer tell them apart, so the middle of the night looks just like midday. Not ideal unless you enjoy confused predictions.

Using both sine and cosine fixes this. Together, they give each hour a unique fingerprint on the circle. Think of it like latitude and longitude. You need both to know where you are.
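A quick way to convince yourself is to count distinct encodings. This sketch rounds to six decimals so that floating-point noise doesn’t hide the collisions; the variable names are illustrative only.

```python
import numpy as np

hours = np.arange(24)
angle = 2 * np.pi * hours / 24

# Round so tiny floating-point differences don't mask equal values.
sin_only = np.round(np.sin(angle), 6)
pairs = np.round(np.column_stack([np.sin(angle), np.cos(angle)]), 6)

n_unique_sin = len(np.unique(sin_only))         # distinct sine values
n_unique_pairs = len(np.unique(pairs, axis=0))  # distinct (sin, cos) rows

print(n_unique_sin)    # 13
print(n_unique_pairs)  # 24

# Sine alone cannot separate these hours...
print(sin_only[2], sin_only[10])  # 2 AM and 10 AM share one value
print(sin_only[0], sin_only[12])  # so do midnight and noon

# ...but the cosine column does.
print(pairs[2], pairs[10])
```

With sine alone, 24 hours collapse into 13 values. Add cosine and all 24 hours get their own point.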

Real-World Impact & Results

So, does this actually help models? Yes. Especially certain ones.

Distance-based models

KNN and SVMs rely heavily on distance calculations. Cyclical encoding prevents fake “long distances” at boundaries. Your neighbors actually become neighbors again.
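Here is a minimal sketch of what that means for a nearest-neighbor lookup. It uses plain NumPy rather than a real KNN model, and `nearest_hours` is a helper invented for this illustration.

```python
import numpy as np

hours = np.arange(24)
angle = 2 * np.pi * hours / 24

linear = hours.reshape(-1, 1).astype(float)                 # one column: the raw hour
cyclical = np.column_stack([np.sin(angle), np.cos(angle)])  # two columns: sin and cos


def nearest_hours(features: np.ndarray, query: int, k: int = 2) -> list:
    """Return the k hours closest to `query` by Euclidean distance."""
    dists = np.linalg.norm(features - features[query], axis=1)
    order = np.argsort(dists, kind="stable")
    return [int(h) for h in order if h != query][:k]


linear_neighbors = nearest_hours(linear, 23)
cyclical_neighbors = nearest_hours(cyclical, 23)

print(linear_neighbors)            # [22, 21]
print(sorted(cyclical_neighbors))  # [0, 22]
```

With the raw hour, 11 PM’s two closest neighbors are 10 PM and 9 PM, and midnight is the farthest point of all. With the cyclical features, midnight is back where it belongs, right next door.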

Neural networks

Neural networks learn faster with smooth feature spaces. Cyclical encoding removes sharp discontinuities at midnight. That usually means faster convergence and better stability.

Tree-based models

Gradient Boosted Trees like XGBoost or LightGBM can eventually learn these patterns. Cyclical encoding gives them a head start. If you care about performance and interpretability, it’s worth it.

When Should You Use This?

Always ask yourself the question: Does this feature repeat in a cycle? If yes, consider cyclical encoding.

Common examples are:

- Hour of day

- Day of week

- Month of year

- Wind direction (degrees)

If it loops, you might try encoding it like a loop.
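The only thing that changes between these features is the period. Below is one possible helper, `cyclical_encode`, which is a name made up for this sketch and not a library function. The day-of-week numbering (Monday = 0) is also an assumption; use whatever your data uses, as long as the period matches.

```python
import numpy as np


def cyclical_encode(values, period):
    """Map values that repeat every `period` units to (sin, cos) coordinates."""
    values = np.asarray(values, dtype=float)
    angle = 2 * np.pi * values / period
    return np.sin(angle), np.cos(angle)


def gap(sin_vals, cos_vals):
    """Distance between the first two encoded points."""
    return float(np.hypot(sin_vals[0] - sin_vals[1], cos_vals[0] - cos_vals[1]))


# Day of week, assuming Monday=0 ... Sunday=6 -> period 7
sunday_to_monday = gap(*cyclical_encode([6, 0], period=7))
monday_to_tuesday = gap(*cyclical_encode([0, 1], period=7))

# Month of year, 1..12 -> period 12
december_to_january = gap(*cyclical_encode([12, 1], period=12))
january_to_february = gap(*cyclical_encode([1, 2], period=12))

# Wind direction in degrees -> period 360
wind_359_to_1 = gap(*cyclical_encode([359, 1], period=360))
wind_0_to_180 = gap(*cyclical_encode([0, 180], period=360))

print(sunday_to_monday, monday_to_tuesday)
print(december_to_january, january_to_february)
print(wind_359_to_1, wind_0_to_180)
```

In each case the step across the boundary (Sunday to Monday, December to January, 359° to 1°) is as small as any other neighboring step.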

Before You Go

Time is not just a number. It’s a coordinate on a circle.

If you treat it like a straight line, your model can stumble at the boundaries and struggle to see the variable for what it is: a cycle that repeats with a pattern.

Cyclical encoding with sine and cosine fixes this elegantly, preserving proximity, reducing artifacts, and helping models learn faster.

So next time your predictions look weird around day changes, try this new tool you’ve learned, and let it make your model shine as it should.


GitHub Repository

Here’s the whole code of this exercise.

https://github.com/gurezende/Time-Series/tree/main/Sine%20Cosine%20Time%20Encode

References & Further Reading

[1] Encoding hours, Stack Exchange: https://stats.stackexchange.com/questions/451295/encoding-cyclical-feature-minutes-and-hours

[2] NumPy trigonometric functions: https://numpy.org/doc/stable/reference/routines.math.html

[3] Practical discussion on cyclical features: https://www.kaggle.com/code/avanwyk/encoding-cyclical-features-for-deep-learning

[4] Appliances Energy Prediction Dataset: https://archive.ics.uci.edu/dataset/374/appliances+energy+prediction